Many performance incidents are detected in production, but their causes accumulate during design, development, and integration, then surface under load.

Teams that address performance late absorb avoidable cost: rework, infrastructure overspend, incident recovery, and delayed releases. Teams that treat performance as a continuous engineering concern reduce failure risk and improve release predictability.

For example, two teams at BDC used Axify to analyze delivery flow and adjust review ownership and sequencing. Delivery time improved by 51%, and time spent in quality control dropped by 81%.

In three months, this led to a 10x ROI and $700k in recurring productivity gains per year.

We want to help you achieve your business goals, too.

That’s why, in this article, we will outline:

- How software performance engineering fits into the SDLC

- How it is structured in practice

- Which decisions improve performance without slowing delivery

Pro tip: Axify Intelligence helps you make the right decisions by analyzing your historical delivery data, recommending workflow changes, and allowing you to apply them directly. Contact Axify today.

What Is Software Performance Engineering (SPE)?

Software Performance Engineering (SPE) is a systematic and quantitative approach to developing software systems. The goal is for these systems to meet defined performance objectives from the very beginning of the development lifecycle. As such, you must treat performance as a design constraint and not as a late validation step.

This early focus prevents cost from accumulating over time.

When you find performance defects in production rather than during design or coding, they cost 10x to 100x more to fix. That cost reflects avoidable rework, regression risk, and roadmap disruption.

Pro tip: SPE is not a single activity like load testing or tuning. It is a discipline embedded across the software development life cycle, from architecture decisions to code reviews and integration pipelines.

So what changes in your daily work?

- Early on, you define measurable performance indicators such as p95 latency thresholds, throughput targets, and resource limits.

- Then, during design, you evaluate architectural tradeoffs against those targets, such as database selection, caching strategies, and service boundaries.

- During implementation, you profile critical paths, validate scalability assumptions, and integrate performance regression checks in continuous integration.

- Throughout the SDLC, you monitor performance metrics to confirm that assumptions hold as features evolve and load increases.

To learn more, you can watch this MIT lecture for a clear explanation of software performance engineering:

How AI Coding Tools Change Software Performance Engineering

AI coding assistants are accelerating software development, but they also increase the risk of performance issues entering production.

Tools such as GitHub Copilot, ChatGPT, and other AI-assisted coding environments can generate large amounts of working code quickly. However, that code is usually optimized for correctness and speed of implementation, not for performance efficiency.

This creates two important shifts for engineering leaders.

- First, code volume grows faster than architectural review capacity. Teams may introduce inefficient database queries, unnecessary service calls, or poorly scoped caching layers simply because more code is produced in less time.

- Second, performance regressions appear earlier and more frequently as teams iterate faster. Without systematic performance checks, these regressions accumulate quietly until they surface under real user load.

This is exactly why Software Performance Engineering becomes more important in AI-assisted development environments.

When performance targets, profiling practices, and automated regression checks are embedded into the SDLC, teams can move fast with AI tools without sacrificing system reliability or scalability.
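To make the "inefficient database queries" risk concrete, here is a minimal Python sketch of one pattern that is easy to miss in review: fetching related rows one at a time (often called an N+1 query) instead of using a single join. The schema, the table names, and the `CountingDb` helper are assumptions made up for illustration; they do not come from any specific codebase or AI tool.

```python
import sqlite3


class CountingDb:
    """Hypothetical wrapper that counts how many queries are issued."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.queries = 0

    def query(self, sql: str, params: tuple = ()) -> list:
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def make_db(n_authors: int = 50, n_posts: int = 200) -> sqlite3.Connection:
    # In-memory database with illustrative data only.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, title TEXT)"
    )
    conn.executemany(
        "INSERT INTO authors VALUES (?, ?)",
        [(i, f"author-{i}") for i in range(1, n_authors + 1)],
    )
    conn.executemany(
        "INSERT INTO posts VALUES (?, ?, ?)",
        [(i, (i % n_authors) + 1, f"post-{i}") for i in range(1, n_posts + 1)],
    )
    return conn


def titles_with_authors_n_plus_one(db: CountingDb) -> list:
    # One query for the posts, then one extra query per post.
    posts = db.query("SELECT title, author_id FROM posts ORDER BY id")
    result = []
    for title, author_id in posts:
        (name,) = db.query("SELECT name FROM authors WHERE id = ?", (author_id,))[0]
        result.append((title, name))
    return result


def titles_with_authors_joined(db: CountingDb) -> list:
    # A single JOIN returns the same rows in one round trip.
    return db.query(
        "SELECT p.title, a.name FROM posts p "
        "JOIN authors a ON a.id = p.author_id ORDER BY p.id"
    )


slow_db, fast_db = CountingDb(make_db()), CountingDb(make_db())
slow_rows = titles_with_authors_n_plus_one(slow_db)
fast_rows = titles_with_authors_joined(fast_db)
print(slow_db.queries, fast_db.queries)  # 201 vs. 1 for the same rows
```

Both functions are functionally correct, which is why this kind of code passes a correctness-focused review. Counting queries per request is one simple signal a team could add to code review or CI to catch it.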

So, let’s discuss the gains of SPE in more depth.

Benefits of Software Performance Engineering

Many teams delay performance engineering because they assume it adds overhead.

Modeling, validation, and performance reviews can look like extra work during already constrained delivery cycles. The hesitation is understandable.

However, late performance discovery affects release timing, infrastructure cost, and rework scope. SPE changes when those constraints are surfaced and how large they are when addressed.

These are the outcomes you should expect:

- Improved system performance and reliability: When performance expectations are defined early and validated continuously, structural bottlenecks are identified before production traffic exposes them. In fact, high-quality codebases contain 15x fewer defects than low-quality ones and resolve issues 124% faster. This reduces avoidable incidents tied to capacity limits, inefficient queries, or contention.

- Earlier detection of performance risks: Architectural assumptions about scaling, caching, or service boundaries are tested during design and development. As such, potential performance bottlenecks surface before they require cross-team refactoring or emergency scaling.

- Reduced cost of late-stage fixes: Performance defects caught during coding require refactoring of small components. The same issues discovered in production typically demand cross-team coordination and infrastructure changes. SPE reduces that compounding cost.

- More predictable software delivery: Stable performance behavior reduces release instability caused by late performance regressions. That supports steadier deployment frequency and more reliable lead time, which strengthens roadmap confidence.

- Better alignment between engineering effort and outcomes: Performance targets become your explicit decision criteria. That allows you to connect technical choices to business risk and long-term sustainability.

Now, let's go over the difference between SPE, performance testing, and APM.

Software Performance Engineering vs. Performance Testing

Software performance engineering (SPE) defines performance constraints and validates them throughout the SDLC. Performance testing evaluates how a mostly completed system behaves under load.

The difference is scope and timing.

Performance testing is typically executed after feature development, most often within QA. Its purpose is to measure system behavior under simulated load and identify bottlenecks before release.

Common activities include:

- Load testing

- Stress testing

- Spike testing

- Endurance testing

- Benchmarking

These tests act as a validation gate. They simulate production conditions and expose issues that affect latency, throughput, or reliability.

However, performance testing does not influence earlier architectural decisions.

If tests reveal database contention, blocking service calls, or inefficient data access patterns, remediation may require refactoring across services, schema redesign, or release delays.

Software performance engineering, in contrast, integrates performance criteria into:

- Architecture reviews

- Capacity modeling

- Implementation standards

- CI-based performance regression checks

- Ongoing monitoring against defined thresholds

SPE aims to prevent structural bottlenecks before they propagate across teams and services.

In short:

- Performance testing asks: Does the system hold under load?

- SPE asks: Was the system designed to meet load expectations from the start?

Watch this video for a clear SPE vs. performance testing breakdown:

Do you still need performance testing if you follow SPE?

Yes. Software Performance Engineering does not replace performance testing. It makes those tests far more effective.

When performance objectives are defined early and validated continuously, load tests are no longer the first time teams discover system limits. Instead, performance testing becomes a final confirmation that architectural decisions hold under production-like conditions.

- Without SPE, performance testing usually reveals structural problems late in the release cycle. Fixing those issues may require redesigning database schemas, rewriting services, or delaying deployment.

- With SPE in place, most performance risks are already addressed during architecture design, implementation, and CI regression checks. Performance testing then acts as a validation step.

That’s why strong engineering organizations use both:

- SPE prevents performance problems.

- Performance testing verifies the system behaves correctly at scale.

Software Performance Engineering vs. Application Performance Monitoring (APM)

Software performance engineering (SPE) defines performance constraints before and during development. Application performance monitoring (APM) analyzes system behavior in production.

The distinction is timing and control.

APM provides real-time visibility into:

- Request latency

- Error rates

- Transaction traces

- Infrastructure metrics

- Dependency performance

Its purpose is operational diagnosis. When latency increases or error rates spike, APM helps identify the affected service, query, or downstream dependency. It supports incident response, root cause analysis, and recovery measurement under real traffic conditions.

However, APM operates after architectural and implementation decisions are in place. It detects performance failures; it does not prevent the design choices that caused them.

SPE, in contrast, shapes those decisions earlier. It defines capacity targets, concurrency limits, and resource budgets during design and validates them during implementation. The goal is to reduce the likelihood and severity of runtime performance incidents.

In short:

- APM asks: What is happening in production right now?

- SPE asks: Were performance limits defined and validated before release?

Both are necessary. APM stabilizes live systems. SPE reduces the frequency and scope of performance-related incidents across the lifecycle.

Watch this short video if you want to learn more about APM:

Next, let's take a look at the core components and processes of SPE.

Core Components of Software Performance Engineering

Software performance engineering combines modeling, validation, monitoring, and feedback loops across the SDLC.

These components work together to define performance expectations, test architectural assumptions, and prevent regressions as the system evolves.

Software Performance Engineering Objectives and Constraints

Software performance engineering begins with explicit objectives and constraints.

Objectives define what acceptable performance looks like. These typically include:

- Latency targets (for example, p95 response time under defined load)

- Throughput requirements (requests per second, transactions per minute)

- Concurrency limits

- Resource budgets (CPU, memory, I/O)

- Availability or SLO commitments tied to performance behavior

Pro tip: Update your goals frequently. A large study by Perdoo across 250 companies, 15,000 employees, and 150,000 goals found that employees who updated progress on their goals more often achieved about 2x more goals than others.

Constraints define the operating boundaries of the system. These may include:

- Infrastructure limits (cloud instance types, network bandwidth)

- Cost ceilings

- Regulatory or compliance requirements

- Dependency performance characteristics

- Scaling limits of databases or third-party services

These objectives and constraints must be measurable and documented before implementation scales. Vague goals such as “fast” or “scalable” do not inform architectural decisions.

Clear performance targets allow teams to:

- Evaluate architectural tradeoffs

- Model expected load behavior

- Detect deviations during development

- Validate assumptions during integration

Without defined objectives and constraints, performance decisions are deferred until testing or production exposes the system's limits. With them, performance becomes an engineering variable that can be tested, monitored, and adjusted throughout the SDLC.
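One way to keep objectives measurable is to record them as data rather than prose. The sketch below is a hypothetical Python structure, not a standard format: the field names, the `checkout` path, and every threshold value are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerformanceObjective:
    """Hypothetical target for one user path at a stated load."""
    path: str
    load_rps: int                 # load at which the target applies
    max_p95_latency_ms: float
    min_throughput_rps: float
    max_error_rate: float         # fraction of failed requests, 0.0 to 1.0


@dataclass(frozen=True)
class Measurement:
    p95_latency_ms: float
    throughput_rps: float
    error_rate: float


def violations(objective: PerformanceObjective, measured: Measurement) -> list[str]:
    """Return a human-readable line for every target that was missed."""
    found = []
    if measured.p95_latency_ms > objective.max_p95_latency_ms:
        found.append(
            f"{objective.path}: p95 {measured.p95_latency_ms} ms "
            f"exceeds {objective.max_p95_latency_ms} ms at {objective.load_rps} RPS"
        )
    if measured.throughput_rps < objective.min_throughput_rps:
        found.append(
            f"{objective.path}: throughput {measured.throughput_rps} RPS "
            f"is below {objective.min_throughput_rps} RPS"
        )
    if measured.error_rate > objective.max_error_rate:
        found.append(
            f"{objective.path}: error rate {measured.error_rate} "
            f"exceeds {objective.max_error_rate}"
        )
    return found


# Illustrative values only.
checkout = PerformanceObjective("checkout", 500, 200.0, 500.0, 0.001)
staging_run = Measurement(p95_latency_ms=240.0, throughput_rps=510.0, error_rate=0.0004)
for problem in violations(checkout, staging_run):
    print(problem)
```

Because each target names the load it applies to, the same structure can be reused in design reviews, CI checks, and production alerts without the numbers drifting apart.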

Software Performance Engineering Architecture and System Design

Architecture determines how a system behaves under load. Once services, data stores, and communication patterns are established, performance characteristics become harder to change.

Performance-aware design starts with expected load profiles:

- Peak and average request volume

- Concurrency levels

- Read/write distribution

- Data growth projections

- Latency requirements by user path

These inputs shape structural decisions such as:

- Service boundaries and decomposition

- Synchronous vs. asynchronous communication

- Database selection and indexing strategy

- Caching layers and invalidation rules

- Load balancing and failover strategy

Scalability must be explicit. Horizontal scaling assumptions, replication models, and partitioning strategies should be validated against defined throughput and latency targets.

Capacity planning translates performance objectives into infrastructure requirements.

This includes estimating CPU, memory, storage I/O, and network bandwidth under expected load. It also involves modeling how usage growth affects those requirements over time.
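As a rough illustration of that translation, the Python sketch below estimates an instance count from peak throughput and projects it forward under compound growth. The per-instance capacity, the 60% utilization headroom, and the 5% monthly growth rate are assumed numbers for the example. A real plan would take them from load tests and traffic history.

```python
import math


def instances_needed(
    peak_rps: float, rps_per_instance: float, target_utilization: float = 0.6
) -> int:
    """Instances required so each one stays at or below the target utilization."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if peak_rps < 0 or rps_per_instance <= 0:
        raise ValueError("rates must be positive")
    return math.ceil(peak_rps / (rps_per_instance * target_utilization))


def projected_peak_rps(peak_rps: float, monthly_growth: float, months: int) -> float:
    """Compound growth projection of peak load."""
    return peak_rps * (1 + monthly_growth) ** months


today = instances_needed(peak_rps=1200, rps_per_instance=150)
next_year = instances_needed(
    peak_rps=projected_peak_rps(1200, monthly_growth=0.05, months=12),
    rps_per_instance=150,
)
print(today, next_year)  # 14 24
```

The model only covers throughput. Memory, storage I/O, and network limits would each need their own estimate, and the tightest one determines capacity.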

Architectural trade-offs are evaluated against measurable constraints:

- Is reduced latency worth increased infrastructure cost?

- Does stronger consistency justify lower throughput?

- Does service isolation improve resilience at the cost of inter-service latency?

Without performance-informed architecture, systems rely on optimistic assumptions. With it, scalability and resource consumption become deliberate design variables rather than post-release corrections.

Software Performance Engineering Metrics and Models

Software performance engineering relies on defined metrics and explicit models. Without both, performance discussions remain qualitative.

Key Performance Indicators in SPE

SPE focuses on measurable indicators that reflect system behavior under load:

- Latency: Response time measured at defined percentiles (p95, p99)

- Throughput: Requests per second or transactions per minute

- Concurrency: Number of simultaneous users or sessions supported

- Error rate: Failed requests under load

- Resource utilization: CPU, memory, disk I/O, and network consumption

- Queue depth and wait time: Indicators of contention or saturation

These metrics must be tied to defined load conditions. Latency without context (e.g., under 10 RPS vs. 1,000 RPS) does not inform design decisions.
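To show why latency is reported at percentiles rather than as an average, here is a small Python sketch using the nearest-rank method. That method is one common percentile definition among several, and the sample data is invented for illustration.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Illustrative run: 90 fast requests and 10 slow ones.
latencies_ms = [20.0] * 90 + [900.0] * 10

mean_ms = sum(latencies_ms) / len(latencies_ms)
print(mean_ms)                        # 108.0
print(percentile(latencies_ms, 50))   # 20.0
print(percentile(latencies_ms, 95))   # 900.0
```

The mean suggests a moderately slow system, while the percentiles show what actually happens: most requests are fast and one in ten is very slow. That is the behavior a p95 or p99 target is meant to constrain.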

Predictive vs. Observational Metrics

SPE uses two categories of measurement:

Predictive metrics are used before full-scale deployment. They estimate how a system will behave under expected load. Examples include:

- Capacity models based on expected traffic growth

- Queueing theory calculations

- Resource consumption projections per request

- Load test simulations in staging environments

These metrics guide architectural decisions and infrastructure planning.
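As an example of a queueing theory calculation, the Python sketch below uses the textbook M/M/1 model, where mean response time is 1 / (service rate - arrival rate). This is a simplification: it assumes a single server, random (Poisson) arrivals, and exponentially distributed service times, which real services only approximate. The rates are illustrative.

```python
def mm1_utilization(arrival_rps: float, service_rps: float) -> float:
    """Fraction of time the server is busy."""
    return arrival_rps / service_rps


def mm1_mean_response_ms(arrival_rps: float, service_rps: float) -> float:
    """Mean time in system (waiting plus service) for an M/M/1 queue, in milliseconds."""
    if arrival_rps >= service_rps:
        raise ValueError("queue is unstable: arrivals meet or exceed service capacity")
    return 1000.0 / (service_rps - arrival_rps)


# A server that can handle 100 requests per second (10 ms per request when idle).
for load in (50, 90, 99):
    print(load, mm1_utilization(load, 100), mm1_mean_response_ms(load, 100))
# 50 RPS -> 20 ms, 90 RPS -> 100 ms, 99 RPS -> 1000 ms
```

Even under these idealized assumptions, the result is useful for planning: response time grows sharply as utilization approaches 100%, which is why capacity targets usually leave headroom instead of planning for full utilization.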

Observational metrics are collected from running systems. They reflect actual behavior under real workloads. Examples include:

- Production latency distributions

- Real concurrency peaks

- Live error rates

- Resource saturation patterns

Observational data validates predictive assumptions. When deviations appear, models are adjusted and constraints re-evaluated.

Remember: Effective SPE combines both. Predictive models inform design, while observational metrics confirm whether those assumptions hold as usage scales.

Software Performance Engineering Feedback and Measurement Loops

Feedback loops ensure performance regressions are detected and corrected as the system evolves.

During development, performance checks run regularly, so regressions are visible before they spread across the codebase. This can include:

- Performance assertions in automated tests

- Resource budgets enforced in CI

- Targeted load tests for critical flows when dependencies or data patterns change
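A performance assertion in an automated test can be as small as the Python sketch below. The `build_index` function stands in for a real hot path and the 250 ms budget is an arbitrary placeholder. Both are assumptions, as is the choice to compare the p95 of repeated runs rather than a single timing.

```python
import statistics
import time
from typing import Callable


def measure_ms(fn: Callable[[], object], runs: int = 50, warmup: int = 5) -> list[float]:
    """Time repeated calls to fn and return per-call durations in milliseconds."""
    for _ in range(warmup):
        fn()  # warm caches before measuring
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def p95(samples: list[float]) -> float:
    # statistics.quantiles with n=100 returns 99 cut points; index 94 is the 95th percentile.
    return statistics.quantiles(samples, n=100)[94]


def assert_within_budget(samples: list[float], budget_ms: float) -> None:
    observed = p95(samples)
    if observed > budget_ms:
        raise AssertionError(f"p95 {observed:.2f} ms exceeds budget {budget_ms} ms")


def build_index() -> list[int]:
    """Hypothetical hot path used only for this example."""
    return sorted(range(10_000), reverse=True)


def test_build_index_latency() -> None:
    # Placeholder budget; a real one would come from the documented objectives.
    assert_within_budget(measure_ms(build_index), budget_ms=250.0)
```

Wall-clock timings on shared CI runners are noisy, so budgets in tests like this are usually set with generous margins and paired with more controlled load tests for critical flows.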

In production, performance signals inform planning. For example:

- If p99 latency spikes under specific traffic patterns, those conditions become explicit design requirements in the next cycle.

- If timeouts cluster around a dependency, the backlog should include isolation, caching, or asynchronous alternatives.

The loop closes when measurement changes behavior.

Post-incident reviews should not stop at identifying failure. They should update performance targets, assumptions, and validation steps so the same failure pattern is less likely to recur.

The Software Performance Engineering Process

Software performance engineering follows a structured, repeatable process.

It begins by defining measurable performance requirements, continues with validation during development, and extends into production monitoring that informs future design decisions. Each phase reinforces the next.

The goal is systematic control over how performance expectations are defined, tested, and revised as the system evolves.

Define Performance Requirements

Start by translating business needs into technical performance criteria. For example:

- If a payment flow supports peak campaigns, define the maximum acceptable p95 latency under expected traffic.

- If a reporting service runs overnight jobs, define completion time thresholds and resource ceilings.

Requirements should specify measurable limits: response times, throughput targets, concurrency levels, and acceptable error budgets.

Thresholds also clarify tradeoffs.

For instance, a strict latency requirement may increase infrastructure cost. A relaxed target may reduce cost but increase abandonment risk. These decisions belong in planning, not in post-incident reviews.

Also, early validation reduces waste. When teams rely on quality-assurance metrics during design and coding, they expose performance risks before integration amplifies them.

In fact, research shows that using early quality-assurance metrics can reduce testing effort by up to 34% and improve testing efficiency by about 50%. That gain comes from preventing rework.

So, small adjustments during design are cheaper than architectural changes after release.

Continuous Performance Validation

Requirements mean little without ongoing validation.

So, you should run performance checks during development. That includes:

- Lightweight load simulations for critical endpoints

- Query profiling for new database calls

- Automated thresholds inside CI pipelines that fail builds when latency budgets are exceeded

Regression prevention is the main objective here.

A single inefficient change can add milliseconds to a hot path. If repeated across multiple merges, that drift compounds. Regular validation stops incremental degradation before it becomes visible to users.
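The compounding effect can be shown with a few lines of Python. The sketch assumes an illustrative 2% slowdown per merge and a 5% tolerance; the numbers and the `within_tolerance` helper are hypothetical. It compares two gating strategies: checking each merge against the previous one, and checking each merge against a pinned baseline.

```python
def within_tolerance(baseline_ms: float, candidate_ms: float, tolerance: float = 0.05) -> bool:
    """True when the candidate is no more than `tolerance` slower than the baseline."""
    return candidate_ms <= baseline_ms * (1 + tolerance)


# Illustrative p95 latency after each of ten merges, each adding 2%.
p95_by_merge = [100.0 * 1.02 ** i for i in range(11)]

# Gate 1: compare each merge only to the one before it.
stepwise_passes = all(
    within_tolerance(previous, current)
    for previous, current in zip(p95_by_merge, p95_by_merge[1:])
)

# Gate 2: compare every merge to the pinned baseline (merge 0).
first_failure = next(
    index
    for index, value in enumerate(p95_by_merge)
    if not within_tolerance(p95_by_merge[0], value)
)

total_drift = p95_by_merge[-1] / p95_by_merge[0] - 1
print(stepwise_passes)          # True: no single merge looks bad
print(first_failure)            # 3: the pinned baseline catches the drift
print(round(total_drift, 3))    # 0.219: about 22% slower after ten merges

# In a CI job, a nonzero exit code would typically fail the build.
exit_code = 0 if within_tolerance(p95_by_merge[0], p95_by_merge[-1]) else 1
```

The design point is the choice of baseline. A gate that only compares against the previous build lets small regressions through indefinitely, while a pinned baseline, updated deliberately, makes cumulative drift visible.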

This stage changes how you work day to day.

- During code reviews, you assess the performance impact of new queries or synchronous calls.

- Design documents specify expected load patterns and latency budgets before implementation starts.

- CI pipelines enforce those budgets by failing builds when thresholds are exceeded.

Over time, performance stops being a separate activity handled by a specialist and becomes a standard part of how your team writes and reviews code.

Monitor and Learn

Production traffic will expose behavior that staging environments cannot replicate. Monitoring ensures those signals inform the next engineering decision.

In addition, continuous monitoring in DevOps pipelines reduces downtime by 30% and incident resolution time by 40%, as production metrics feed back into development for proactive adjustments.

We advise you to track performance under real conditions:

- Throughput at peak load

- Latency percentiles (p95, p99) by service

- Error distribution across dependencies

- Resource saturation patterns

When deviations appear, respond structurally.

- If a dependency fails under burst traffic, introduce queues, rate limits, or circuit breakers.

- If database contention increases during batch jobs, adjust scheduling, indexing, or partitioning.

- If latency degrades after feature releases, review recent changes for inefficient queries or blocking calls.
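Of the structural responses listed above, a circuit breaker is the easiest to sketch in a few lines. The Python class below is a simplified illustration, not a production implementation: the thresholds are arbitrary, it is not thread-safe, and the names `CircuitBreaker` and `CircuitOpenError` are made up for this example.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_after_s: float = 30.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock  # injectable so the behavior can be tested without sleeping
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], object]) -> object:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                # Fail fast instead of piling more load on a struggling dependency.
                raise CircuitOpenError("dependency call skipped while circuit is open")
            # Cool-down elapsed: allow one trial call; a single failure reopens the circuit.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result
```

A caller would wrap each dependency request, for example `breaker.call(lambda: fetch_rates())` with a hypothetical `fetch_rates` function, and treat `CircuitOpenError` as a signal to use a cached value or a degraded response.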

Remember: Monitoring only adds value when it changes future design or validation steps. Each production insight should translate into a design or process adjustment. Over time, that cycle reduces repeat failures and stabilizes delivery without slowing feature work.

Roles Involved in Software Performance Engineering

Software performance engineering isn’t owned by just one specialist. You need clear responsibilities across teams.

Here are the primary roles and what they actually own in practice:

Performance Engineers

Performance engineers define measurable performance targets, design validation strategies, and analyze bottlenecks at the system level.

They build load models, review architectural assumptions, and translate production incidents into design corrections. Their role connects requirements, testing, and monitoring so that performance decisions are based on data.

Software Developers

Developers make implementation decisions that directly affect performance.

They write code within defined latency budgets, resource limits, and concurrency constraints. During design and code reviews, they assess how new features affect query volume, network calls, and memory usage. They remediate regressions detected through automated performance checks.

Platform and Infrastructure Teams

Infrastructure decisions determine how applications behave under load.

Platform teams manage capacity planning, scaling policies, and environment consistency. They configure observability pipelines, enforce resource quotas, and validate that infrastructure behavior aligns with architectural assumptions.

Clear ownership prevents performance gaps between development and operations.

Tools Used in Software Performance Engineering

Here are the primary tool groups used in SPE and what they actually help you control.

Performance Testing Tools

Performance testing tools simulate load before release. That includes:

- Load testing to validate expected traffic

- Stress testing to find breaking points

- Spike testing to assess sudden surges

- Endurance testing to detect resource leaks over time

These tools answer a focused question: how does the system behave under controlled pressure?

They measure latency, throughput, and error rates under predefined scenarios. However, they operate on a built system.

If architectural assumptions were flawed, testing exposes the issue but does not explain why it happened upstream. Testing remains essential, but it is a verification step, not a design strategy.

Application Performance Monitoring (APM)

APM tools observe live systems in production. They provide runtime tracing, infrastructure metrics, transaction monitoring, and log analysis. When an incident occurs, APM helps you diagnose root causes by correlating service calls, database latency, and infrastructure saturation.

This layer focuses on runtime behavior. It tells you what is happening in production and where the failure manifests. It does not analyze how planning decisions, review delays, or batching practices contributed to that outcome.

Monitoring closes the operational feedback loop, but it does not evaluate workflow efficiency.

Engineering Intelligence Platforms

Engineering intelligence platforms operate at the delivery system level: they analyze how work moves through your development lifecycle.

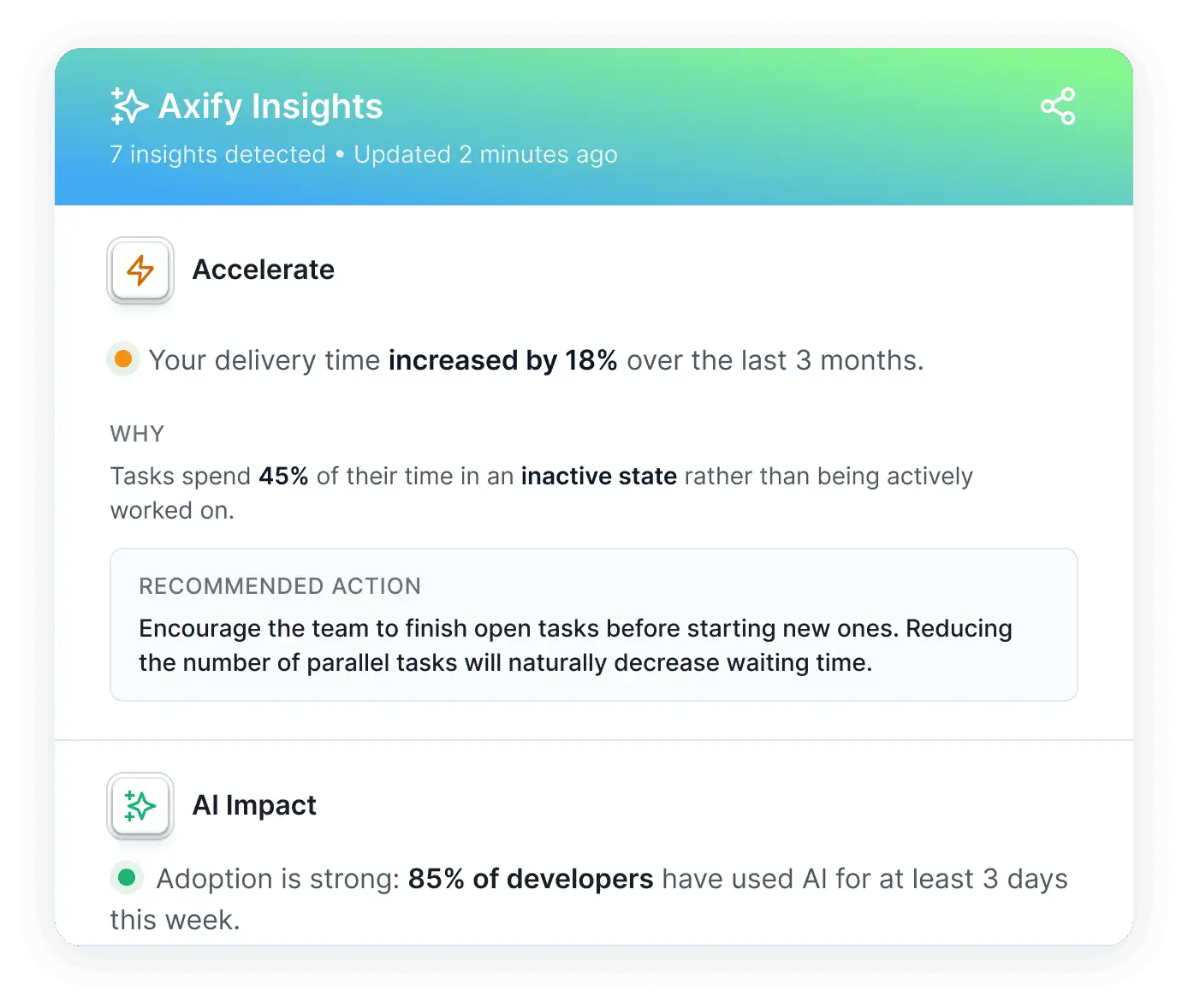

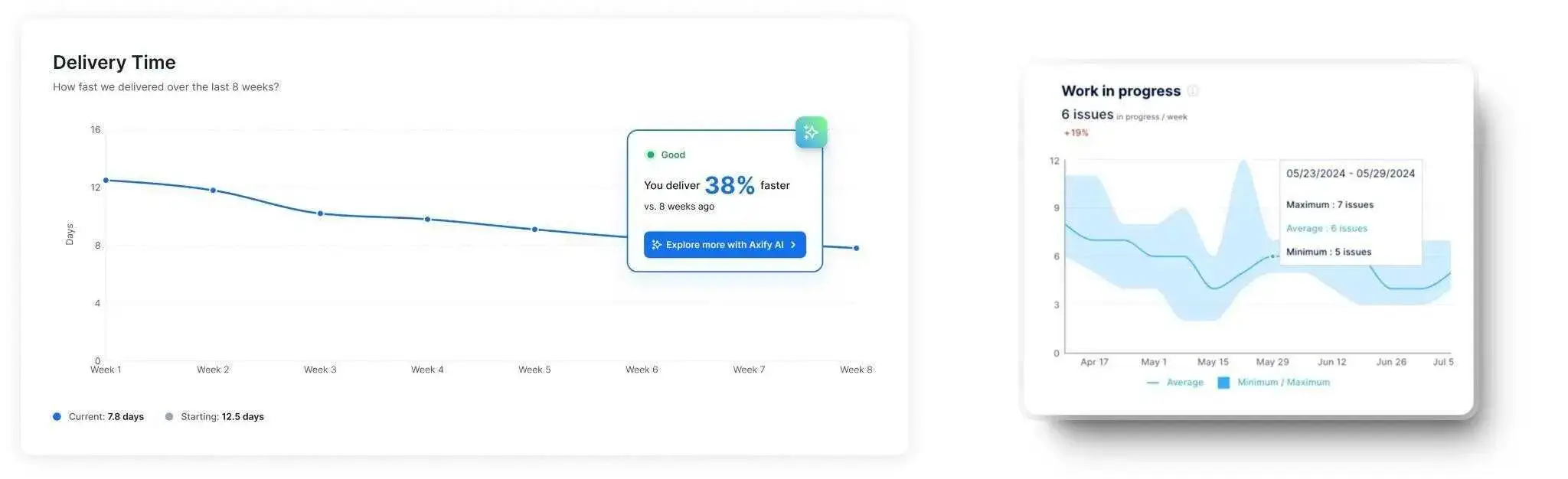

Axify operates at this level. It gives you visibility and helps you make the right decisions to power your SPE.

The platform aggregates SDLC data across Jira, Azure DevOps, GitHub, and GitLab. It also imports data from AI coding agents like GitHub Copilot, Claude Code, and Cursor. Workflow phases such as coding time, pickup time, review time, and deployment time are analyzed to expose delays and handoff friction.

Delivery behavior is then correlated with outcomes like change failure rate and failed deployment recovery time, so you can see how workflow decisions influence production stability.

Axify’s Value Stream Mapping tool shows you how work flows from one stage of the SDLC to another, exposing team trends and possible issues.

As such, you feed your SPE analysis with real data.

You can understand whether review bottlenecks, large batch sizes, or delayed merges increase risk before deployment. That is delivery system analysis.

With Axify Intelligence, you gain a decision partner, too.

It detects bottlenecks, explains what changed in your workflow, and recommends actions tied to delivery constraints.

The embedded AI assistant understands your historical delivery data, surfaces structured executive summaries, and lets you ask targeted questions about trends or regressions. It can also support decision-making by suggesting policy adjustments such as limiting work in progress or redistributing review ownership.

As you can see, Axify goes beyond mere testing or runtime monitoring.

Instead, it helps you see how engineering behavior shapes your performance outcomes and helps you make the right decisions based on those findings.

Common Challenges in Software Performance Engineering

Even with a defined process, structural pressures inside delivery may undermine your performance discipline.

Here are the most common challenges you can face in practice:

- Balancing performance with feature delivery: Roadmap pressure pushes teams to prioritize visible functionality. As a result, performance checks are deferred or scoped down. That tradeoff leads to technical risk accumulation. When latency or saturation issues surface later, feature work pauses for unplanned fixes. What looked like acceleration becomes long-term disruption.

- Managing complexity in distributed systems: Modern architectures rely on microservices, third-party APIs, queues, and autoscaling infrastructure. A single slow dependency can cascade across services. Performance issues may arise from interaction patterns, concurrency assumptions, or traffic spikes that were underestimated during design.

- Integrating performance practices consistently: Many teams struggle to stay consistent. Red flags include letting new services bypass validation or failing to update thresholds after architectural changes. Without reinforcement in planning, reviews, and CI pipelines, discipline erodes.

- Turning metrics into actionable decisions: Dashboards show metrics like latency, throughput, and failure rates. The challenge is attribution and ownership. If metrics are not tied to specific services, teams, or backlog actions, trends remain informational rather than corrective.

Power Your Software Performance Engineering with Axify

Performance failures rarely come only from “slow code.” They typically come from how work moves through planning, implementation, review, and deployment.

So even when a load test or an APM trace points to a bottleneck, the upstream causes can still be hiding in batch size, review queues, or inconsistent team practices that keep shipping risk forward.

Axify gives you delivery-level visibility that helps connect those upstream behaviors to downstream performance risk.

This shows up in a few concrete ways:

- Delivery friction: See where work is waiting, for how long, and in which workflow phase delays accumulate (for example, pickup delays versus review delays).

- Practice inconsistency: Compare teams and projects to spot where standards drift, such as review policies, work-in-progress levels, or batching patterns.

- Structural causes of performance degradation: Identify patterns that increase risk over time, such as oversized pull requests, long review backlogs, or slow handoffs between stages.

This is why Axify complements performance testing and APM.

Testing validates behavior under load, and APM diagnoses runtime behavior in production. Axify focuses on the process and people side: how delivery decisions and workflow constraints shape what reaches production and how risky that change is.

Book a demo with Axify today to see how our AI decision layer supports performance planning, validation, and remediation using your delivery data.

FAQ

Is software performance engineering the same as performance optimization?

No. Performance optimization usually refers to improving an existing system after performance problems appear. Software performance engineering focuses on preventing those problems during design and development by defining performance objectives and validating them throughout the SDLC.

How is software performance engineering different from scalability engineering?

Scalability engineering focuses specifically on how systems handle increasing traffic or data volume. Software performance engineering is broader: it defines overall performance behavior, including latency, throughput, and resource efficiency, while also addressing scalability as one component of system design.

What is an example of software performance engineering in practice?

A common SPE practice is defining a latency target during system design, such as keeping p95 response time below 200 milliseconds under peak traffic. Engineers then validate architectural choices, database queries, and service communication patterns throughout development to ensure that the system can meet that target before release.

When should software performance engineering start in the SDLC?

Software performance engineering should start during system design and architecture planning. Performance constraints such as latency budgets, throughput targets, and concurrency limits influence architectural decisions like service decomposition, caching strategies, and database selection. Starting SPE after implementation limits the ability to correct structural issues without major refactoring.

What skills are required for software performance engineering?

Software performance engineering combines multiple skills, including system architecture design, load modeling, performance testing, observability analysis, and capacity planning. Engineers also need to understand how application code, infrastructure behavior, and delivery workflows interact to influence latency, throughput, and system reliability.