Shipping code quickly can look like a trade-off with stability. Faster pull requests can shorten release cycles, yet rushed reviews or delayed test feedback can introduce defects that surface later in production.

But your engineering teams don’t need to trade speed for quality. You need to understand how to optimize both within your software delivery workflow.

You’re on the right page for that.

In this article, we’ll discuss how review, test, and release mechanics influence speed and quality. We will also compare practical ways to align both outcomes.

Pro tip: Axify’s AI engineering assistant (Axify Intelligence) analyzes your delivery data to surface bottlenecks and recommend workflow adjustments that you can implement in real time. Get in touch to learn more!

What Is Speed in Software Development (And Why It Matters)

Speed in software delivery refers to how quickly your team moves work from start to production. It includes:

- How quickly code changes move through development and pull request submission

- How quickly review and CI pipelines provide feedback

- How quickly testing and validation complete

- How quickly teams can deploy changes safely and frequently

In practice, this reflects how fast work travels through coding, PR review, CI, and release workflows, from the first commit to the moment the feature is deployed to production.

Example of where the time goes for a single change:

- Coding time: 2 hours

- PR review wait: 1 day

- CI queue: 40 minutes

- Deployment window: 2 days
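
To make the example concrete, here is a minimal sketch that adds up the four stages and shows each stage's share of the total. It assumes the stages run one after another with no overlap, which is a simplification; the variable names are ours.

```python
from datetime import timedelta

# Stage durations taken from the example above.
# Assumption: stages are strictly sequential and do not overlap.
stages = {
    "coding": timedelta(hours=2),
    "pr_review_wait": timedelta(days=1),
    "ci_queue": timedelta(minutes=40),
    "deployment_window": timedelta(days=2),
}

total = sum(stages.values(), timedelta())
total_hours = total.total_seconds() / 3600

for name, duration in stages.items():
    share = duration / total  # dividing two timedeltas yields a float
    hours = duration.total_seconds() / 3600
    print(f"{name:<18} {hours:6.2f} h  {share:6.1%}")

print(f"{'total':<18} {total_hours:6.2f} h")
```

Under that assumption, the change takes about 74.7 hours to reach production, and coding accounts for less than 3% of it. The rest is waiting.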

Remember: Delivery speed is best understood as flow velocity, or how fast work moves through the whole system. This matters because software delivery is a network of interacting stages, so a delay in one stage holds up every stage after it.

Let’s say a small pull request enters review quickly, tests run sooner in continuous integration, and engineers receive feedback while the context of the change is still fresh. That shorter loop reduces rework and supports faster iteration with less delivery friction.

As a result, prioritizing speed improves how work moves through review, testing, and release.

These are the benefits of prioritizing speed:

- Competitive advantage: Faster delivery allows quicker responses to user feedback and market shifts.

- Faster product-market fit: Rapid iterations help validate ideas before committing larger engineering efforts.

- Reduced batch size: Smaller work items move through reviews and testing with less friction.

- More learning cycles: Frequent releases create more opportunities to validate code in production.


Let's go over quality next.

What Is Quality in Software Development (And Why It Cannot Be Ignored)

Quality in software development refers to how reliably a system behaves in production and how safely it can evolve over time. This means writing and reviewing source code in a way that keeps systems stable today and easier to change in the long run.

However, reliability alone does not fully capture software quality. Stable systems also depend on:

- Maintainability

- Consistency

- Predictability

Well-structured codebases, readable implementations, and consistent review practices prevent minor defects from propagating into larger system failures.

This matters because delivery does not stop at deployment. A small defect in production might trigger incident response, rollbacks, or emergency fixes.

Industry research supports the cost of these late failures.

A commonly cited estimate holds that fixing a bug in production can cost up to 100 times more than fixing it during the design phase. Strong internal quality practices reduce that exposure and protect reliability and long-term stability.

As a result, prioritizing software quality leads to several benefits:

- Lower long-term costs: Fewer late-stage fixes and emergency patches.

- Fewer incidents: More stable releases and predictable deployments.

- Sustainable development pace: Engineers spend less time responding to production incidents and emergency fixes.

- Higher customer trust: Stable systems improve overall user confidence.

Side note: AI can speed up detection and recovery, but it does not eliminate the impact of defects in production. Without strong quality practices, faster delivery can increase instability.


This leads us to a new point.

Metrics That Measure Software Delivery Speed

Speed in software delivery must be measured consistently across the workflow. Below are the indicators engineering leaders review when evaluating development speed and identifying friction in software delivery workflows.

Core Speed Metrics

Delivery speed depends on how quickly work flows through reviews, testing, and deployment stages. Because each step can introduce a delay, teams track several indicators to understand how work flows and where time accumulates.

- Cycle time: Measures the elapsed time between starting work on an item and delivering it to your clients. Short cycle time usually indicates small pull requests and fast feedback loops. Industry benchmarks show that the top 25% of successful engineering teams achieve a cycle time of 1.8 days. That pace usually reflects disciplined review practices and consistent continuous testing within CI pipelines.

- Lead time: Reflects the total time from the moment a stakeholder makes a request until the feature runs in production and becomes usable for end users. Tracking this metric helps you understand how quickly ideas move from request to real customer value.

- Deployment frequency: Shows how often new code reaches production. Frequent releases typically indicate smaller batches of work and stronger release automation.

- Flow velocity: Measures how many work items move through the system over time. Higher flow velocity usually signals less waiting across review, test, and release stages.

- PR merge time: Reflects how long pull requests wait for review and approval before they are merged. Large PRs or overloaded reviewers usually increase this time, which slows overall delivery.
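
As a rough illustration of how some of these indicators can be derived from timestamps, the sketch below computes median cycle time, median PR merge time, and deployment frequency. The work items, the seven-day window, and all names are hypothetical; real data would come from your issue tracker and source control, and each item is assumed to be deployed on its own.

```python
from datetime import datetime
from statistics import median

# Hypothetical work items: (work started, PR opened, PR merged, deployed).
WORK_ITEMS = [
    ("2024-03-04T09:00", "2024-03-04T15:00", "2024-03-05T11:00", "2024-03-05T16:00"),
    ("2024-03-05T10:00", "2024-03-06T10:00", "2024-03-08T10:00", "2024-03-08T15:00"),
    ("2024-03-06T13:00", "2024-03-06T17:00", "2024-03-07T09:00", "2024-03-07T12:00"),
]
OBSERVATION_DAYS = 7  # assumed reporting window


def hours_between(start: str, end: str) -> float:
    """Elapsed hours between two ISO 8601 timestamps."""
    delta = datetime.fromisoformat(end) - datetime.fromisoformat(start)
    return delta.total_seconds() / 3600


# Cycle time: from starting work to delivery in production.
cycle_times = [hours_between(started, deployed) for started, _, _, deployed in WORK_ITEMS]
# PR merge time: from opening the pull request to merging it.
merge_times = [hours_between(opened, merged) for _, opened, merged, _ in WORK_ITEMS]

median_cycle_time_h = median(cycle_times)
median_merge_time_h = median(merge_times)
# Assumption: one deployment per work item.
deployments_per_week = len(WORK_ITEMS) * 7 / OBSERVATION_DAYS

print(f"Median cycle time:    {median_cycle_time_h:.1f} h")
print(f"Median PR merge time: {median_merge_time_h:.1f} h")
print(f"Deployment frequency: {deployments_per_week:.1f} per week")
```

We use the median rather than the mean here because a single slow item (the second one in this sample) would otherwise dominate the picture.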

Measuring Speed Before and After AI Adoption

AI coding agents can change how quickly code gets written, but that does not automatically mean work reaches production faster.

Side note: We discuss whether AI coding assistants really save developers’ time here, and the short answer is yes, in general, but not in all cases. In practice, most teams are still early in adoption, which means there is significant untapped potential to unlock.

That’s why you need to measure whether AI improves delivery speed or just shifts delays into review, validation, or merge stages. Then you can identify where further improvements are possible.

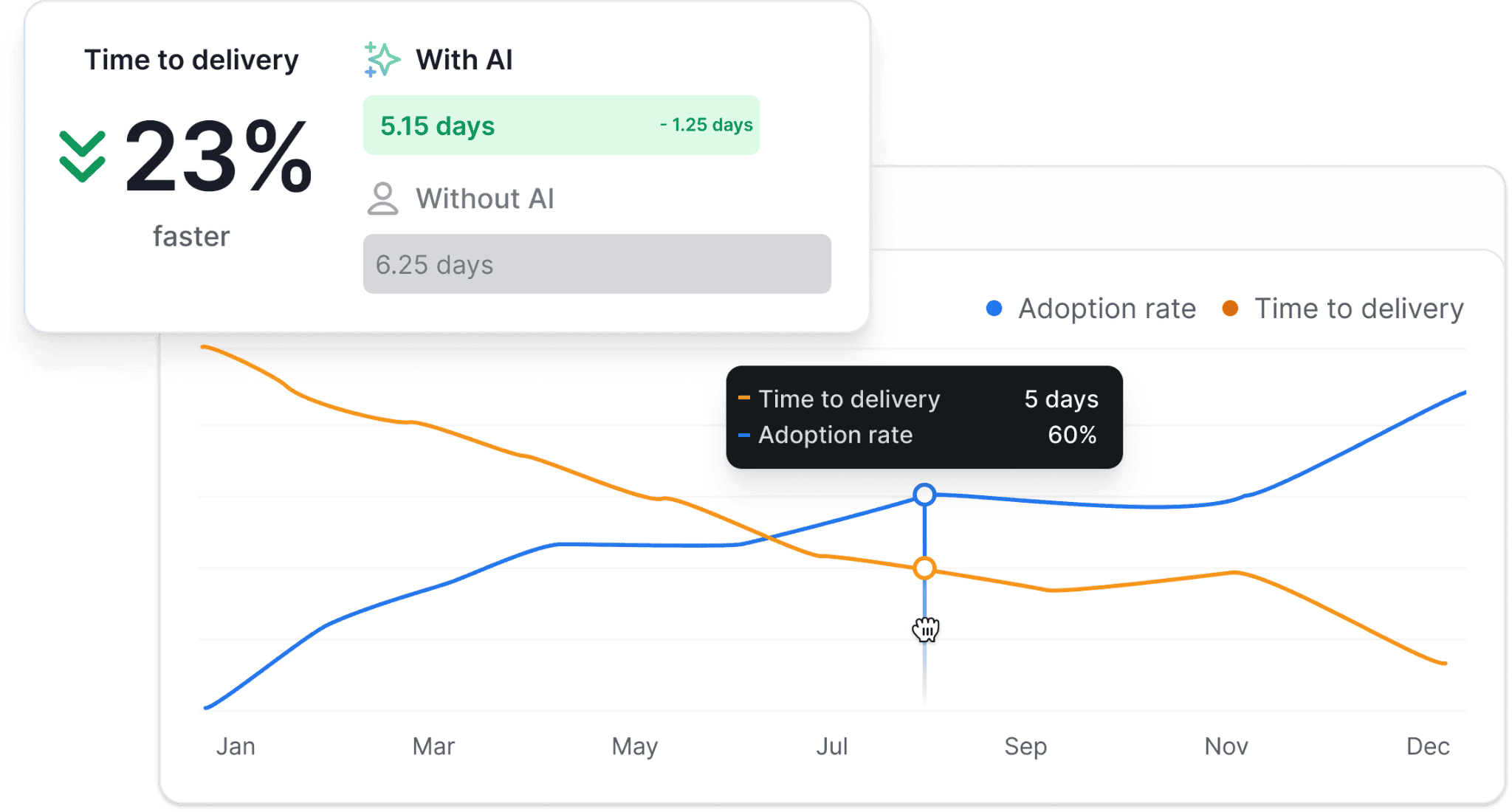

This is where Axify’s AI Impact feature can help.

It connects your AI usage data with delivery performance so you can compare team results before and after AI adoption. You can also track differences by tool, team, or organization, and see whether AI support is actually speeding up delivery.

Axify does this by correlating AI adoption with the speed metrics used to evaluate delivery performance. It helps you evaluate several indicators to understand these changes:

- Throughput: Whether teams complete more work after AI support is introduced.

- Cycle time: Whether work moves faster from start to completion.

- Delays: Whether AI reduces waiting time or adds friction and bottlenecks elsewhere in the workflow.

- PR merge time: Whether AI-assisted changes move through review and merge faster or slow down in review.

From our perspective, comparing speed metrics before and after AI adoption shows you whether AI is actually improving speed or simply moving the slowdown somewhere else.
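
A before-and-after comparison of this kind can be sketched in a few lines. The numbers below are invented for illustration and the function name is ours; this is not how Axify computes its results, only a simple way to picture the idea.

```python
from statistics import median

# Hypothetical samples in hours, one value per work item.
before_ai = {"cycle_time_h": [52, 40, 61, 47, 55], "pr_merge_h": [10, 14, 9, 12, 11]}
after_ai = {"cycle_time_h": [45, 38, 50, 41, 44], "pr_merge_h": [16, 19, 13, 18, 15]}


def percent_change(before, after):
    """Relative change of the median in percent; negative means faster."""
    b, a = median(before), median(after)
    return (a - b) / b * 100


changes = {metric: percent_change(before_ai[metric], after_ai[metric]) for metric in before_ai}

for metric, change in changes.items():
    if change < 0:
        direction = "faster"
    elif change > 0:
        direction = "slower"
    else:
        direction = "unchanged"
    print(f"{metric}: {change:+.1f}% ({direction})")
```

In this made-up sample, median cycle time drops by about 15% while median PR merge time rises by about 45%. That is the pattern to watch for: code gets written faster, but the delay moves into review.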

But let's not forget about software quality.

Metrics That Measure Software Quality

Here, we’ll analyze the metrics commonly used to evaluate reliability and long-term maintainability in modern engineering environments.

Core Quality Metrics

Reliable systems depend on consistent validation throughout development, testing, and release stages. To avoid quality issues appearing after deployment, use indicators that expose risk earlier in delivery.

- Change failure rate (DORA): Measures the percentage of deployments that cause incidents, rollbacks, or degraded functionality. This indicator connects your release practices with real operational outcomes. The DORA 2025 report shows that only 8.5% of teams maintain a change failure rate between 0% and 2%.

- Mean time to recovery (MTTR): Also known as failed deployment recovery time or time to restore service, this metric reflects how quickly systems return to normal operation after a production incident. Short recovery times usually indicate effective monitoring, clear ownership, and well-tested rollback procedures.

- Defect escape rate: Tracks how many defects pass through testing and appear in production. Higher escape rates usually signal gaps in automated validation or insufficient test coverage during development.

- Rework rate: Measures how often delivered work requires follow-up fixes or revisions. High rework usually appears when work batches are large or when code clarity is limited.

- Code review quality: Reflects how effectively reviewers identify risks before merging pull requests. Thorough reviews reduce hidden defects and maintain consistent review standards across the codebase.
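
The first three indicators reduce to simple ratios. Here is a minimal sketch, assuming you already have a list of deployments flagged as failed or not, plus defect counts split by where they were found. The data, the `Deployment` class, and the field names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Deployment:
    name: str
    failed: bool  # caused an incident, rollback, or degraded functionality
    minutes_to_restore: Optional[float] = None  # set only for failed deployments


# Hypothetical data for one reporting period.
deployments = [
    Deployment("d1", False),
    Deployment("d2", True, 45),
    Deployment("d3", False),
    Deployment("d4", False),
    Deployment("d5", True, 75),
    Deployment("d6", False),
    Deployment("d7", False),
    Deployment("d8", False),
]
defects_caught_before_release = 17
defects_found_in_production = 3

failures = [d for d in deployments if d.failed]

# Change failure rate: share of deployments that failed.
change_failure_rate = 100 * len(failures) / len(deployments)
# MTTR: average time to restore service after a failed deployment.
mean_time_to_recovery = mean(d.minutes_to_restore for d in failures)
# Defect escape rate: share of all known defects that reached production.
total_defects = defects_caught_before_release + defects_found_in_production
defect_escape_rate = 100 * defects_found_in_production / total_defects

print(f"Change failure rate: {change_failure_rate:.1f}%")
print(f"MTTR:                {mean_time_to_recovery:.0f} min")
print(f"Defect escape rate:  {defect_escape_rate:.1f}%")
```

The hard part in practice is not the arithmetic but agreeing on what counts as a failed deployment and linking incidents back to the change that caused them.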

Measuring AI’s Impact on Code Quality

AI tools now assist many parts of the development workflow. Yet faster code generation does not automatically improve reliability. For that reason, engineering leaders need visibility into how AI influences delivery and reliability metrics.

Axify evaluates several indicators so you can understand this relationship:

- AI trust (acceptance rate): Measures how often developers accept AI-generated code suggestions in the IDE or code assistant.

- Confidence level: Indicates how frequently developers rely on AI output without substantial modification before committing code.

- Frequency of AI interactions: Tracks how often engineers use AI tools during coding tasks.

To understand the real effect on quality, Axify also compares several metrics before and after AI adoption:

- Rework: Observes whether AI-assisted work leads to additional commits or revisions in the same PR.

- PR quality: Evaluates review outcomes such as requested revisions, rejected PRs, or follow-up fixes when AI-generated code is introduced.

- Change failure rate: Measures whether deployment reliability improves or declines.

- Production incidents: Tracks whether post-deployment incidents or rollbacks increase after AI adoption.

From our experience analyzing clients’ delivery data, the pattern becomes clear:

- If AI adoption rises while rework increases, quality may deteriorate because developers accept suggestions that introduce new defects.

- When AI usage increases and rework declines, AI is likely improving code reliability and helping teams manage complex development tasks more effectively.
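
The two patterns above can be written as a small decision rule. This is only a sketch of the reasoning, with hypothetical labels and inputs; a real analysis would also need to account for noise and for other changes happening in the same period.

```python
def interpret_ai_trend(adoption_before, adoption_after, rework_before, rework_after):
    """Classify the combined movement of AI adoption and rework rate.

    All four inputs are rates for two comparable periods (for example,
    acceptance rate and rework rate as fractions between 0 and 1).
    The returned labels are illustrative, not an Axify output format.
    """
    if adoption_after <= adoption_before:
        return "no-signal"      # AI usage did not rise, so nothing to attribute
    if rework_after > rework_before:
        return "quality-risk"   # more AI, more rework: suggestions may add defects
    if rework_after < rework_before:
        return "quality-gain"   # more AI, less rework: AI is likely helping
    return "neutral"            # more AI, rework unchanged


print(interpret_ai_trend(0.20, 0.45, 0.10, 0.16))
print(interpret_ai_trend(0.20, 0.45, 0.10, 0.07))
```

The first call models rising adoption with rising rework, and the second models rising adoption with falling rework.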

All of this takes us to the next point.

How to Balance Speed and Quality in Software Development

Balancing speed and quality means adjusting how work moves through the PR, CI, and release pipeline.

Once you understand where PR wait times, CI failures, rework, or production incidents appear, you can make workflow changes that accelerate delivery speed without increasing defects.

We advise you to try the methods below.

Tactic 1: Use Engineering Intelligence to Identify and Prioritize Improvements

The fastest way to balance speed and quality is to use automation and the right tooling to understand how your delivery system behaves.

Analyzing multiple signals by hand is time-consuming, whereas engineering intelligence tools like Axify help you see where to act and what to improve.

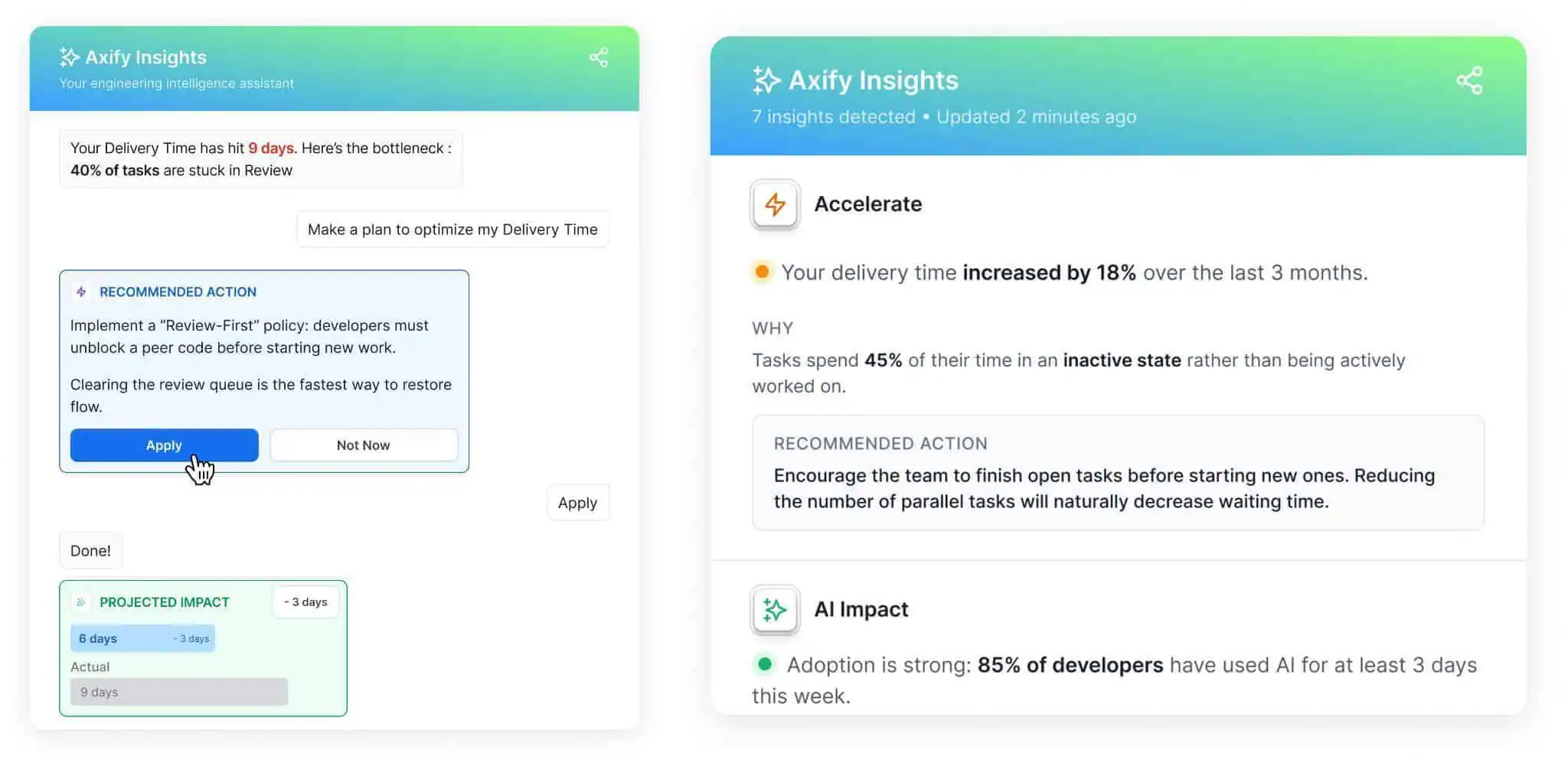

Axify Intelligence connects with your existing development tools, analyzes delivery performance continuously, and turns workflow data into decision support for engineering leaders.

Axify’s engineering intelligence helps by:

- Detecting anomalies: Identifying trend changes across commits, pull requests, and deployments so emerging issues become visible early.

- Surfacing bottlenecks: Analyzing each stage of the delivery workflow to pinpoint where work slows and the probable cause.

- Providing contextual insights: Connecting delivery signals with root causes, quality indicators, and AI usage patterns so changes are easier to interpret.

- Suggesting actions: Attaching practical recommendations to the detected issue so leaders can implement actions rather than simply analyze dashboards.

From our experience, visibility is important, but correct decision-making is essential. That’s the problem we’re solving for our clients at Axify.

If you prefer a more hands-on approach, the following tactics can help improve delivery speed and quality at the workflow level.

Tactic 2: Reduce Batch Size (MVP Thinking)

Once bottlenecks become visible, the next step is to reduce batch size. Batch size is the number of tasks, items, or units grouped for processing, development, or transmission.

Large batches reduce transparency, so more issues slip through and cost more to fix. You’ll also see more integration effort, more variability, and delayed value.

Smaller increments solve these problems.

Each batch of work becomes easier to review, faster to validate in CI pipelines, and safer to deploy. In practice, this means delivering minimum viable increments that focus on a single improvement or feature adjustment.

These smaller changes surface issues earlier and reduce the likelihood of large failures during deployment. In turn, this leads to cost optimization, faster time to market, and more value delivered to end users.
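
One lightweight way to keep batches small is to flag pull requests that exceed an agreed size. The sketch below assumes a hypothetical limit of 400 changed lines and a hand-written list of PRs; pick a threshold that fits your own codebase.

```python
MAX_CHANGED_LINES = 400  # hypothetical team threshold, not an industry standard

# Hypothetical pull requests with their line counts.
pull_requests = [
    {"id": 101, "additions": 120, "deletions": 30},
    {"id": 102, "additions": 900, "deletions": 450},
    {"id": 103, "additions": 60, "deletions": 5},
]


def changed_lines(pr):
    """Total lines touched by a pull request."""
    return pr["additions"] + pr["deletions"]


oversized = [pr["id"] for pr in pull_requests if changed_lines(pr) > MAX_CHANGED_LINES]

for pr_id in oversized:
    print(f"PR {pr_id} exceeds {MAX_CHANGED_LINES} changed lines; consider splitting it.")
```

A check like this works best as a nudge rather than a hard gate, since some changes, such as generated files or large renames, are legitimately big.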

Tactic 3: Automate to Protect Quality While Moving Fast

Faster delivery increases the amount of work moving through the delivery system. Manual validation cannot keep pace with this higher throughput, which increases the risk of production defects.

Automation closes this validation gap. CI/CD pipelines automatically validate new code after commits, while automated test suites verify expected system behavior and detect regressions before deployment. Parallel test execution can further shorten feedback cycles by validating multiple work items simultaneously.

These practices allow teams to move work through development, testing, and release stages more quickly while maintaining consistent quality standards.
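
To show why parallel execution shortens feedback, here is a toy sketch that runs three independent suites at the same time. The suites are stand-ins that simply sleep, and the names and durations are made up; in a real pipeline you would rely on your CI system's parallel jobs or your test runner's parallel mode instead.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical suites: (name, simulated duration in seconds).
SUITES = [("unit", 0.2), ("integration", 0.3), ("lint", 0.1)]


def run_suite(name, seconds):
    """Stand-in for a real test suite: waits, then reports success."""
    time.sleep(seconds)
    return name, True


start = time.perf_counter()
with ThreadPoolExecutor(max_workers=len(SUITES)) as pool:
    # Each suite runs in its own worker thread.
    results = dict(pool.map(lambda suite: run_suite(*suite), SUITES))
elapsed = time.perf_counter() - start

all_passed = all(results.values())
sequential_estimate = sum(seconds for _, seconds in SUITES)

print(f"Results: {results}")
print(f"Parallel wall time: {elapsed:.2f} s (sequential would be about {sequential_estimate:.2f} s)")
```

Run in parallel, the wall time is close to the slowest suite rather than the sum of all three, which is the effect you want in your feedback loop.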

Tactic 4: Manage Technical Debt Intentionally

Even with strong testing practices, systems accumulate complexity over time. Refactoring postponed for too long eventually slows development because engineers must work around harder-to-change code paths or unclear dependencies.

Managing technical debt prevents that slowdown. Tracking rework patterns usually reveals where design limitations cause repeated fixes or extra validation effort. Allocating regular time for refactoring keeps systems easier to modify and prevents delivery friction in future releases.
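
Tracking rework patterns can start very simply, for example by counting how many commits to each module are fixes. The commit log, the module names, and the 50% threshold below are all hypothetical; in practice the fix/feature label might come from commit message conventions or issue types.

```python
from collections import Counter

# Hypothetical commit log: (module, commit type).
commits = [
    ("billing", "fix"), ("billing", "fix"), ("billing", "feature"), ("billing", "fix"),
    ("search", "feature"), ("search", "feature"), ("search", "fix"),
    ("auth", "fix"), ("auth", "feature"),
]
HOTSPOT_THRESHOLD = 0.5  # assumed cutoff: half or more of commits are fixes

fix_counts = Counter(module for module, kind in commits if kind == "fix")
total_counts = Counter(module for module, _ in commits)

# Share of commits per module that were fixes.
rework_ratio = {module: fix_counts[module] / total_counts[module] for module in total_counts}
hotspots = sorted(m for m, ratio in rework_ratio.items() if ratio >= HOTSPOT_THRESHOLD)

for module in hotspots:
    print(f"{module}: {rework_ratio[module]:.0%} of commits are fixes")
```

Modules that keep showing up in this list are reasonable candidates for the refactoring time you set aside.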

Tactic 5: Optimize Code Reviews Without Creating Bottlenecks

Code reviews protect implementation quality, but poorly structured review practices can slow delivery. When reviewers receive large batches of work or frequently switch context, review queues grow and feedback arrives late.

A more effective approach focuses reviews on logic, risk, and the scope of each work item. Limiting the size of work batches and time-boxing review sessions reduces cognitive load for reviewers.

As a result, feedback arrives earlier, and developers can address issues before additional work items accumulate in the delivery system.

Tactic 6: Protect Team Sustainability

Delivery performance depends heavily on the people responsible for maintaining it. When workloads become unrealistic, or incident response dominates daily work, delivery quality drops as teams spend less time on careful review and validation.

Maintaining sustainable workloads prevents repeated delivery issues. Regular retrospectives help identify recurring sources of friction, while realistic planning reduces the pressure that usually leads to rushed changes or incomplete testing.

Tactic 7: Prioritize User and Stakeholder Feedback

Delivery success is not measured by technical execution alone. Ultimately, released features must address real user needs.

Continuous feedback from users and stakeholders helps teams adjust priorities quickly. Early input reveals whether a feature actually solves the intended problem or requires further refinement.

This feedback loop allows teams to validate direction earlier and avoid investing more effort in work that misses the requirement.

Align Speed and Reliability Through Clear Delivery Decisions

Speed and reliability improve together when teams manage how work flows through the delivery system. Smaller work batches shorten review cycles, automated validation protects reliability before code reaches production, and delivery metrics reveal constraints that slow reviews, testing, or deployment.

These signals tend to point to the same root causes: oversized work batches, delayed feedback loops, or overloaded review stages. When those patterns become visible, engineering leaders can make targeted workflow adjustments that improve both delivery speed and stability.

Axify supports those decisions by analyzing delivery data, identifying workflow constraints, and recommending improvements based on real delivery patterns.

If you want to analyze your delivery data and apply improvements directly from your engineering intelligence platform, schedule a demo with Axify today.

FAQ

Is code quality dropping across the industry, and if so, why?

Not necessarily. Many teams ship faster today due to automation, CI/CD pipelines, and AI-assisted development. However, higher delivery speed can expose weaknesses in testing practices, code readability, or review standards. When teams adopt new tools without updating validation practices, defect rates can increase.

Is speed of development more important for startups or organizations than quality?

Both matter. Startups tend to prioritize speed to validate product ideas quickly, but poor quality can slow future development and increase operational risk. Sustainable delivery requires balancing speed and quality, typically through small work batches, automated tests, and disciplined review practices.

How do you measure and control software quality in modern software development?

Software quality is usually measured through indicators such as defect density, production incident frequency, and change failure rate. Teams maintain quality through practices like unit testing, pair programming, automated CI pipelines, test-driven development, and consistent code reviews that enforce code readability and maintainability standards.

Is speed the only way to measure a developer’s value?

No. Speed alone does not capture the full impact of a software developer. Effective developers also contribute to maintainable code, system reliability, and knowledge sharing within the team. Metrics such as review participation, code clarity, and architectural improvements tend to have a larger long-term impact than raw output.

Is it better to have programmers who work quickly with lower quality code or those who work slower but produce higher quality code?

Neither extreme works well in modern software engineering. High-performing teams aim for fast feedback cycles while maintaining strong quality controls. Practices like trunk-based development, automated testing, and small pull requests allow teams to move quickly while preserving code quality.