If you’re searching for GetDX alternatives, you’ve likely reached a point where your current setup isn’t fully aligned with how your teams operate or how you want to improve delivery. That doesn’t mean GetDX falls short, but your needs may have evolved toward deeper workflow visibility, different metrics coverage, or more actionable guidance.

In this roundup, we compare the top GetDX competitors and highlight what each platform offers so you can choose the solution that best supports your engineering goals.

So, let's get started!

Pro tip: If dashboards answer what happened but not what to change, you need decision support. Axify Intelligence analyzes your delivery history, surfaces bottlenecks, and recommends concrete actions. You can also interact with an Engineering Intelligence Assistant to explore root causes and next steps. Book a demo today.

|

Tool |

Best for |

Focus area |

Metrics & flow coverage |

AI impact performance |

Insights support |

Integrations |

Typical pricing tier |

|

Axify |

Delivery decisions |

Flow + impact |

End-to-end SDLC |

Before/after AI |

Root-cause + actions |

Planning/CI/incidents |

$19 / user |

|

Faros AI |

Enterprise planning |

Org visibility |

Initiative + sprint |

Adoption tracking |

Planning signals |

Complex stacks |

Custom |

|

Hivel |

Scaling teams |

DORA + allocation |

Git + Jira flow |

AI recommendations |

Guided actions |

Git + PM tools |

$25 / user |

|

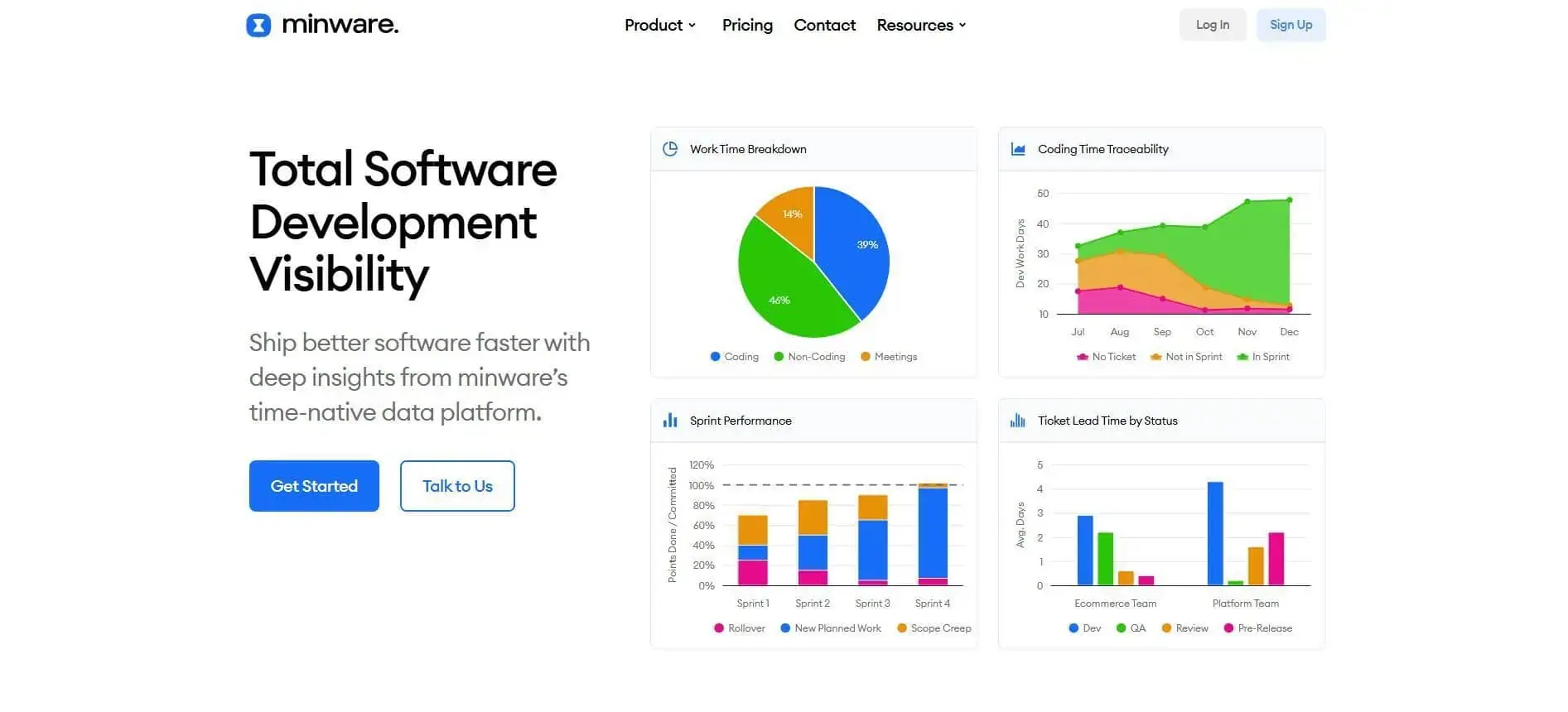

Minware |

Cost attribution |

Time tracking |

Hours + effort |

None/limited |

Effort reports |

Git + tickets + calendar |

$25 / user |

|

Jellyfish |

Eng + finance |

DevFinOps |

Delivery + cost |

AI + finance tie |

Exec reports |

Broad enterprise |

Custom |

|

LinearB |

Automation ops |

Workflow triggers |

PR + release flow |

AI reviews |

Auto fixes |

SCM + CI |

$29 / user |

|

Code Climate |

Benchmarks |

Team health |

SCM + tickets |

None/limited |

Structured reports |

SCM + on-prem |

$449 / seat/yr |

|

Waydev |

AI analytics |

Planning + goals |

Git + PM flow |

Usage tracking |

NL queries |

Git + PM |

$29 / user |

|

Allstacks |

Forecasting |

Predictability + ROI |

Delivery + finance |

Risk analysis |

Forecast + alerts |

Unlimited |

$400 / user/yr |

|

Port |

Dev portal |

Self-service ops |

Catalog only |

AI agents |

Workflow actions |

Toolchain wide |

$30 / seat |

How We Picked These GetDX Alternatives

When evaluating GetDX alternatives, we started from real operating constraints: limited review time, active delivery pressure, and budget scrutiny.

Each tool was assessed on how quickly it could answer concrete delivery questions, such as:

- Where is work stalled right now?

- Which initiatives are consuming the most capacity?

- Which changes altered lead time or failure rate?

We filtered out platforms that required heavy configuration, manual data reconciliation, or process redesign before producing usable signals.

These were the evaluation criteria:

- Delivery visibility: Clear, stage-level views of where work waits across planning, code, review, testing, and release. Leaders consistently cite context gaps as a source of delay. For example, 26% of engineering leaders report time spent gathering project context as a major productivity drain. Tools were favored when they surfaced this context directly from workflow data.

- Workflow-level context: The ability to trace delays to specific queues, handoffs, or ownership gaps, rather than presenting aggregated summaries.

- Decision-support signals: Outputs that translate trends into next actions, instead of requiring separate analysis and slide preparation.

- Prioritization clarity: Visibility into how capacity is allocated across initiatives, KTLO, and improvement work. This supports sequencing, staffing, and cost trade-offs.

- Low analysis overhead: Fast setup and stable defaults that produce signals without ongoing manual report building.

- Executive reporting: Structured summaries that remain consistent across engineering, product, and finance reviews.
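
To make the delivery visibility criterion concrete, here is a minimal sketch of how stage-level waiting time could be derived from workflow data. It is an illustration only, not how any tool in this roundup computes its metrics. The event format, the stage names, and the `hours_per_stage` helper are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import datetime
from statistics import median

# Hypothetical stage-transition events: (work item, stage entered, timestamp).
EVENTS = [
    ("A-1", "code", "2024-03-01T09:00"),
    ("A-1", "review", "2024-03-02T09:00"),
    ("A-1", "release", "2024-03-05T09:00"),
    ("A-2", "code", "2024-03-01T09:00"),
    ("A-2", "review", "2024-03-01T21:00"),
    ("A-2", "release", "2024-03-02T21:00"),
]


def hours_per_stage(events):
    """Return the median hours items spend in each stage before moving on."""
    by_item = defaultdict(list)
    for item, stage, ts in events:
        by_item[item].append((datetime.fromisoformat(ts), stage))

    durations = defaultdict(list)
    for transitions in by_item.values():
        transitions.sort()
        # Pair each transition with the next one; the final stage has no end time.
        for (start, stage), (end, _) in zip(transitions, transitions[1:]):
            durations[stage].append((end - start).total_seconds() / 3600)
    return {stage: median(hours) for stage, hours in durations.items()}


stage_hours = hours_per_stage(EVENTS)
slowest = max(stage_hours, key=stage_hours.get)
print(stage_hours)  # {'code': 18.0, 'review': 48.0}
print(slowest)      # review
```

With this sample data, review is the stage where work waits longest. That is the kind of answer the "Where is work stalled right now?" question calls for.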

Why People Look for GetDX Alternatives

Teams evaluate alternatives when the reporting structure becomes more complex than their current decision needs.

As delivery pressure increases, leaders need faster answers tied directly to lead time trends, initiative impact, and cost trade-offs. In those situations, tools that require significant configuration, interpretation, or survey coordination can slow decision cycles.

Common reasons teams explore alternatives (as noted in public reviews) include:

- Onboarding and integration require configuration across multiple tools and teams

- Full value depends on consistent survey participation and disciplined metric governance

- Infrastructure- or operations-heavy teams may find less relevance in experience-driven reporting

- Survey insights lose clarity when workflow data coverage is incomplete

- Reports require additional interpretation before clear priorities emerge

- Built-in recommendations focus more on analysis than on concrete next-step guidance

These constraints may prompt teams like yours to compare platforms that prioritize workflow visibility and direct decision support.

And that leads us to the 10 alternatives.

Top 10 GetDX Alternatives to Consider

The top GetDX alternatives to consider are Axify, Faros AI, Hivel, and Minware, followed by platforms that approach delivery questions from different angles. The choice ultimately comes down to how directly each platform supports planning, prioritization, and resource allocation decisions that impact productivity.

Here are the options, grouped by how well they meet those needs.

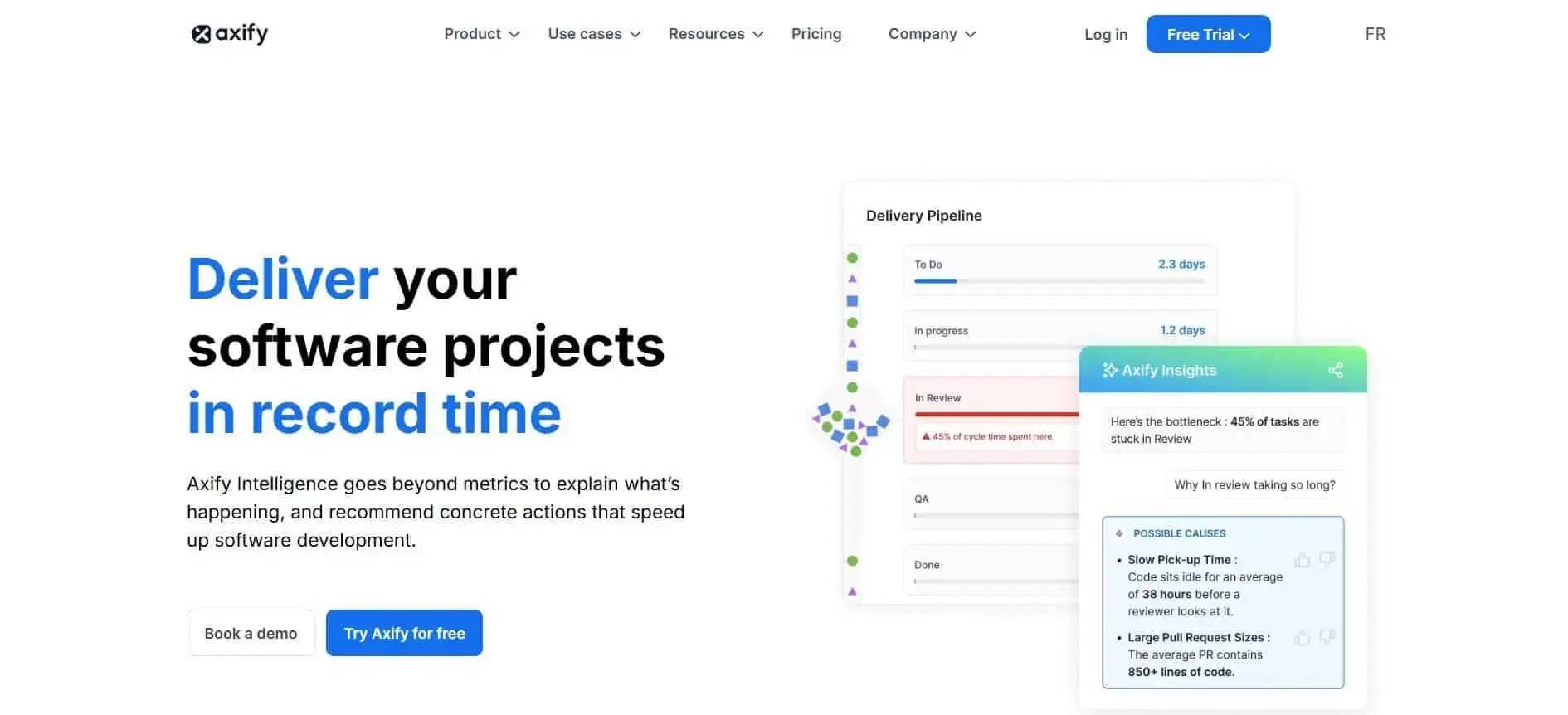

1. Axify

Axify is a software engineering intelligence platform that focuses on delivery flow, bottlenecks, initiative impact, and AI-assisted decision support. The platform connects delivery events across planning, code, CI, and incidents, then explains why metrics move and what to change next.

As a result, delivery signals stay tied to operational questions, such as which workflow stage is creating delays, which initiative is consuming capacity, and where AI adoption is changing throughput.

Pro tip: Unlike GetDX’s AI transformation layer, which blends survey inputs and framework-based correlations, Axify measures AI impact through structured before/after delivery comparisons tied directly to cycle time, review load, and rework rates.

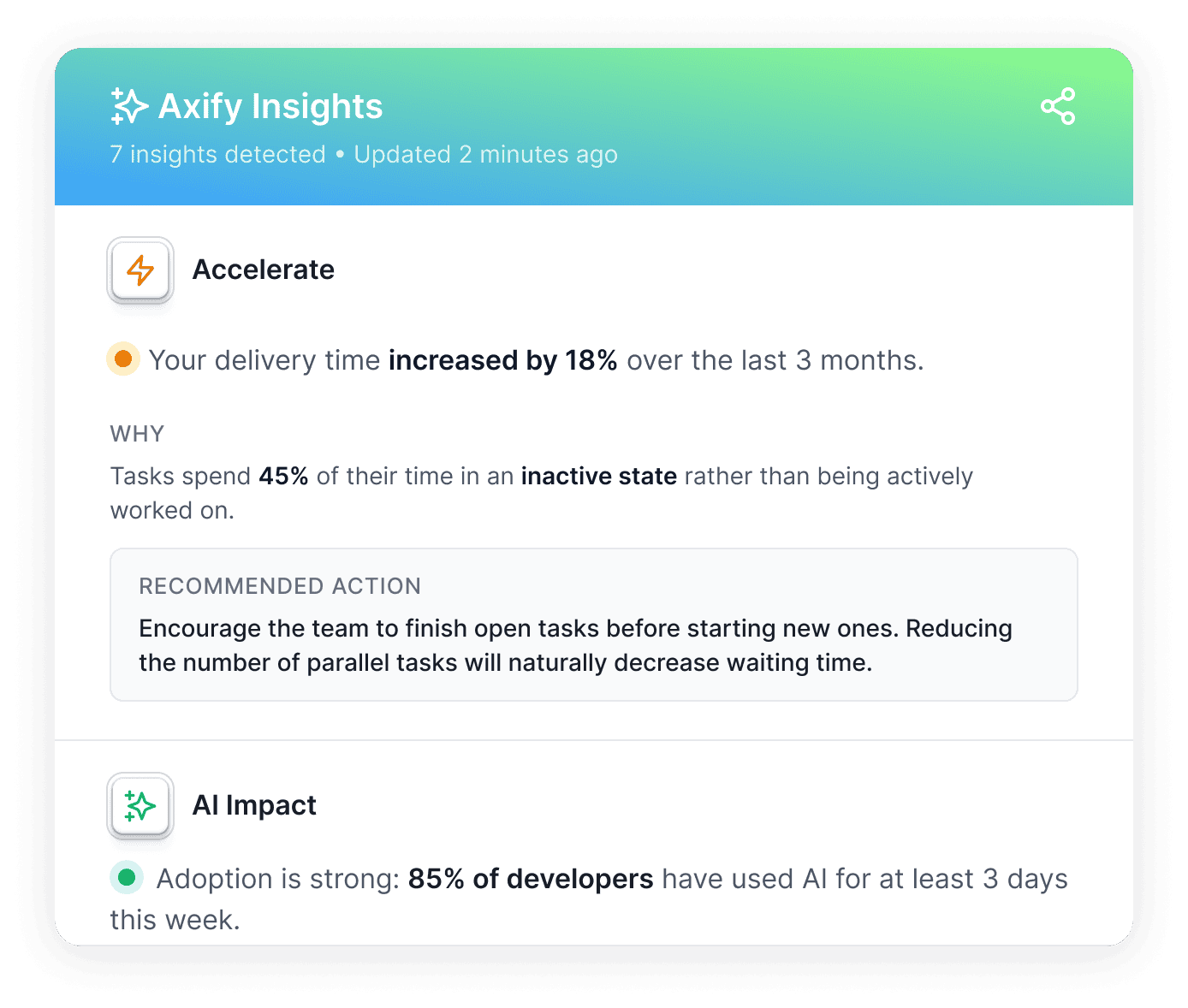

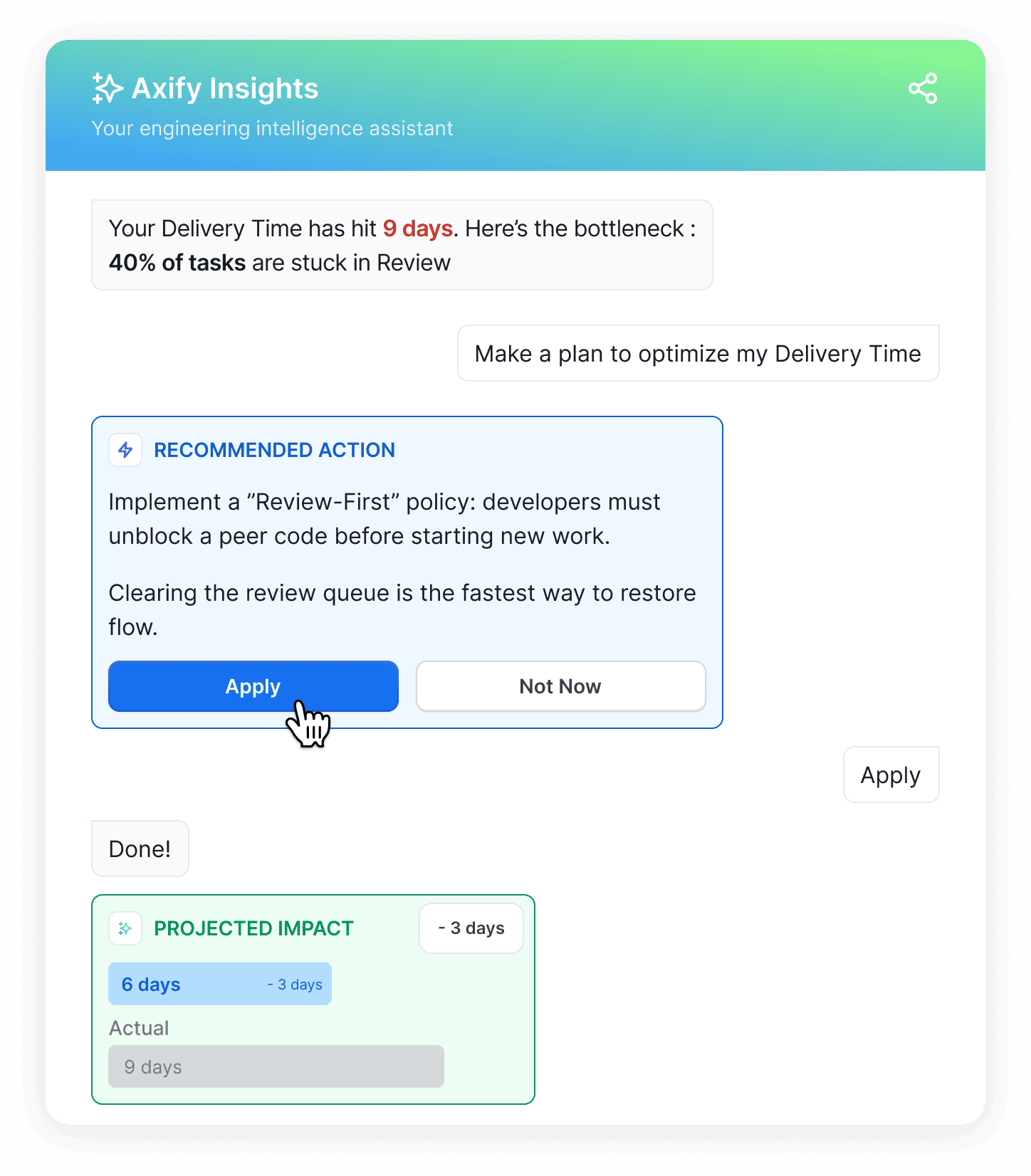

Also, Axify Intelligence acts as an AI decision partner embedded directly in your delivery data. It continuously analyzes trends across engineering metrics to detect meaningful shifts.

When anomalies appear, it surfaces the most likely bottleneck in the delivery system, maps how changes in one stage propagated across the workflow, and proposes specific actions (e.g., adjust review ownership, rebalance WIP, refine rollout strategy).

Leaders can then interrogate the data through a conversational interface to validate assumptions, test scenarios, and move from signal to decision.

And we have the results to prove our platform works.

At BDC*, Axify supported a three-month effort focused on delivery predictability and flow by surfacing where work was stalling and why. After those bottlenecks were addressed, delivery time improved by up to 51%.

* Note: This engagement took place before the release of Axify’s AI Impact and Axify Intelligence capabilities, which now extend that same delivery visibility with structured AI impact measurement and automated decision support.

Strengths:

- End-to-end delivery and value stream mapping let you see how work moves from planning through code, CI, and incidents.

- Initiative-based impact analysis lets you tie delivery changes to specific programs, priorities, or improvement efforts.

- AI explanations for metric movement show why numbers change, not just that they changed.

- Natural language querying lets you ask delivery questions directly without building reports or filters.

- Continuous insight generation keeps signals updated as work flows, not only during reviews.

- Root cause detection traces delays and regressions back to specific stages, teams, or handoffs.

- Recommended actions translate metrics into concrete next steps for delivery decisions.

- Opinionated defaults reduce setup work and keep focus on execution instead of configuration.

Limitations:

- Less DevEx sentiment surveying compared to survey-first tools.

- Less academic benchmarking and research framing.

Best for: Leaders who want to make delivery decisions that improve delivery time and compare AI impact before and after rollout.

Pricing: There's a free plan available, and the paid plans start from $19 per contributor/month.

Website: Axify.io

2. Faros AI

Faros AI is an enterprise-grade engineering intelligence platform that turns SDLC data into planning and execution signals leadership can act on. The platform pulls structured data from planning, code, CI, and incidents, then organizes it around outcomes like sprint predictability, initiative progress, and AI impact.

As a result, delivery conversations move from reacting to misses to planning trade-offs with evidence. In one enterprise example shared by Faros, leadership used sprint-level delivery metrics to shift from reactive firefighting to proactive capacity planning tied to roadmap goals.

Strengths:

- Org-level visibility across initiatives, teams, and programs.

- AI adoption and impact measurement across coding assistants.

- Ingestion options for complex enterprise toolchains.

Limitations:

- Moderate learning curve for new teams.

- Dashboard load times can occasionally be slow.

- Earlier versions have limited self-service functionality.

Best for: Large enterprises that require cross-team planning aligned directly with measurable delivery performance and capacity impact.

Pricing: Custom quote.

Website: Faros.ai

3. Hivel

Hivel acts as a centralized command layer for engineering data. It does this by turning Git and work-tracking signals into delivery guidance that leadership can use.

The platform combines DORA metrics, allocation views, and AI-generated insights to surface bottlenecks, workflow drift, and planning risks. That means patterns in code review delays or rework show up with context rather than raw counts.

For example, Klenty reports improvements in cycle time, review time, deployment frequency, and time to recovery after using Hivel’s Git and Jira insights to guide process adjustments. The focus stays on identifying where work slows and how allocation shifts affect roadmap execution.

Strengths:

- Consolidated dashboards built for leadership visibility.

- AI insights with recommended actions for flow issues.

- Investment profile tracking across roadmap, KTLO, and code quality efforts.

Limitations:

- Flexible rule configuration requires upfront setup to get meaningful metrics.

- Initial experience can feel overwhelming.

- Limited guided onboarding and contextual prompts.

Best for: Scaling teams that want AI-assisted analytics tied to DORA-style signals and allocation views.

Pricing: There's a free plan available, and the paid plans start at $25 per contributor/month.

Website: Hivel.ai

4. Minware

Minware centers on a time-native model that estimates how much work time is actually spent across Git, tickets, and calendars, rather than relying on raw activity counts. The platform links commits to tickets and calendar signals to approximate effort distribution, which supports planning accuracy and cost allocation without manual time tracking.

That means work tied to the roadmap, maintenance, or internal initiatives becomes visible in hours. For teams that need defensible reporting, this approach ties engineering activity to project-level accountability and provides time-based inputs that can support R&D cost capitalization workflows handled outside the platform.

Strengths:

- Time-based attribution across Git, tickets, and calendars.

- Report library spanning workflow, quality, and cost views.

- Integrations with Jira, GitHub, GitLab, Bitbucket, Azure DevOps, and Google Calendar.

Limitations:

- Self-hosted and on-premise ingestion is positioned in higher tiers.

- AWS integrations are not yet available.

- Custom integrations are framed as Enterprise capabilities.

Best for: Leaders who need time-based delivery and cost allocation signals without manual tracking.

Pricing: A free plan is available, and paid plans start at $25 per contributor/month.

Website: Minware.com

5. Jellyfish

Jellyfish is a multi-product engineering intelligence platform that connects delivery data, planning signals, DevEx inputs, and financial reporting into one system. The focus is on aligning engineering execution with business outcomes, including AI impact and capitalization workflows.

For example, Iterable reported a 98% reduction in time spent on software capitalization after implementing the Jellyfish engineering management platform. That outcome came from automating the collection of engineering signals and linking them with cost data, which reduced manual reporting cycles.

Strengths:

- Delivery health, DevEx, AI impact, and DevFinOps in one platform.

- Engineering-finance reporting by combining activity and cost inputs.

- Benchmarking, lifecycle visibility, and executive-ready reporting views.

Limitations:

- Limited dashboard customization and occasional slower data syncs.

- High data volume can feel overwhelming.

- Jellyfish shows AI adoption and impact at a team or aggregate level, but it does not clearly surface multi-year trends for individual contributors or cohort-based comparisons (e.g., how specific AI power users performed over time vs. non-users).

Best for: Leadership teams aligning engineering delivery, financial reporting, and AI impact under one vendor.

Pricing: Custom quote.

Website: Jellyfish.co

6. LinearB

LinearB is an AI productivity platform that links engineering metrics to operational change through automation. Instead of stopping at reporting, it connects delivery data to workflow triggers that reduce friction in reviews and releases.

The platform supports DORA-style measurement while embedding automation into daily workflows. So delivery improvements move from dashboards into enforced practices, backed by reporting leaders can use in executive reviews.

For example, Yum! Brands automated more than 35% of pull requests and saved hundreds of developer hours per month after implementing LinearB. That result came from closing the loop between metrics, automation rules, and measurable outcomes across teams.

Strengths:

- Connects metrics to workflow automation that drives operational change.

- AI code reviews to improve merge quality at scale.

- Structured benchmarking and project delivery tracking with a built-in velocity report.

Limitations:

- Occasional minor visual glitches in the interface.

- Limited forecasting views for delay and impact scenarios.

- Detailed reports can complicate executive summaries.

Best for: Leaders who want measurable delivery gains driven by automation.

Pricing: Paid plans start at $29 per contributor/month.

Website: LinearB.io

7. Code Climate Velocity

Code Climate Velocity is a software engineering intelligence platform focused on capacity, delivery, quality, culture, cost, and goal alignment in one system. It ingests data across SCM, tickets, and other systems, then structures it into role-based views with defined metric logic.

The platform also documents industry benchmarks based on aggregated data from several hundred organizations over a 12-month period. Detailed metric definitions and glossary documentation reduce ambiguity in reporting, especially around review influence and coverage.

TripleLift used Code Climate Velocity to track pull request throughput and remove delivery roadblocks. After standardizing performance tracking, the team reported increases in PR throughput, commit volume, and push count.

Strengths:

- Delivery metrics and team health signals in a single reporting model.

- Documented benchmarks from its service catalog.

- Optional on-prem connectivity through a deployable agent.

Limitations:

- API documentation lacks depth and clear descriptions.

- Cross-team metric comparisons might be misleading due to different workflows.

- Some metrics might lack transparent calculation details.

Best for: Engineering leaders who want benchmark-backed delivery measurement with structured reporting.

Pricing: A free tier is available for small teams, paid plans start at $449 per seat/year, and enterprise tiers add features and scale pricing based on team size.

Website: Codeclimate.com

8. Waydev

Waydev turns engineering data into actionable delivery insight without manual reporting. It connects Git and project management systems to surface delivery speed, planning alignment, and cost visibility in one place.

As a result, initiative scope, bug load, and roadmap execution become transparent through shared metrics directly tied to business goals and outcomes. Also, Waydev AI adds conversational querying, so leaders can ask direct questions about delivery trends, AI usage, or planning drift without building custom reports.

In a three-month comparison, Sovos reported measurable throughput and activity increases after using Waydev to monitor and adjust delivery patterns.

Strengths:

- Natural-language analytics for executive-level insight.

- Initiative and planning alignment with financial visibility.

- Supports delivery metrics aligned with the SPACE framework.

Limitations:

- AI summaries might load slowly and lack depth.

- DevEx surveys may feel limited.

- Historical data access for older project comparisons is limited.

Best for: Teams that want AI-native delivery analytics with initiative alignment and financial visibility.

Pricing: Starts at $29 per active contributor/month.

Website: Waydev.co

9. Allstacks

Allstacks turns delivery activity into forecasted outcomes, investment visibility, and decision-ready narratives for engineering and finance leadership. It connects planning, Git, ticketing, and financial signals to quantify delivery predictability and engineering investment in time and dollars.

The intelligence engine and AI-powered deep research analyze initiative risk, delivery variance, and survey feedback to surface patterns that affect roadmap commitments and reporting accuracy.

For example, Enverus reduced manual effort in preparing software capitalization reports after automating data collection and audit workflows through Allstacks.

Strengths:

- Combines delivery forecasting with financial reporting and DevEx surveys.

- AI-driven analysis that ties initiative risks directly to business outcomes.

- Unlimited integrations across tiers.

Limitations:

- Initial setup can feel overwhelming due to many integrations and metrics.

- Data updates may run daily rather than in real time.

- Limited report and alert customization.

Best for: Organizations that need delivery predictability, DevEx insight, and investment visibility in one platform.

Pricing: Starts at $400 per contributor/year.

Website: Allstacks.com

10. Port

Port is an internal developer portal that centralizes engineering context and transforms it into self-service workflows and governed automation. It is built around a software catalog, scorecards, access controls, and AI agents that execute actions across your toolchain.

Instead of measuring delivery metrics directly, it structures ownership, environments, and service metadata. This way, leaders can reduce ticket-driven friction and improve team collaboration through clear service boundaries.

In a Checkmarx example, developers gained autonomy to manage environments through the portal, which reduced DevOps workload and operational costs.

Strengths:

- Catalog-driven model with workflows, actions, and scorecards in one platform.

- Free proof-of-concept tier with no time limit.

- Enterprise governance features such as SCIM, IP allowlisting, and Private Link.

Limitations:

- Not ideal for complex, state-heavy operational use cases.

- Initial learning curve around concepts and terminology.

- Some feature inconsistencies and documentation gaps require clarification.

Best for: Platform teams building portal-driven self-service capabilities and structured DevEx frameworks.

Pricing: A free plan covers up to 15 seats, and paid plans start at $30 per seat/month.

Website: Port.io

What Is GetDX?

GetDX is an engineering effectiveness platform that combines developer experience surveys with workflow and delivery data to support leadership reporting.

It collects self-reported DevEx inputs alongside system data from tools such as issue trackers, repositories, and CI/CD platforms. This allows leadership to review perception trends together with delivery metrics, including DORA indicators, when assessing throughput, quality, and recovery.

Survey programs are a central component of the platform. Because part of the dataset is self-reported, improvement priorities are informed by how teams describe friction, alignment, and tooling gaps.

GetDX also provides benchmarking based on aggregated data from millions of data points across hundreds of organizations. This allows leaders to compare internal results against external reference groups.

This leads us to what the platform actually does.

What Is GetDX Used For: Core Capabilities

GetDX is used to measure and benchmark developer experience, perceived productivity, and AI adoption by combining survey data with workflow signals from engineering systems.

The platform supports leaders who need standardized reporting across teams and a structured way to compare results over time. Its capabilities typically fall into three areas.

Developer Experience

This area focuses on how engineers report friction in their daily work and how that perception aligns with observable workflow data.

Core components include:

- DXI (Developer Experience Index): This index rolls up 14 experience drivers into a single score that can be segmented by team, role, and seniority. To ground that score in evidence, DXI is built from research-backed inputs and validated at scale.

- DevSat & experience sampling: Short surveys and in-workflow prompts collect structured sentiment around tools, work environment, and collaboration. These responses can be reviewed alongside delivery metrics to identify potential correlation between reported friction and workflow delays.

- Workflow analysis: Time loss across the SDLC is quantified in hours and tied to roles and teams. That breakdown helps you see which bottlenecks strongly affect cycle time and planning reliability.

- Executive reporting: Board-ready reports and AI summaries support leadership reviews and recurring reporting cycles.

- Industry benchmarking: Aggregated benchmark data allows organizations to compare internal scores against external reference groups by industry or region.

Engineering Productivity

This area measures how work progresses from backlog to production and how output is evaluated beyond raw activity counts. The emphasis is on delivery speed, stability, and allocation of engineering time.

Main components include:

- SDLC analytics: Data from issue trackers, repositories, and CI/CD systems is consolidated into a centralized dataset that supports lifecycle reporting. Leaders can review metrics across planning, coding, review, testing, and deployment stages using standardized reports.

- DX Core 4 framework: Metrics are grouped into four categories: speed, effectiveness, quality, and business impact. This structure reduces reliance on single indicators such as deployment frequency or PR volume by presenting multiple performance dimensions side by side.

- TrueThroughput™: Output is measured at the pull request level, with adjustments for scope and complexity. The goal is to distinguish small changes from large feature work so that delivery volume is not treated as equivalent value. The platform reports higher productivity for teams adopting this model.

- Sprint analytics: Team-level views track review time, rework patterns, and workflow distribution to support sprint and quarterly planning discussions.

- Custom reporting: SQL access and configurable views allow advanced users to build additional analyses beyond standard dashboards.

- Engineering allocation: Work is categorized across features, maintenance (KTLO), and technical debt. This breakdown supports resource planning, budgeting, and capitalization analysis.
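
As a rough illustration of the engineering allocation idea above, the sketch below turns categorized work items into percentage shares. It is not GetDX's implementation. The category labels, the use of story points as the effort unit, and the `allocation_shares` helper are assumptions chosen for the example.

```python
from collections import Counter

# Hypothetical completed work items: (category label, effort in story points).
WORK_ITEMS = [
    ("feature", 8),
    ("feature", 5),
    ("ktlo", 3),
    ("tech_debt", 2),
    ("ktlo", 2),
]


def allocation_shares(items):
    """Return each category's share of total effort as a percentage."""
    totals = Counter()
    for category, effort in items:
        totals[category] += effort
    grand_total = sum(totals.values())
    return {c: round(100 * e / grand_total, 1) for c, e in totals.items()}


shares = allocation_shares(WORK_ITEMS)
print(shares)  # {'feature': 65.0, 'ktlo': 25.0, 'tech_debt': 10.0}
```

The same breakdown could use hours or ticket counts instead of story points. The result changes with the effort unit, so it helps to state which one a report uses.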

AI Transformation

This capability evaluates how AI usage affects measurable delivery outcomes such as cycle time, revert rates, and allocation of engineering time. It links AI adoption data to workflow metrics so leaders can compare performance before and after rollout.

Core components include:

- AI strategic planning: Usage data is mapped to the DX Core 4 categories (speed, effectiveness, quality, business impact) to structure evaluation and prioritization.

- Vendor evaluation: Cohort and before/after comparisons assess how different AI tools influence throughput, rework, and workflow stability.

- AI usage analytics: Adoption is measured by sustained usage patterns within repositories and workflows, rather than by license counts alone.

- AI code metrics: Commit- and line-level data identify where AI-assisted changes occur and whether those changes correlate with higher review time, rework, or revert rates.

- AI impact analysis: Before/after comparisons evaluate whether AI-assisted work differs in cycle time, defect signals, or feature output relative to non-AI work.

- AI workflow optimization: Workflow data and feedback signals highlight where AI reduces manual effort and where it introduces additional review or coordination steps.
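
The before/after comparisons described above can be sketched in a few lines. This is a simplified illustration, not the method used by GetDX or any other vendor. The rollout date, the pull request data, and the `before_after` helper are hypothetical, and a real analysis would also need to control for team, scope, and seasonality before attributing any change to AI.

```python
from datetime import date
from statistics import median

ROLLOUT = date(2024, 4, 1)  # hypothetical AI-assistant rollout date

# Hypothetical merged pull requests: (merge date, cycle time in hours).
PULL_REQUESTS = [
    (date(2024, 3, 4), 40.0),
    (date(2024, 3, 12), 52.0),
    (date(2024, 3, 25), 46.0),
    (date(2024, 4, 8), 30.0),
    (date(2024, 4, 15), 38.0),
    (date(2024, 4, 22), 34.0),
]


def before_after(prs, rollout):
    """Compare median cycle time before and after the rollout date."""
    before = [hours for merged, hours in prs if merged < rollout]
    after = [hours for merged, hours in prs if merged >= rollout]
    m_before, m_after = median(before), median(after)
    change_pct = round(100 * (m_after - m_before) / m_before, 1)
    return m_before, m_after, change_pct


result = before_after(PULL_REQUESTS, ROLLOUT)
print(result)  # (46.0, 34.0, -26.1)
```

The median keeps a single unusually slow pull request from dominating the comparison, which is why this sketch uses it instead of the mean.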

This takes us to the advantages of using this platform.

GetDX Benefits: Why Do Engineering Leaders Pick It?

Engineering leaders choose GetDX when they want a single platform that combines developer experience surveys, workflow analytics, benchmarking, and AI adoption tracking.

This typically becomes relevant when leadership needs structured reporting across teams, especially during quarterly reviews or transformation initiatives.

You might consider GetDX if you:

- Want a combined view of developer experience signals and delivery metrics

- Need survey-based sentiment data alongside system-generated workflow data

- Prefer a predefined framework (such as DX Core 4) to guide discussions

- Value industry benchmarking across companies and regions

- Require board-ready reporting packages

Final Verdict: Which GetDX Alternative Is Best?

The best GetDX alternative depends on the decision you need to support.

- If your mandate is structured DevEx measurement, survey programs, and benchmarking across organizations, GetDX aligns with that objective.

- If your priority is improving delivery flow, tying initiatives to measurable impact, and receiving AI-driven decision support grounded in workflow data, Axify is the stronger fit.

- Other platforms address narrower needs such as cost tracking, DevFinOps reporting, or internal portal management.

The choice depends on scope and operating model.

If you want faster, data-backed delivery decisions with clear next steps, book a demo with Axify and see how it applies to your teams.