AI tools are now embedded in most engineering workflows. Teams use them for code generation, pull request reviews, incident analysis, and deployment monitoring. The question for engineering leaders is no longer whether AI exists, but whether it is improving delivery outcomes.

Increased activity does not automatically translate into higher productivity. Faster code generation can introduce rework. Automated reviews can shift bottlenecks downstream. Without clear measurement, it is difficult to determine whether AI is improving flow, quality, or predictability.

This guide provides a practical framework for using AI in developer workflows. It outlines where AI creates measurable value, how to evaluate its impact using delivery metrics, and how to adopt it without disrupting system stability.

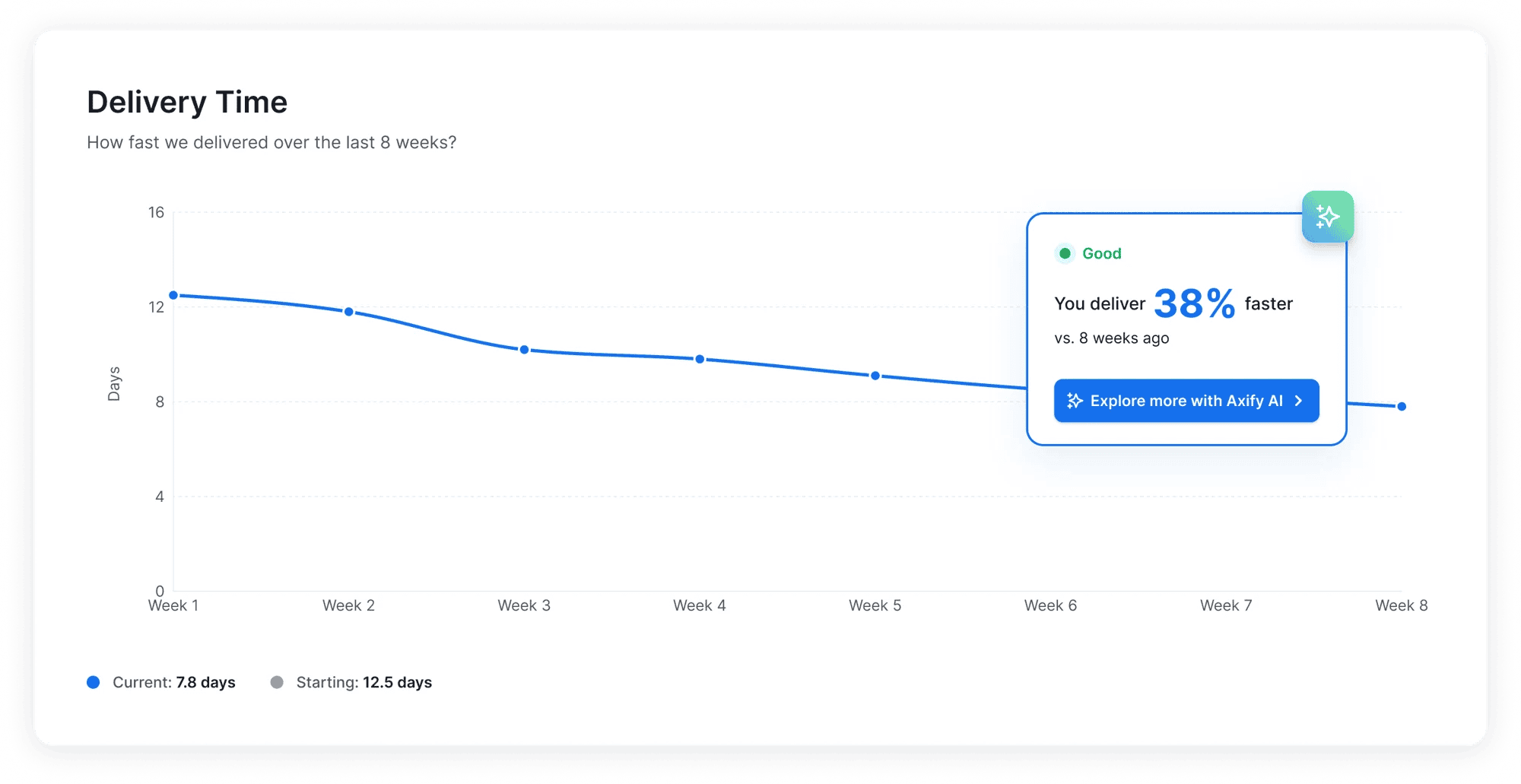

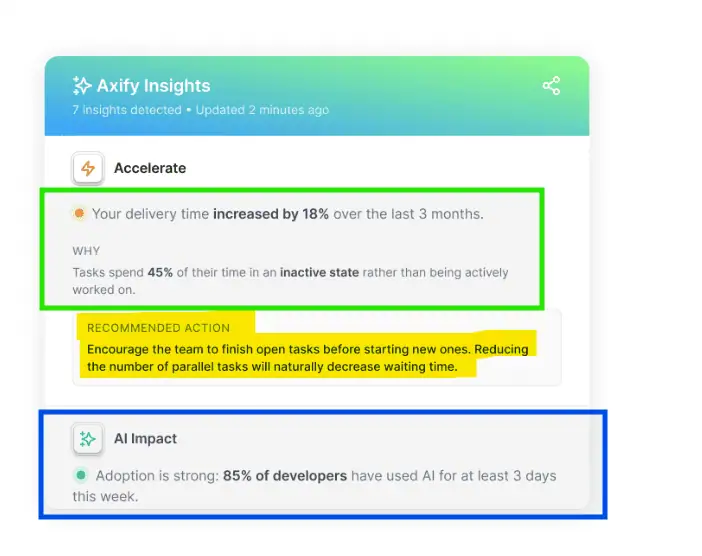

Pro tip: Set a baseline before introducing AI and compare performance after rollout. Axify’s comparison view lets you measure team productivity before and after AI adoption using consistent delivery metrics. Axify Intelligence also highlights bottlenecks, explains root causes based on your historical data, and recommends actions you can apply directly from the platform.

Contact Axify to see how the AI Decision Layer supports your next performance decision.

Should You Use AI for Developer Productivity?

In our experience, AI is not automatically good or bad for software developer productivity. Instead, you should treat it as a system change that interacts with your workflow, review policies, and deployment pipelines.

Let’s review the data before we explain further.

- DORA 2024 research shows that AI coding assistants can increase individual developer output in some contexts, but it also shows that speed gains do not automatically translate into better delivery performance.

- Other studies report that AI coding agents can make developers 19% slower, even when those developers believe they are 20% faster.

- Still, according to the 2025 DORA research, over 80% of developers believe that AI makes them more productive. However, the report introduces some nuance: only teams with solid workflows and practices see improvements.

And we think that’s exactly the missing link you need to answer this question.

In other words, you will see gains when generative AI supports well-defined tasks, strong code review practices, and stable CI/CD pipelines. But you may see friction when AI-generated code increases review load, creates rework, or exposes gaps in organizational adjustment.

We believe that AI acts as an amplifier (and we explain all about it here).

If your software engineering practices are disciplined, AI coding tools can compress cycle time and increase throughput. But if your process already has bottlenecks, AI can accelerate one stage while slowing another. That is why you should evaluate AI capabilities in the context of your real delivery system.

Why Measure Developer Productivity with AI?

Measuring how AI affects developer productivity shows where it actually streamlines workflows and improves code quality, and where it creates new problems in the development process.

AI and Developer Workflows

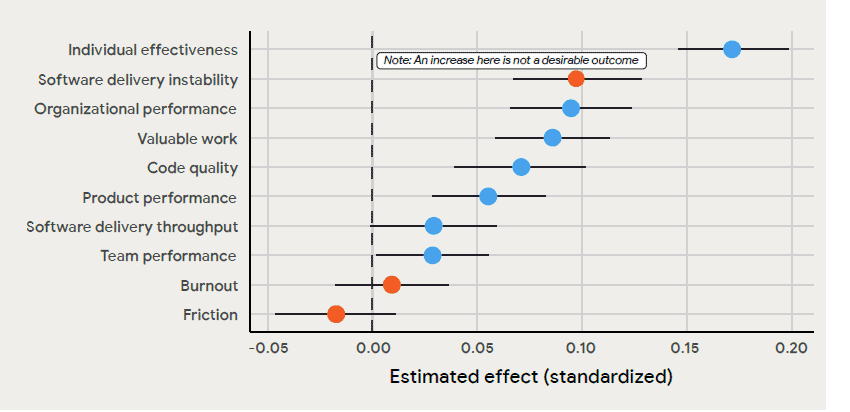

AI adoption is showing clear correlations with improved productivity and developer experience.

According to the 2025 DORA report, we see good scores in terms of individual effectiveness, organizational performance, and valuable work. Adidas, as one example, saw productivity gains of 20-30%.

The 2024 DORA report has more precise figures we’ll cite below:

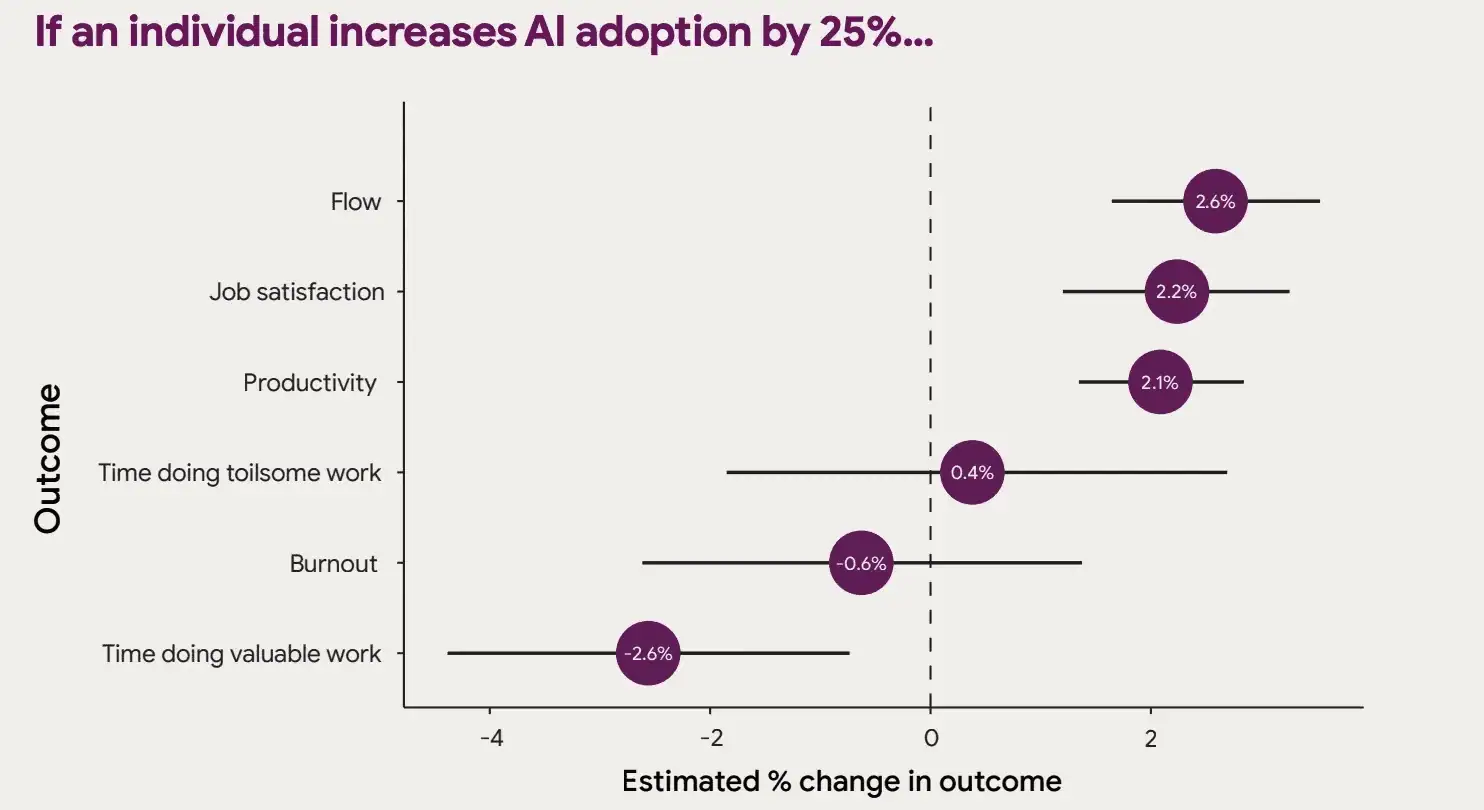

- A 25% increase in AI adoption is linked to a 2.1% rise in productivity. Basically, AI helps you accelerate daily tasks and optimize developer workflows.

- Developers also reported improvements in critical areas, including:

  - Flow (+2.6%): They can achieve deeper focus with fewer interruptions, which leads to smoother task execution.

  - Job satisfaction (+2.2%): AI tools reduce repetitive tasks so that developers can focus on meaningful work, which increases their job satisfaction.

  - Code quality (+3.4%): Automated code suggestions and error detection lead to fewer code errors and more maintainable code.

AI's Mixed Results

Despite the advantages, AI adoption also brings challenges. Studies on GitHub Copilot reveal some unexpected results:

- 41% increase in bugs: While AI-generated code can significantly improve efficiency and even enhance code quality in some cases, it’s not without risks. AI might generate bugs when working with incomplete or ambiguous requirements, handling edge cases it wasn’t trained for, or integrating with legacy systems. For instance, auto-generated code might miss nuanced business logic, creating functionality that technically works but doesn’t align with real-world needs. Both the benefits of increased efficiency and the challenges of potential bugs are equally valid. That’s why you need careful human oversight and thorough testing.

- Burnout: Developers using Copilot experienced a 17% lower burnout risk, but this was less than the 28% reduction reported among those without access to Copilot. This suggests AI’s impact on burnout may vary depending on how it is integrated into workflows. For example, when used to handle repetitive or boilerplate tasks, AI can reduce cognitive load and free up developers for more creative work, helping to alleviate burnout. However, if the integration adds complexity or requires significant oversight to fix errors, it may diminish these benefits.

DORA's Key Observations

The DORA 2024 and 2025 reports provide a balanced view of AI’s impact on the SDLC. While there are notable improvements in certain areas, the challenges highlight that AI integration is still very complex.

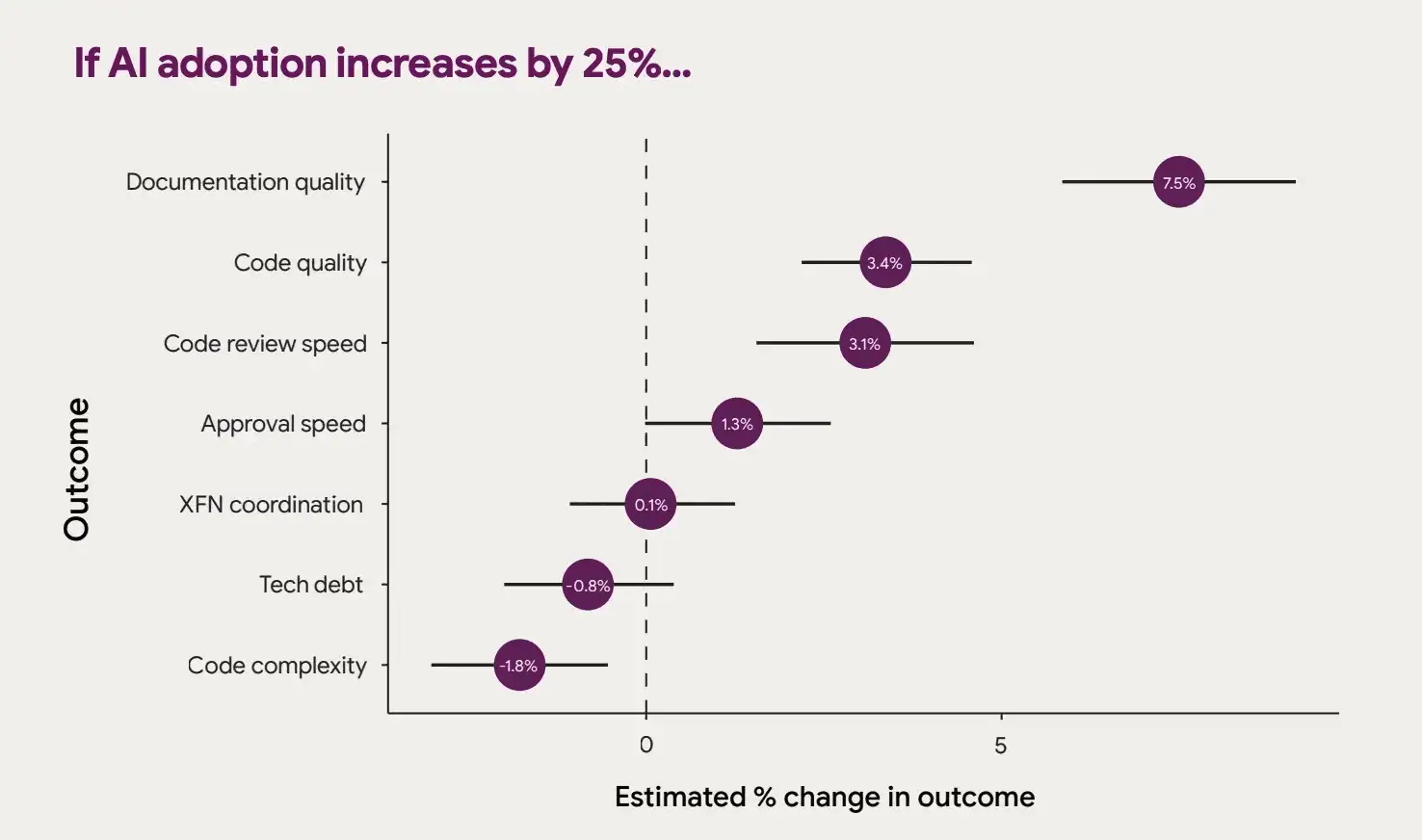

Improvements

According to DORA 2024, we’re seeing improvements in:

- Documentation quality (+7.5%): AI helps create clearer, more comprehensive code documentation by automating the summarization of complex code snippets and ensuring consistency across projects.

- Code review speed (+3.1%): AI accelerates code reviews by detecting potential bugs, style inconsistencies, and security vulnerabilities.

- Approval speed (+1.3%): AI streamlines the review and documentation stages, enabling faster approvals and reducing bottlenecks in the development process.

The 2025 report showcases improvements in:

- Perceived impact on individual productivity: More than 80% of survey respondents believe their productivity increased by some measure, whereas only 5% believe it decreased.

- Code quality: 59% of respondents believe their code quality improved after implementing AI.

Negative Impacts

The DORA 2024 report focuses on negative impacts more closely than the 2025 research.

- Delivery throughput (-1.5%): AI adoption slightly decreases delivery throughput, usually due to over-reliance, learning curves, and increased complexity.

- Delivery stability (-7.2%): Stability drops significantly because AI tools can generate incorrect or incomplete code, which increases the risk of production errors.

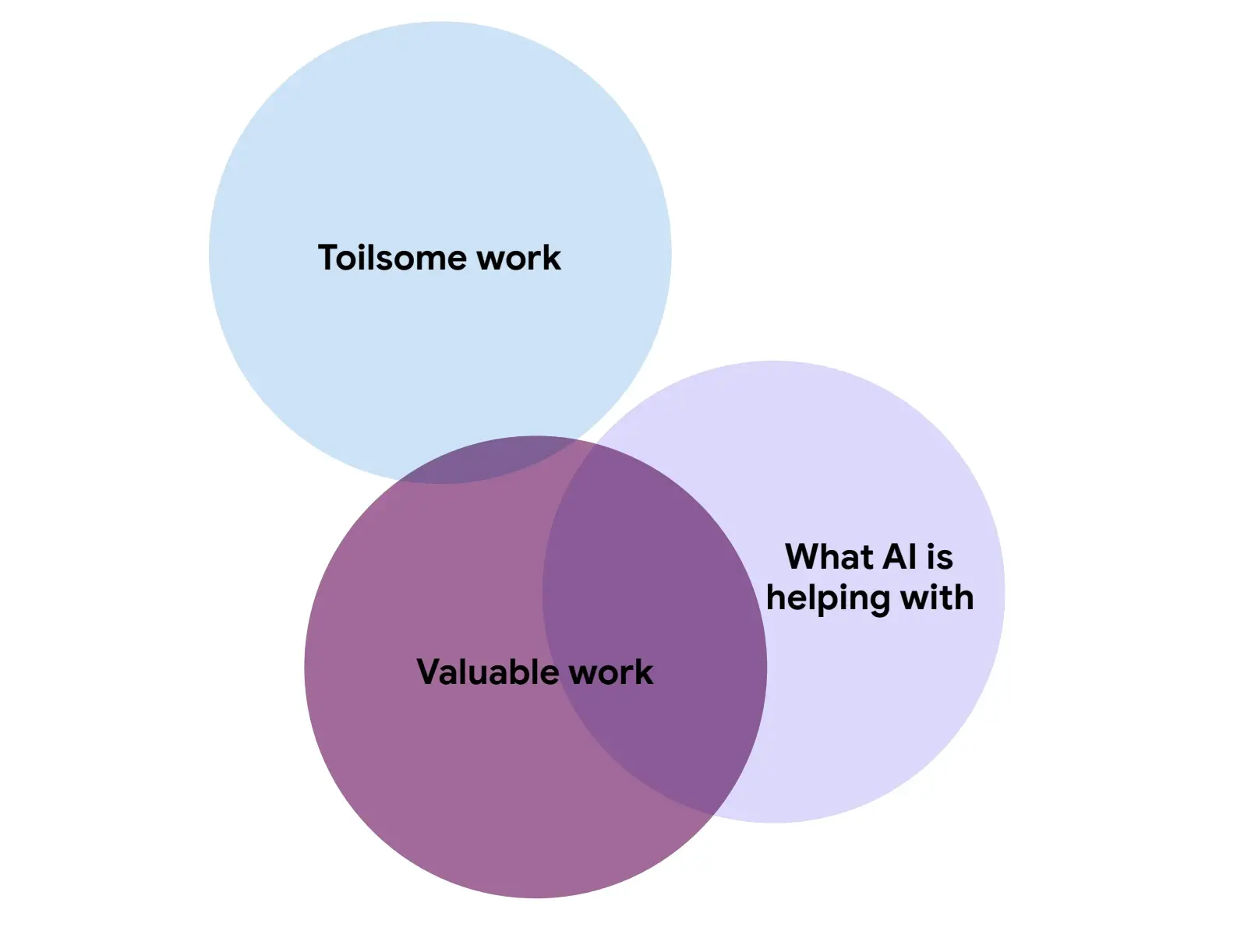

- Vacuum hypothesis: While AI speeds up tasks like coding and documentation, it doesn’t address toilsome work such as managing technical debt. As a result, toil levels remain largely unaffected.

Adoption Challenges

The adoption of AI tools remains uneven despite their transformative potential:

- According to the DORA 2025 report, trust in AI-generated code is still moderate. Only 24% of respondents trust AI-generated output a lot; 69% trust it somewhat or a little; and 7% don’t trust it at all.

- 30-40% of engineers refused to use AI tools like Copilot in trials conducted by Microsoft, Accenture, and a Fortune 100 company, even when these tools were readily available.

- AI tools perform better with well-documented programming languages like Python. They are less effective with less common languages, which limits adoption in diverse development environments.

Categories of AI Use Cases in Developer Productivity

The following categories show how AI is reshaping developer workflows:

1. AI-Assisted Code Reviews

AI‑powered reviewers scrutinize pull requests for bugs, vulnerabilities, and deviations from coding conventions. Tools such as Qodo (formerly CodiumAI), DeepCode AI, and CodeRabbit scan codebases, suggest improvements, and even generate unit tests.

Because these assistants spot potential issues before they integrate into the main branch, developers spend less time on manual reviews, and teams get faster feedback cycles.

We still believe that human judgment is needed to handle nuanced logic or hard‑to‑document codebases. But automated reviewers free engineers to focus on higher‑value work.

2. Enhanced Monitoring and Anomaly Detection

AI-powered monitoring systems can spot unusual patterns and anomalies in real-time. This significantly cuts down on the Mean Time to Detection (MTTD) and Failed Deployment Recovery Time – formerly Mean Time to Recovery (MTTR).

When combined with incident‑response workflows, these systems help teams act quickly on production issues. Pair them with human oversight and clear escalation paths so that false positives are filtered out before they trigger unnecessary responses.

These systems are especially effective in environments where downtime has serious consequences, because faster detection and response protect the reliability and stability of production systems.

3. Predictive Insights for Deployment Risks

AI models can analyze historical deployment data to identify features or code changes that are likely to cause failures.

However, predictive risk analysis pays off only when paired with strong engineering practices like CI/CD and fast feedback loops.

Remember: AI amplifies existing strengths and weaknesses. That’s why the DORA 2025 report underlines that teams without solid practices see little benefit from AI adoption.

4. AI in Pipeline Automation

Continuous integration and continuous deployment (CI/CD) systems now leverage AI to adjust build configurations, test ordering, and deployment strategies based on repository size and complexity.

In the DORA 2025 findings, researchers stress the need for feedback loops that keep pace with accelerated code generation. Dynamic pipeline tuning helps avoid bottlenecks, supports independent deployments, and contributes to smoother releases.

5. Adaptive Testing with AI

AI helps you scale testing processes by generating test cases automatically based on recent code changes. This approach reduces manual effort, especially in regression testing, where repetitive tasks can be time-consuming.

With AI handling these tests, human testers can concentrate on exploring edge cases or unique scenarios. This ensures broader test coverage, which makes software releases more robust and reliable while maintaining high product quality.

6. AI Pair Programming and Code Generation

The most visible use of AI in development is as a coding partner. Tools such as GitHub Copilot, Tabnine, and Amazon CodeWhisperer predict the developer’s next move and propose full functions or boilerplate structures.

In some cases, these assistants can help cut down time spent writing repetitive code and reduce typos.

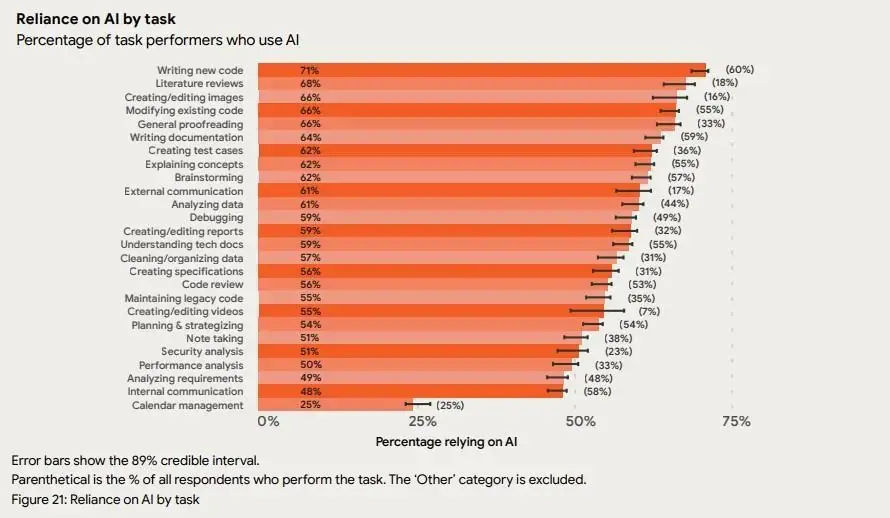

DORA’s 2025 survey supports this trend: writing new code is the top use for AI, with 71% of respondents who write code relying on AI for this purpose. While generative assistants accelerate development, engineers still need to validate the output and maintain context.

7. Automated Documentation and Knowledge Summarization

Documentation typically gets deprioritized, but AI can draft technical descriptions, API docs, and even diagrams directly from a codebase. Tools like Mintlify and Notion AI can help you summarize meeting notes and create project briefs. These tools keep documentation synchronized with code changes and speed up onboarding.

Apart from code, large language models can summarize literature reviews, modify existing text, and proofread. In fact, DORA’s 2025 report notes that about two‑thirds of respondents use AI for literature reviews, modifying existing code, and proofreading (see the figure above).

This broad adoption shows how AI is becoming a staple for information‑heavy tasks.

8. Intelligent Debugging and Troubleshooting

It's well-known that debugging consumes substantial developer time. AI-powered coding tools can analyze stack traces, trace execution paths, and propose fixes within seconds.

For example, if you introduce a typo into a React application and trigger a runtime error, an AI assistant can identify the incorrect import and suggest the correct file almost instantly. This type of intelligent troubleshooting shortens feedback loops, especially when you are working in an unfamiliar or complex codebase.

9. AI‑Powered Continuous Learning

Modern developers must constantly learn new technologies. But with generative AI, you can summarize long videos, articles, or podcasts into concise bullet points.

In the DORA 2025 survey, 68% of respondents who perform literature reviews use AI to assist them. These tools help engineers stay current without sifting through hours of content, so they can support professional growth alongside product work.

10. Customized AI Experiences

Our experience is that AI does not suit every task equally. And DORA’s research shows that while generative assistants aid mechanical tasks, they can impede interpretive ones.

To reduce friction, developers should customize AI tools to align assistance with task complexity. This includes toggling inline suggestions, using on‑demand modes, and configuring repository settings.

That customization transforms AI from an occasional distraction into a more productive partner.

How to Measure AI’s Impact on Developer Productivity

AI tools can make you feel faster. Code suggestions appear quickly, boilerplate disappears, and tasks seem easier. But, as we saw above, real productivity is not about perception. It is about whether work moves through your system with less delay, less rework, and fewer failures.

To measure AI’s true impact, you need a structured approach. Here are the principles you should follow.

Pick the Right Metrics

Developers usually report higher confidence when using AI-powered coding tools, yet delivery time, rework, or stability can still worsen. So you need to distinguish a confidence boost from an actual throughput gain.

As such, track key metrics like lead time for changes, change failure rate, and failed deployment recovery time rather than activity signals (e.g., lines of code or how fast you write new code).
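
As a rough illustration, the three metrics named above can be derived from deployment records. The sketch below is a minimal one under stated assumptions: the `Deployment` record, its field names, and the sample timestamps are hypothetical and do not reflect any specific tool's schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical deployment record; field names are illustrative only."""
    first_commit_at: datetime                # first commit included in the change
    deployed_at: datetime                    # when the change reached production
    failed: bool = False                     # did this deployment cause a failure?
    recovered_at: Optional[datetime] = None  # when service was restored, if it failed


def lead_time_for_changes(deployments: list[Deployment]) -> timedelta:
    """Median time from first commit to production."""
    return median(d.deployed_at - d.first_commit_at for d in deployments)


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Share of deployments that caused a failure in production."""
    return sum(d.failed for d in deployments) / len(deployments)


def failed_deployment_recovery_time(deployments: list[Deployment]) -> Optional[timedelta]:
    """Median time to recover from a failed deployment, or None if none failed."""
    durations = [
        d.recovered_at - d.deployed_at
        for d in deployments
        if d.failed and d.recovered_at is not None
    ]
    return median(durations) if durations else None


# Made-up sample data for illustration.
t0 = datetime(2025, 1, 6, 9, 0)
deployments = [
    Deployment(t0, t0 + timedelta(hours=20)),
    Deployment(t0, t0 + timedelta(hours=30), failed=True,
               recovered_at=t0 + timedelta(hours=32)),
    Deployment(t0, t0 + timedelta(hours=50)),
    Deployment(t0, t0 + timedelta(hours=26)),
]

print(lead_time_for_changes(deployments))
print(change_failure_rate(deployments))
print(failed_deployment_recovery_time(deployments))
```

Medians are used here because a single slow change can skew an average. That is a design choice in this sketch, not a requirement of the metrics themselves.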

Track Flow Efficiency

More pull requests or more lines of code do not mean higher productivity. What matters is where time is spent: waiting, reviews, blocked states, and handoffs. According to Scrum.org, most product and engineering teams operate at only 15-25% flow efficiency, which means 75-85% of total lead time sits in queues and delays rather than active work. If AI accelerates coding but review queues grow, overall delivery will not improve.
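
To make the arithmetic concrete, flow efficiency can be treated as active time divided by total lead time. The following sketch uses hypothetical hours for a single work item and shows how faster coding can leave lead time unchanged when waiting time grows.

```python
def flow_efficiency(active_hours: float, waiting_hours: float) -> float:
    """Fraction of total lead time spent on active work (0.0 to 1.0)."""
    total = active_hours + waiting_hours
    if total <= 0:
        raise ValueError("total lead time must be positive")
    return active_hours / total


# Hypothetical work item before AI: 8 active hours, 32 hours waiting in queues.
before_ai = flow_efficiency(active_hours=8, waiting_hours=32)

# Hypothetical work item after AI: coding is faster (5 active hours),
# but the review queue grew, so waiting rose to 35 hours.
after_ai = flow_efficiency(active_hours=5, waiting_hours=35)

print(f"before: {before_ai:.1%}, after: {after_ai:.1%}")
```

In this made-up case the total lead time stays at 40 hours in both scenarios, so delivery is no faster even though active work shrank.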

Measure Quality Alongside Speed

Faster output becomes costly if it increases defects or reverts. Maintainability, incident rates, and failed deployment recovery time must move in the right direction as coding speed increases.

Compare Before and After Changes

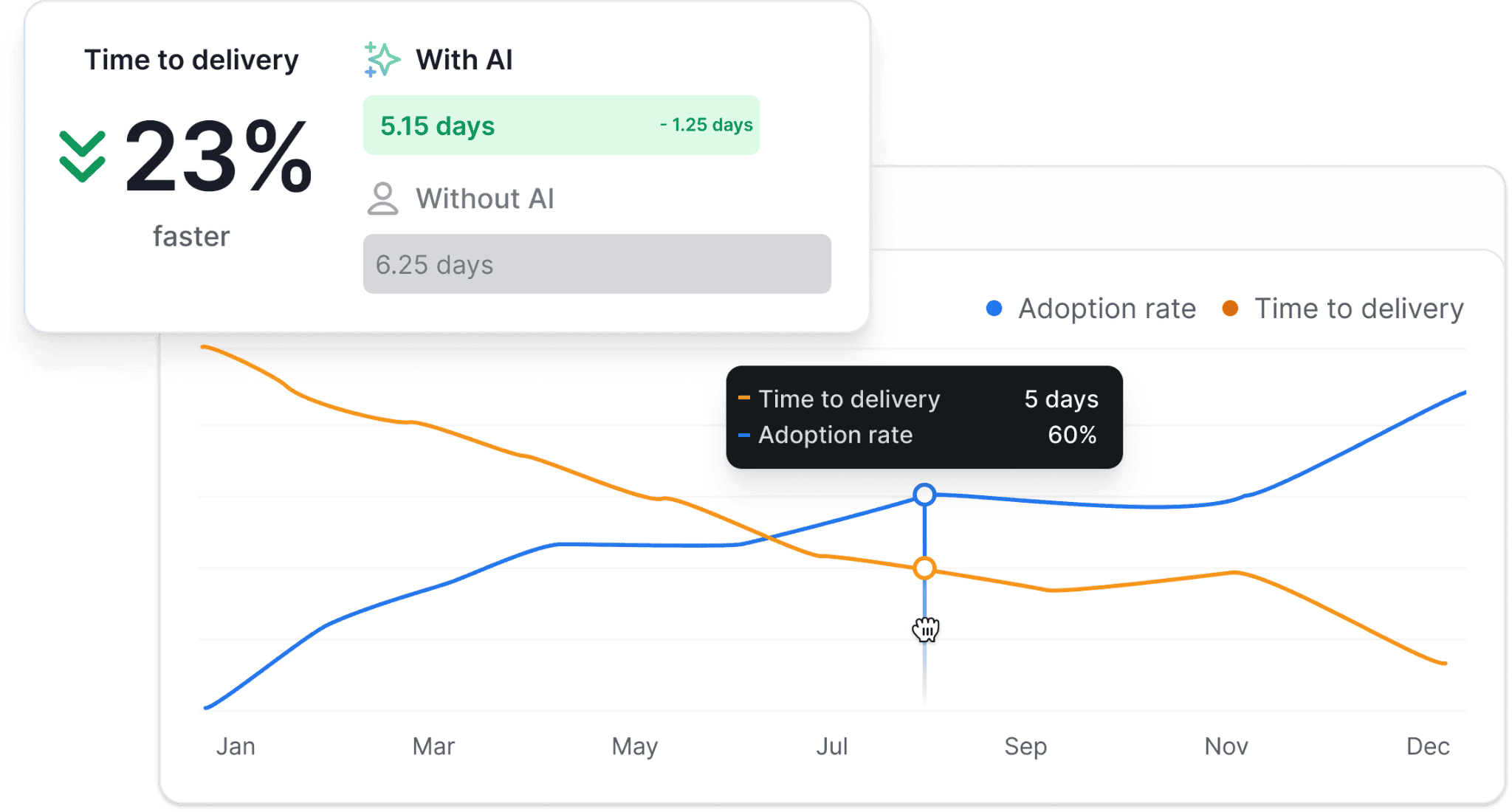

When you introduce an AI assistant, you are changing how work is performed. So the cleanest way to evaluate impact is to use tools that measure the same workflows under two conditions: without AI and then with AI enabled.

That removes selection effects and differences in team maturity from the equation. In longitudinal studies that measured developers before and after adoption, well-scoped coding tasks were completed 21–36% faster.

This kind of within-team comparison isolates the effect of the tool itself. In contrast, cross-team or per-individual comparisons usually create gaming behavior, distort incentives, and weaken trust in the data.
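
A within-team comparison like this can be sketched in a few lines. The numbers below are invented for illustration, and `percent_change` is a hypothetical helper rather than part of any tool mentioned in this guide.

```python
from statistics import median


def percent_change(before: list[float], after: list[float]) -> float:
    """Relative change in the median, in percent. Negative means the metric dropped."""
    baseline = median(before)
    if baseline == 0:
        raise ValueError("baseline median must be non-zero")
    return (median(after) - baseline) / baseline * 100


# Same team, same workflow, two periods (hypothetical values).
cycle_time_hours_before = [50, 42, 61, 48, 55]
cycle_time_hours_after = [40, 38, 47, 35, 44]

# Change failure rate per sprint, in percent (hypothetical values).
failure_rate_before = [10, 12, 8]
failure_rate_after = [15, 14, 16]

print(percent_change(cycle_time_hours_before, cycle_time_hours_after))
print(percent_change(failure_rate_before, failure_rate_after))
```

Reading both results together matters: in this example cycle time improves while the failure rate worsens, which is the kind of trade-off a speed-only view would hide.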

Detect Bottleneck Migration

AI may accelerate coding, yet review queues, testing, or deployment stages can slow down as a result. Without system-level measurement, you risk assuming improvement while constraints simply move downstream. Your metrics should make those shifts visible so you can address the real limiting stage.
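
One simple way to surface such a shift is to compare time per workflow stage before and after adoption. The sketch below assumes a hypothetical four-stage workflow with made-up median hours per stage.

```python
def bottleneck(stage_hours: dict[str, float]) -> str:
    """Return the stage where work spends the most time."""
    return max(stage_hours, key=stage_hours.get)


def stage_shifts(before: dict[str, float], after: dict[str, float]) -> dict[str, float]:
    """Change in hours per stage; positive values mean the stage got slower."""
    return {stage: after[stage] - before[stage] for stage in before}


# Hypothetical median hours per stage for the same team.
before = {"coding": 20.0, "review": 10.0, "testing": 6.0, "deploy": 2.0}
after = {"coding": 9.0, "review": 21.0, "testing": 7.0, "deploy": 2.0}

print("bottleneck before:", bottleneck(before))
print("bottleneck after:", bottleneck(after))
print("shift per stage:", stage_shifts(before, after))
print("total before/after:", sum(before.values()), sum(after.values()))
```

In this invented data set, coding time drops sharply but review absorbs the difference, so total time does not improve and the constraint has simply moved.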

Tie AI Usage to Delivery Outcomes

Adoption rates, token counts, and AI-written code percentages do not prove business value. You need to see whether higher adoption correlates with shorter delivery time or improved DORA metrics.
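
As a first check, you can look at how adoption moves with a delivery metric over time. The sketch below computes a plain Pearson correlation on hypothetical weekly data. The `pearson` helper and all values are illustrative assumptions, and a correlation on its own does not show that AI caused the change.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equally sized series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have the same length (at least 2)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return covariance / (spread_x * spread_y)


# Hypothetical weekly data for one team.
ai_assisted_pr_share = [10, 20, 35, 50, 60, 70]      # % of PRs with AI assistance
median_lead_time_hours = [52, 50, 47, 41, 40, 36]    # lead time for changes

r = pearson(ai_assisted_pr_share, median_lead_time_hours)
print(f"correlation between adoption and lead time: {r:.2f}")
```

A strongly negative value here would mean lead time fell as adoption rose. Check stability metrics in the same way before drawing conclusions.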

And this is where Axify’s AI Impact feature fits.

It connects the three dimensions reported by the DORA group in 2025 (actual usage, acceptance rate, and habitual use) to delivery metrics across your entire workflow.

Instead of tracking merely the number of active licenses, you can compare performance with and without AI and quantify its effect on speed, quality, and productivity across the development lifecycle.

You can also see:

- Whether AI truly makes your teams productive

- Types of AI tools used

- Ineffective adoption

- Untapped potential

That kind of visibility turns AI from a tool into a measurable, strategic lever.

The DORA Perspective on Using AI for Developer Productivity

After thoroughly exploring the DORA Reports, we found that these are the most important aspects of using AI for developer productivity.

AI’s Role in Key Metrics

- Flow and productivity: Productivity increases by an estimated 2.1% for every 25% increase in AI adoption (according to DORA 2024), while the DORA 2025 report claims over 80% of respondents believe AI makes them more productive. That’s why 47% of respondents use AI tools every day.

| “Comparing some of 2025’s findings to last year’s, we get a sense that language models, tools, and workflows are evolving along with the people and organizational systems that interact with them. People have found ways to use AI to redirect their efforts to work they consider more valuable, and we’re starting to find ways for AI adoption to level up to better delivery throughput and product performance” (DORA 2025 Report) |

- Burnout: While AI enhances productivity, it has a limited impact on reducing toilsome tasks. However, the cause may lie with inefficient workflows and practices.

| “The stubborn persistence of some issues, including the rise in instability, the flat levels of friction, and burnout, is not entirely a failure of the tool, but also a failure of the system to adapt around it (...) and possibly a failure of some of those systems to adapt to the new paradigm.” (DORA 2024 Report) |

- Code quality: AI contributes to better codebases by simplifying and improving them.

| “Connect your AI tools to your internal systems to move beyond generic assistance and unlock boosts in individual effectiveness and code quality.” (DORA 2025 Report) |

Challenges Identified by DORA

- The vacuum hypothesis: AI accelerates valuable tasks, such as coding and reviewing, but often leaves tedious and repetitive work unresolved.

| “By increasing productivity and flow, AI is helping people work more efficiently. This efficiency is helping people finish up work they consider valuable faster. This is where the vacuum is created; there is extra time. AI does not steal value from respondents’ work, it expedites its realization.” (DORA 2024 Report) |

- Delivery performance trade-offs: Increased AI adoption can negatively affect delivery performance, particularly with larger batch sizes. That’s why at Axify, we always advise teams to use small batches. The S.P.I.D.R. technique and the Walking Skeleton are both solid approaches.

| “Drawing from our prior years’ findings, we hypothesize that the fundamental paradigm shift that AI has produced in terms of respondent productivity and code generation speed may have caused the field to forget one of DORA’s most basic principles—the importance of small batch sizes.” (DORA 2024 Report) |

A Practical Framework for Using AI Without Breaking Delivery

The following steps explain a clear strategy for using AI to improve developer productivity:

Step 1: Define Clear Objectives

The first step in building a solid AI-driven productivity strategy is to define clear and measurable objectives. Start by identifying areas where AI can have the most impact, such as reducing repetitive tasks or improving code quality.

Pro tip: Set success metrics, like a reduction in cycle time or an increase in the percentage of high-quality code, to track progress. Realistic goals ensure that AI adoption aligns with team priorities and contributes meaningfully to the software lifecycle.

Step 2: Pick the Right AI Tools for Developer Productivity

Tool selection shapes your outcomes. Different AI-powered coding tools support different workflows, such as code generation, review assistance, documentation, or test creation. Choosing a tool without understanding your bottlenecks can create more issues.

So, you can start by mapping tools to constraints.

- If review queues are long, review-focused AI assistants may help.

- If onboarding is slow, documentation and code explanation tools may add more value.

Clear alignment between tool capabilities and workflow pain points reduces the risk of fragmented adoption, too.

At this stage, you should also document clear evaluation criteria for comparing tools, including integration with the existing toolchain, impact on workflow stages, and measurable delivery outcomes.

Pro tip: Curious which AI tools are best for each use case? We discussed that in more depth here.

Step 3: Experimentation and A/B Testing

Conducting real-world experiments helps you gauge the effectiveness of your AI tools. For example, ANZ Bank’s trial with GitHub Copilot highlights the value of iterative testing.

Let’s explain.

Over six weeks, the bank assessed developer satisfaction, productivity gains, and error rates by comparing outcomes between teams using Copilot and a control group. The trial revealed significant improvements, such as a 42.36% reduction in task completion time for Copilot users and better code maintainability.
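
The core calculation behind a result like this is a comparison of task completion times between the control group and the group using the tool. Here is a minimal sketch with invented numbers. These are not ANZ Bank's data, and `percent_reduction` is a hypothetical helper.

```python
from statistics import mean


def percent_reduction(control: list[float], treatment: list[float]) -> float:
    """Reduction in mean task time for the treatment group, in percent."""
    control_mean = mean(control)
    if control_mean == 0:
        raise ValueError("control mean must be non-zero")
    return (control_mean - mean(treatment)) / control_mean * 100


# Hypothetical task completion times in minutes for the same set of tasks.
control_minutes = [30, 40, 50, 40]   # developers without the AI assistant
treated_minutes = [20, 30, 25, 25]   # developers with the AI assistant

print(f"{percent_reduction(control_minutes, treated_minutes):.1f}% faster")
```

With samples this small, a difference can easily be noise. A real trial would use more participants and a significance test before acting on the result.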

Step 4: Focus on Downstream Impacts

AI’s effects extend beyond immediate productivity improvements. Tools that accelerate coding may unintentionally introduce bugs, affecting delivery performance if left unchecked.

That’s why we advise engineering leaders to monitor downstream workflow stages after introducing AI tools. Faster coding can shift bottlenecks to code review, testing, or deployment. In some cases, increased coding speed also leads to larger batch sizes, as teams bundle multiple changes together before pushing them through the pipeline.

To manage this risk:

- Track metrics across the full delivery workflow. Monitor batch size, review queue time, change failure rate, and deployment stability after introducing AI-assisted development tools.

- If these metrics worsen, adjust workflows accordingly. For example, enforce smaller pull requests, strengthen review practices, or increase automated testing coverage.

AI adoption should improve the delivery system as a whole, not just accelerate one stage of the workflow.

Step 5: Build Guardrails for Safe Adoption

To ensure the safe integration of AI, you can establish centers of excellence to guide your best practices. These hubs can define those practices, provide training for development teams, and address ethical concerns related to AI-generated code.

They can also guide your teams in monitoring and validating AI outputs. This way, they avoid unintended consequences, such as code errors or security vulnerabilities.

Step 6: Measure Before and After Impact

Finally, measure impact longitudinally. Compare the same teams before and after AI adoption to isolate change. Avoid cross-team comparisons, which often distort incentives and reduce trust.

Track lead time for changes, change failure rate, and failed deployment recovery time to see whether AI improves both speed and stability. This is where tools like Axify’s AI Impact feature fit naturally.

It connects AI adoption and acceptance data with delivery metrics. The tool can help you quantify how AI affects cycle time, quality, and overall performance across your development lifecycle.

Step 7: Turn Insights into Decisions

AI adoption should not end with visibility. It should lead to controlled, system-level optimization.

As such, the real value comes from acting on what the data reveals.

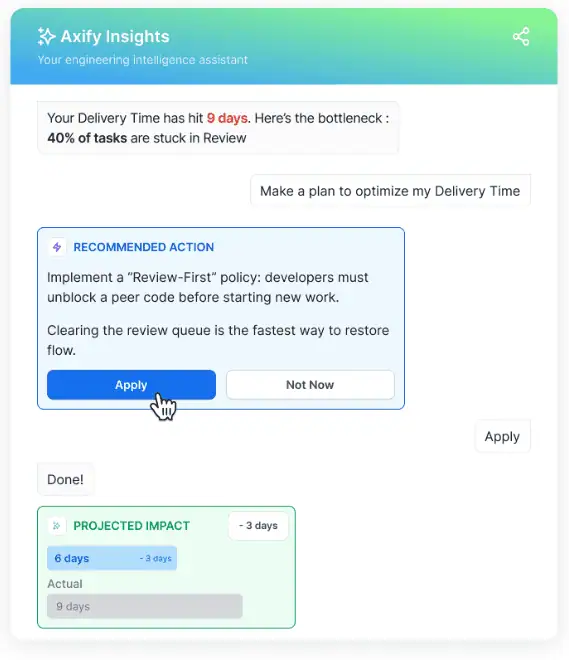

Once you identify shifts in cycle time, quality, or flow efficiency after AI adoption, the next step is diagnosis.

- Are improvements consistent across teams?

- Have bottlenecks migrated to review or testing stages?

- Is stability declining as speed increases?

This is where you need high-quality decision support.

Axify’s Intelligence layer analyzes workflow history, highlights emerging constraints, and recommends targeted interventions based on your actual delivery patterns.

Instead of manually interpreting dashboards, engineering leaders can investigate root causes through a built-in AI assistant and apply corrective actions directly from the platform.


Best Practices for Using AI in Engineering Teams

AI can support your workflow, but only if you apply it with discipline. Without structure, faster code generation can translate into larger review queues, hidden defects, and unstable releases. So instead of broad adoption, you need operational best practices that protect delivery.

Here’s what we recommend.

Use AI Mainly for Small, Well-Scoped Tasks

AI performs best when the task is clearly defined.

Small refactors, test generation, boilerplate code, and documentation updates create contained environments where model capabilities align with the request. In controlled settings like these, developers complete coding tasks 55% faster when AI is applied to clearly scoped work.

However, broad architectural changes or ambiguous requirements usually require deeper system understanding. Limiting AI use to well-scoped work reduces rework and prevents oversized pull requests that strain review capacity.

Treat AI Output as a Draft That Requires Review

AI-generated code should enter your workflow as a first draft rather than as a final decision. Even when suggestions look correct, they may ignore edge cases, internal conventions, or security constraints.

According to TechRadar, only 48% of developers always check AI output before committing changes. This increases the risk of bugs, duplicate code, and other vulnerabilities entering production.

As such, we recommend mandatory peer review and automated validation to protect stability. The goal is to keep quality aligned with speed rather than trading one for the other.

Adapt Prompts Continuously Based on Your Codebase

AI results improve when prompts reflect real context. You may already know that generic instructions produce generic code.

So, as your repository evolves, prompts must reference naming conventions, architecture patterns, and dependency structures. That has proven difficult for developers. In fact, ITPro states that developers report substantial iteration, with 61% saying AI responses require significant prompting and refinement before reaching acceptable quality.

We believe that you should treat prompting as a skill. Structured, context-rich prompts reduce back-and-forth cycles and improve output reliability.

Validate AI-Generated Code Through Tests

AI suggestions should pass through the same testing standards as human-written code. Unit tests, integration tests, and CI validation protect against regressions.

Faster code generation increases throughput only when test coverage keeps pace. Without automated validation, velocity gains can convert into delayed failures.

Maintain Small Batch Sizes

AI typically increases coding speed, which can unintentionally expand batch size. Larger pull requests raise review time and increase change failure risk. Keeping changes small preserves flow predictability and protects deployment stability.
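
A lightweight guardrail is to flag pull requests that exceed a size limit before they reach review. The sketch below is illustrative only: the `PullRequest` record, the PR numbers, and the 400-line threshold are assumptions, and each team should pick a limit that matches its own review capacity.

```python
from dataclasses import dataclass

# Hypothetical threshold; tune it to your team's review capacity.
MAX_CHANGED_LINES = 400


@dataclass
class PullRequest:
    """Hypothetical pull request record; field names are illustrative only."""
    number: int
    lines_added: int
    lines_deleted: int

    @property
    def changed_lines(self) -> int:
        return self.lines_added + self.lines_deleted


def oversized(prs: list[PullRequest], limit: int = MAX_CHANGED_LINES) -> list[PullRequest]:
    """Return pull requests whose changed lines exceed the limit."""
    return [pr for pr in prs if pr.changed_lines > limit]


# Made-up sample data.
prs = [
    PullRequest(number=101, lines_added=120, lines_deleted=30),
    PullRequest(number=102, lines_added=900, lines_deleted=150),
    PullRequest(number=103, lines_added=380, lines_deleted=20),
]

for pr in oversized(prs):
    print(f"PR #{pr.number} changes {pr.changed_lines} lines; consider splitting it")
```

A check like this could run in CI as a warning rather than a hard failure, so that legitimate large changes, such as generated files, can still go through with an explanation.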

Monitor Workflow Impact at the System Level

AI may improve one stage while slowing another.

For example, coding may accelerate while review or testing becomes constrained. So, measuring lead time for changes, review time, and failed deployment recovery time helps you detect these shifts early.

System-level visibility keeps productivity grounded in delivery performance rather than activity metrics.

How Engineering Leaders Can Support Developers

AI adoption changes how work is done, but tools alone do not improve delivery. The environment you create determines whether productivity gains hold or break under pressure. So your role shifts from tool selection to system design.

Here are the areas you should focus on:

- Autonomy: Give developers space to decide when and how to use AI. Clear objectives combined with local decision-making increase ownership. When teams control their workflow, adoption becomes intentional rather than forced.

- Trust: Build psychological safety around experimentation. If mistakes are punished, developers hide issues or start gaming metrics. When trust is present, risks surface early, and fixes happen before deployment.

- Workflow design: Align AI usage with your CI and CD stages. Faster coding without review capacity creates queues. Balanced workflow design prevents bottlenecks from shifting downstream.

- Guardrails: Define review standards, testing requirements, and security checks for AI-generated code. Guardrails protect stability without blocking innovation.

- Adoption enablement: Provide structured onboarding, shared prompt examples, and peer learning sessions. Clear enablement reduces uneven usage across teams.

- Not weaponizing metrics: Use delivery metrics to diagnose system constraints, not to rank individuals. When metrics become targets, behavior distorts and trust erodes.

- Protecting deep work: Reduce interruptions during coding blocks. Focused time improves code quality and reduces rework.

- Upskilling and training: Invest in prompt design, code review practices, and architectural thinking. Skill development increases the quality of AI-assisted output and strengthens long-term delivery performance.

Conclusion: Streamline AI Developer Productivity with Axify

AI can increase developer productivity, but only when you treat it as a system change rather than a shortcut. Coding speed alone does not guarantee better delivery performance, especially when review load, batch size, and stability are ignored.

Instead, disciplined objectives, structured experimentation, small batches, and longitudinal measurement protect both speed and quality.

What’s more, even strong adoption requires measurable outcomes to justify investment.

Axify helps with all that.

It connects AI adoption data to delivery metrics and adds an AI-powered decision layer that identifies bottlenecks, explains root causes, and recommends concrete actions based on your workflow history.

FAQs

Is AI making devs faster?

Yes, AI is making developers faster on clearly defined coding tasks. However, speed at the coding stage only improves delivery if bottlenecks aren’t transferred to the review, testing, and deployment stages.

Can AI reduce developer burnout?

Yes, AI can reduce developer burnout when it removes repetitive work and shortens feedback loops. However, burnout increases if faster coding leads to higher review pressure, larger batch sizes, or constant context switching. The DORA 2025 report suggests that AI reduces burnout in teams where AI is implemented correctly.

How do you measure AI for developer productivity?

You measure AI for developer productivity by comparing the same teams before and after adoption using delivery metrics. Focus on DORA and flow metrics to see whether speed improves without harming stability and workflow.

Should we mandate AI developer tools?

No, you should not mandate AI developer tools without context. Adoption works best when teams understand the goal, receive training, and retain autonomy in how they integrate tools into their workflow.

Will AI replace developers?

No, AI will not replace developers. Instead, it changes how work is performed, which means your role shifts toward architecture, review, validation, and system design.
