Software delivery can slow down between SDLC stages, not just in the development phase. Pull requests wait for reviews, test pipelines queue for capacity, and releases pause while teams coordinate changes.

Because of this, many engineering leaders start examining how work actually moves through their CI/CD pipeline. In addition to tracking sprint metrics (e.g., sprint burndown, completion rate, commitment vs. completion) and backlog data (number of issues, backlog size, issue age, priority), they start analyzing delivery flow.

The goal is simple: understand how requests, changes, and feedback move across the system so delays become visible.

A clear example of this comes from Newforma.

After analyzing delivery data with Axify, the team identified friction in the review and validation stages. Once those constraints were addressed, lead time for changes dropped by 63%, pull request cycle time fell by 60%, and deliveries eventually became 22x more frequent.

This kind of insight is exactly what value stream analysis helps you achieve in software-intensive product development.

So, in this guide, you will learn how value stream analysis works in modern software delivery. You will also see how leaders apply it to identify bottlenecks and guide system-level decisions.

P.S. Axify Intelligence extends this analysis further. It helps you examine delivery data, explains why metrics change, and recommends workflow adjustments you can implement directly from the platform. Contact us today to learn more.

What Is Value Stream Analysis?

Value stream analysis (VSA) examines how work moves through a system from request to delivery to identify delays, bottlenecks, and activities that do not contribute to customer value. In software organizations, this means studying how work flows across planning, development, review, testing, and release stages.

The concept originates from Lean manufacturing, where engineers analyzed the flow of materials through production systems to understand where time and effort were wasted.

That same thinking later expanded into software development.

The difference is that software systems move information rather than physical goods. Instead of tracking parts through a factory, you analyze the information flow of work as it moves through a continuous delivery pipeline. This may include feature requests, bug fixes, and infrastructure changes.

This perspective matters because, as explained above, delivery delays usually appear between stages. Lean value stream analysis helps you observe where time actually accumulates across the system.

Understanding what “value” means in software also clarifies why this analysis is important.

Value is not activity or effort. Instead, value appears when a change improves the finished product for users or the business. That includes delivering new functionality, fixing defects, learning from production feedback, or improving reliability. This is reflected through faster software deployments, fewer bugs, or shorter failed deployment recovery time.

From our experience, most delivery systems contain significantly more activity than actual value creation. Much of the work in a software delivery pipeline consists of waiting, coordination, or validation steps rather than direct product improvements.

Value stream analysis makes this imbalance visible by surfacing bottlenecks such as unjustified idle time or unexplained increases in cycle time.

Once those constraints become visible, you can decide which workflow improvements will reduce delays and improve delivery performance.


Value Stream Analysis vs. Value Stream Mapping

Value stream analysis is the analytical discipline used to evaluate system behavior. Value stream mapping (VSM), meanwhile, is the visualization technique used to represent that system.

The table below clarifies the distinction:

| Aspect | Value Stream Analysis | Value Stream Mapping |
| --- | --- | --- |
| Scope | Evaluates the entire delivery system from request to release. | Visualizes how work currently moves through the system. |
| Purpose | Identify bottlenecks, delays, and non-value work. | Show workflow stages and interactions. |
| Focus | Analytical reasoning about system behavior and improvement decisions. | Diagramming the flow of work and dependencies. |
| Outcome | Insights that guide workflow continuous improvement. | A map that helps teams understand the system structure. |

Value Stream Analysis vs. Process Mapping

Value stream analysis studies the entire delivery system end-to-end, while process mapping documents how a single activity operates.

We explain the difference in the comparison below:

| Aspect | Value Stream Analysis | Process Mapping |
| --- | --- | --- |
| Scope | Takes a broad, end-to-end view of the system and tracks how value moves from request to delivery. | Takes a narrow view, examining a specific task or workflow. |
| Purpose | Identifies delays, bottlenecks, and handoff stage friction across the whole system. | Breaks down individual steps to clarify how a task operates. |
| Focus | Strategic analysis of delivery behavior across teams and tools. | Tactical understanding of operational activities. |
| Outcome | System-level insights that guide workflow changes and improvement outcomes. | Process documentation used for training or optimization. |

Now that we’ve clarified the semantics of value stream analysis, let’s see what makes it so difficult in practice.

Why Value Stream Analysis Is Hard in Software

From our experience, value stream analysis is hard in software because work flows through distributed systems, teams, and tools. Delays may appear between activities as well as during them.

The most common challenges include:

- Invisible queues: Some traditional tracking tools do not show clearly where work sits idle. Pull requests can sit in review queues, test environments may be unavailable, and deployment approvals can pause releases. These waiting stages increase process time, but the delay only becomes visible when you undertake the full flow mapping process.

- Asynchronous work: Modern software delivery does not move through stages sequentially. Development, review, testing, and deployment often occur in parallel across multiple teams and systems. Automated pipelines may trigger independently, while pull requests, tests, and releases progress at different speeds. Because work moves asynchronously across these stages, it can pause, loop back, or accumulate before reaching production, making delays harder to diagnose.

- Cross-team dependencies: Delivery typically depends on changes from other teams, shared libraries, or infrastructure updates. When those dependencies are unmanaged, delays propagate across the system. And unfortunately, 68% of initiatives experience delivery disruptions due to unmanaged cross-team dependencies.

- Tool sprawl: Development workflows rely on many systems, such as source control, CI pipelines, ticketing platforms, and monitoring tools. When teams switch constantly between them, context breaks down. In fact, 75% of teams lose between 6 and 15 hours per week due to tool sprawl.

- Knowledge work vs. physical flow: Manufacturing processes move physical items through predictable stages. Software work “moves” ideas, decisions, and code. Because those steps depend on judgment from experienced people, delays might appear in review discussions, design clarification, or debugging.

Next, let's see what a modern VSA looks like.

What a Modern Value Stream Analysis Looks Like

Value stream analysis looks different in software delivery systems than in traditional production lines.

Development work depends on distributed tools, asynchronous collaboration, and automated pipelines. Traditional value stream analysis usually focused on static process representation, with techniques developed for manufacturing environments.

This type of VSA included:

- Waste categories: Analysis centered on identifying inefficiencies such as waiting time, rework, or handoff waste between stages. For example, a delay between development and testing might be labeled as waiting waste, but the underlying cause typically remained unclear.

- Manual observation: Mapping usually depended on workshops or interviews with teams. Engineers described how work moved through stages, and analysts translated those descriptions into flow diagrams. While useful, this method relied heavily on perception and less on automatically collected system data.

- Process diagrams: Teams documented delivery stages in diagrams that showed steps like development, review, testing, and release. These diagrams helped illustrate the process, but they rarely captured how work actually behaved under real workloads.

Modern software delivery environments, however, produce large amounts of operational data. CI pipelines, pull request systems, and issue trackers continuously generate signals about how work moves through the system.

Because of that shift, value stream analysis in Agile approaches must rely on real-time observation. Modern VSA, therefore, requires a different operating model:

- Use telemetry: Delivery systems produce detailed signals from repositories, CI pipelines, and issue tracking tools. These signals reveal where process items slow down, such as pull requests waiting for review or deployments paused in release pipelines.

- Measure flow continuously: Instead of analyzing delivery only during workshops, teams can observe value stream mapping metrics over time and in real time. Continuous measurement helps reveal patterns such as recurring review queues or environment bottlenecks.

- Detect patterns automatically: Delivery systems generate more signals than humans can analyze manually. Automated analysis helps identify problematic trends, such as slow review pickup times or growing work-in-progress queues.

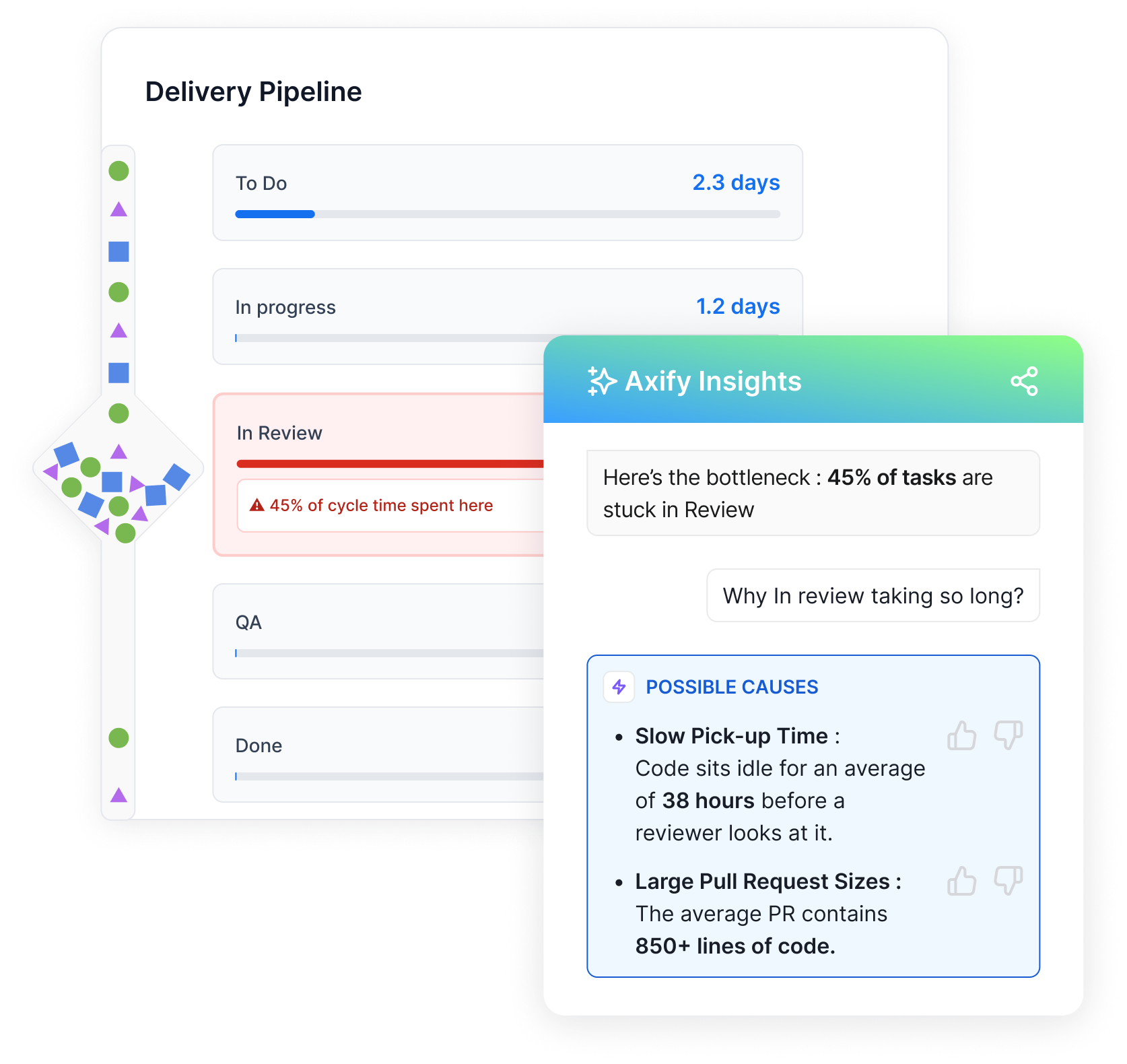

- Support decisions: Ultimately, the goal of modern VSA is not simply to visualize delivery but to guide operational decisions. Axify Intelligence can extend analysis beyond visualization. It examines delivery metrics, identifies bottlenecks, and recommends concrete actions you can apply to improve flow.

ALT: Axify delivery pipeline showing review bottleneck and workflow insights.

The Value Stream Analysis Process (for Software Teams)

A structured value stream analysis process helps you understand how work actually moves from request to production. The process examines how the full delivery system behaves under real workload conditions.

To make that analysis practical, you can follow the value stream analysis steps below. They mirror traditional mapping techniques but adapt them to modern software delivery environments.

Step 1: Define the Value Stream

First, we recommend defining the boundaries of the system you want to analyze. This usually includes a product, service, or user journey that produces customer value. For example, a feature request may move from product specification to development, testing, deployment, and user feedback.

Defining the stream creates a shared scope so every team understands which stages and dependencies belong to the analysis.

Step 2: Observe Real Flow (Not Intended Flow)

Next, examine how work actually moves through your delivery system.

Teams sometimes assume their workflow follows the stages defined in their boards or process documentation. In practice, modern software delivery is not strictly sequential: development, testing, review, and deployment happen asynchronously and across multiple teams.

Because of this, changes rarely move through stages in a straight line. Pull requests may wait in review queues, changes may return for rework after testing, or multiple updates may accumulate into larger batches before deployment.
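One way to compare real flow with intended flow is to count how often a work item moves backward through the stages. The sketch below is a minimal illustration, assuming you can export each item's ordered stage history from your tracker. The stage names, the `STAGE_ORDER` list, and the sample histories are hypothetical.

```python
# Hypothetical stage order; adjust to match your own workflow.
STAGE_ORDER = ["planning", "development", "review", "testing", "release"]


def count_rework_loops(transitions):
    """Count backward moves in a work item's stage history.

    `transitions` is the ordered list of stages the item actually entered.
    A move to an earlier stage (e.g. testing -> development) counts as rework.
    """
    rank = {stage: i for i, stage in enumerate(STAGE_ORDER)}
    loops = 0
    for previous, current in zip(transitions, transitions[1:]):
        if rank[current] < rank[previous]:
            loops += 1
    return loops


# Illustrative histories (made-up data, not from a real tracker).
intended = ["planning", "development", "review", "testing", "release"]
observed = [
    "planning", "development", "review", "development", "review",
    "testing", "development", "review", "testing", "release",
]

print(count_rework_loops(intended))  # 0
print(count_rework_loops(observed))  # 2
```

An item whose history matches the board exactly scores zero, so a non-zero count is a quick signal that the documented workflow and the real one have diverged.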

Real flow analysis focuses on how work moves across these overlapping stages, which gives you a more accurate view of how your delivery system operates in practice. With that view in place, you can move on to measurement.

Step 3: Measure Flow Behavior

Once the stream is visible, measurement reveals how the system behaves under load. Here, operational metrics provide the evidence needed to analyze delivery performance.

- Flow speed describes how quickly work moves through the system. Typical signals include lead time, cycle time, and deployment frequency.

- Flow stability shows whether releases remain reliable during change. Signals include change failure rate, mean time to recovery (MTTR), and escaped defects.

- Flow efficiency evaluates how much of its time work spends actively moving versus waiting. This analysis often reveals where work in progress accumulates across the system, such as in pull request queues, deployment pipelines, or overloaded review stages.

- Flow predictability examines delivery variance, such as growing queues or unpredictable completion times. These signals help leaders identify systemic patterns rather than isolated delays.
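As a rough illustration of the speed and efficiency signals above, the sketch below derives lead time, cycle time, and flow efficiency from three timestamps per work item. It assumes lead time runs from request to deployment and cycle time from work start to deployment. Definitions vary between teams and tools, so treat these as assumptions. The field names and data are made up.

```python
from datetime import date
from statistics import median

# Made-up work items; the field names are assumptions for this sketch.
items = [
    {"requested": date(2024, 3, 1), "started": date(2024, 3, 4),
     "deployed": date(2024, 3, 11), "active_days": 3},
    {"requested": date(2024, 3, 2), "started": date(2024, 3, 3),
     "deployed": date(2024, 3, 7), "active_days": 2},
    {"requested": date(2024, 3, 5), "started": date(2024, 3, 10),
     "deployed": date(2024, 3, 20), "active_days": 4},
]


def flow_metrics(item):
    """Return lead time, cycle time, and flow efficiency for one item."""
    lead_time = (item["deployed"] - item["requested"]).days
    cycle_time = (item["deployed"] - item["started"]).days
    return {
        "lead_time_days": lead_time,
        "cycle_time_days": cycle_time,
        # Share of the lead time spent on active work rather than waiting.
        "flow_efficiency": item["active_days"] / lead_time,
    }


metrics = [flow_metrics(item) for item in items]
median_lead_time = median([m["lead_time_days"] for m in metrics])

print(metrics[0])
print(median_lead_time)  # 10
```

In this sample, the first item has a 10-day lead time with only 3 active days, so 70% of its elapsed time was waiting.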

Step 4: Identify Systemic Constraints

At this point, your goal shifts from observation to diagnosis. Instead of focusing on local inefficiencies, you identify constraints that affect the entire system.

For example, you can examine:

- Queue time between stages (for example, how long pull requests wait for review or deployment)

- Rework loops, such as changes that return from testing or review multiple times

- Batch size patterns, including unusually large pull requests or releases

- Workflow handoffs between teams, which often introduce coordination delays

- Stage-level cycle times, to identify where work spends the most time
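To show how queue time between stages can be measured, here is a minimal sketch that computes the average wait at each handoff and picks the largest one as the candidate constraint. It assumes a log with one row per stage visit, recording when active work started and finished. The log format, item IDs, and hour values are hypothetical.

```python
from collections import defaultdict

# (item_id, stage, hour work started in stage, hour work finished in stage)
# Hours are offsets from an arbitrary starting point; made-up data.
stage_log = [
    ("A", "development", 0, 10),
    ("A", "review", 34, 36),
    ("A", "testing", 40, 46),
    ("B", "development", 5, 12),
    ("B", "review", 60, 61),
    ("B", "testing", 63, 70),
]


def average_queue_hours(log):
    """Average idle time between finishing one stage and starting the next."""
    visits_by_item = defaultdict(list)
    for item_id, stage, start, end in log:
        visits_by_item[item_id].append((start, end, stage))

    waits = defaultdict(list)
    for visits in visits_by_item.values():
        visits.sort()  # order each item's stage visits by start time
        for (_, prev_end, prev_stage), (next_start, _, next_stage) in zip(
            visits, visits[1:]
        ):
            waits[(prev_stage, next_stage)].append(next_start - prev_end)

    return {handoff: sum(v) / len(v) for handoff, v in waits.items()}


averages = average_queue_hours(stage_log)
constraint = max(averages, key=averages.get)

print(averages)
print(constraint)  # ('development', 'review')
```

Here, active review takes one or two hours, yet work waits 36 hours on average before review begins, so the handoff into review is where to look first.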

Step 5: Design Improvements

Next, you design targeted changes that address the constraint. Improvements usually involve small, testable adjustments, such as reducing pull request size, introducing review rotation, or limiting batch size.

This iterative mindset reflects the Lean approach, where teams experiment with changes and observe the system response.

Step 6: Validate with Before/After Data

Finally, you validate improvements using delivery data. Trend lines show whether lead time shortens, queues shrink, or stability improves.

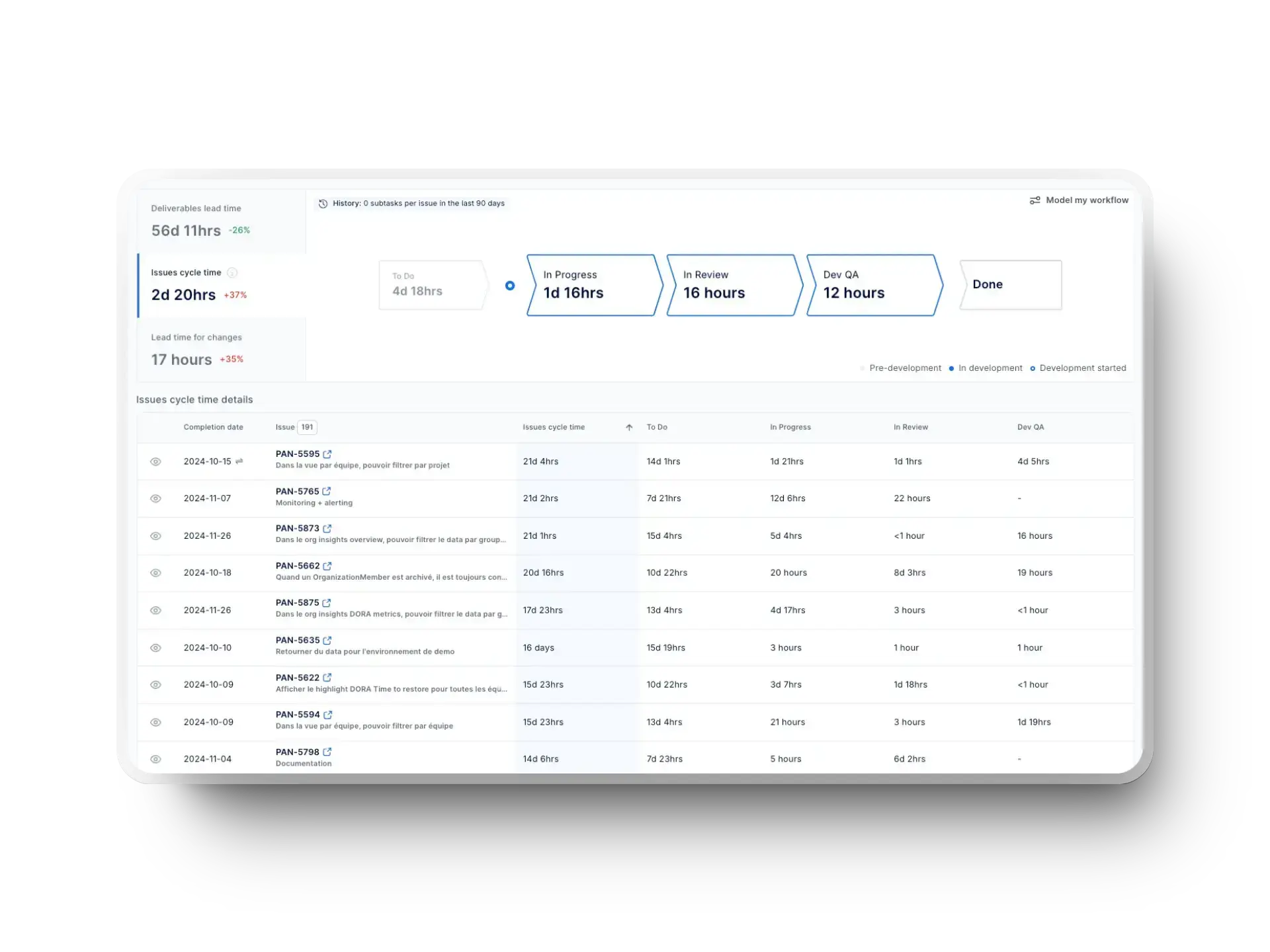

Axify’s value stream mapping (VSM) feature shows these trend lines across the delivery pipeline. This way, you can compare delivery behavior before and after adjustments and confirm whether the system actually improved.
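If you want to run a similar before/after check on exported data, the sketch below compares median lead time on either side of the date a change was introduced. It is a simplified illustration rather than a description of how Axify computes its trend lines. `CHANGE_DATE` and the delivery records are hypothetical.

```python
from datetime import date
from statistics import median

# Hypothetical date on which the improvement was introduced.
CHANGE_DATE = date(2024, 4, 1)

# (deployment date, lead time in days); made-up data.
deliveries = [
    (date(2024, 3, 4), 12), (date(2024, 3, 11), 9),
    (date(2024, 3, 18), 14), (date(2024, 3, 25), 11),
    (date(2024, 4, 8), 8), (date(2024, 4, 15), 6),
    (date(2024, 4, 22), 7), (date(2024, 4, 29), 5),
]


def compare_before_after(records, change_date):
    """Return median lead time before and after, plus the percent change."""
    before = [lead for day, lead in records if day < change_date]
    after = [lead for day, lead in records if day >= change_date]
    median_before = median(before)
    median_after = median(after)
    change_pct = (median_after - median_before) / median_before * 100
    return median_before, median_after, change_pct


median_before, median_after, change_pct = compare_before_after(
    deliveries, CHANGE_DATE
)
print(median_before, median_after, round(change_pct, 1))  # 11.5 6.5 -43.5
```

The median is used instead of the mean so that a single unusually slow delivery does not hide or exaggerate the effect of the change.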

Value Stream Analysis Examples

To understand how analysis works in practice, it helps to look at concrete examples.

Example 1: BDC Engineering Transformation With Axify

Two teams at the Business Development Bank of Canada (BDC) examined how work moved through planning, development, testing, and release, using Axify’s value stream mapping. This delivery flow analysis exposed delays in pre-development coordination, quality control, and task planning.

The teams significantly improved flow with targeted improvements: data standardization, earlier QA involvement, smaller backlog items, and work-in-progress limits.

Within three months, delivery time accelerated by 51%, development capacity increased by 24%, and recurring productivity gains reached $700K annually. These results came from identifying system bottlenecks and making operational adjustments based on continuous delivery metrics.

Example 2: Feature Delivery Value Stream

A typical feature delivery stream starts with a request and moves through planning, development, review, QA, and finally release. On paper, this sequence appears straightforward. Yet real systems behave differently once work enters the pipeline.

Let’s say delivery time accumulates in queues between stages.

You may see pull requests sitting idle in review queues while reviewers finish other work. QA environments may be unavailable when testing begins. Release coordination may pause deployments until multiple teams align their schedules.

Manual diagrams created after the fact become outdated quickly.

But if you use continuous delivery data, you can see issues happening in real time. For example, you may notice an increase in lead time even when coding time remains short because queues build in the review and testing stages. Rework may also spike when defects return from QA after delayed feedback.

These observations lead to operational changes.

As such, you can decide to change review ownership, automate test environments, or shift release coordination to smaller deployment batches. In other words, analysis moves the focus from individual productivity to system behavior.

Example 3: Pull Request Review Bottleneck

Another common scenario appears in the pull request workflow. Let’s say your code moves quickly from development to submission, yet progress slows once the review stage begins.

In many teams, that happens because reviewers balance multiple responsibilities. As a result, pull requests enter a queue where they wait for attention. Over time, that waiting period expands the cycle time even though the actual review activity remains short.

This pattern has become even more common with AI coding assistants.

Tools that accelerate code generation allow developers to produce changes faster, which increases pull request volume. If review capacity does not grow at the same pace, queues form more easily and delivery flow slows despite faster development.
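A deliberately simple model makes this effect concrete. The sketch below assumes a fixed number of pull requests opened and reviewed each day. Real arrival and review rates vary, and the numbers are illustrative only.

```python
def simulate_review_backlog(prs_opened_per_day, reviews_per_day, days):
    """Track how many pull requests are still waiting at the end of each day."""
    backlog = 0
    history = []
    for _ in range(days):
        backlog += prs_opened_per_day
        backlog -= min(backlog, reviews_per_day)  # cannot review more than exists
        history.append(backlog)
    return history


# Illustrative numbers: review capacity stays at 8 per day in both cases.
before_ai = simulate_review_backlog(prs_opened_per_day=8, reviews_per_day=8, days=5)
after_ai = simulate_review_backlog(prs_opened_per_day=11, reviews_per_day=8, days=5)

print(before_ai)  # [0, 0, 0, 0, 0]
print(after_ai)   # [3, 6, 9, 12, 15]
```

With review capacity unchanged, three extra pull requests per day leave 15 waiting after one week, and the queue keeps growing for as long as arrivals exceed capacity.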

Delivery metrics expose this pattern clearly.

Lead time increases while coding duration stays stable, which may indicate a delay inside the review queue. You may also notice that rework grows because feedback arrives late in the cycle, which can force additional revisions.

This data helps you make better leadership decisions.

For example, you may decide to rotate review ownership between engineers, introduce review limits, or implement automation to reduce manual checks. Therefore, the answer is not faster coding, but smoother system flow.

Example 4: Release Coordination Constraints

Another common scenario occurs during release coordination. Let’s say your development teams complete changes regularly, but deployments happen only during fixed release windows.

In that situation, work usually accumulates at the final stage of the delivery system. Completed changes may wait for release approval, scheduled deployment windows, or cross-team synchronization.

Over time, this batching behavior increases delivery variability and operational risk.

Delivery metrics make this pattern visible. Deployment frequency may remain low even while development activity stays steady, and completed work may accumulate before the release stage.

This data helps inform leadership decisions.

For example, teams may decide to move toward smaller, continuous deployments or reduce approval gates that do not add operational value.
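To estimate how much waiting a fixed release window adds, you can compare the day each change was finished with the next available release day. The sketch below assumes releases happen on every multiple of `window_days`, counted from day 0. The completion days are made up.

```python
def wait_for_release(completed_day, window_days):
    """Days a finished change waits for the next release window.

    Releases are assumed to happen on days 0, window_days, 2 * window_days, ...
    """
    # Integer ceiling division finds the first release day >= completed_day.
    next_release = ((completed_day + window_days - 1) // window_days) * window_days
    return next_release - completed_day


def average_wait(completed_days, window_days):
    waits = [wait_for_release(day, window_days) for day in completed_days]
    return sum(waits) / len(waits)


# Days on which changes were finished (made-up data).
completed_days = [1, 3, 4, 8, 9, 13, 15, 20]

biweekly = average_wait(completed_days, window_days=14)
daily = average_wait(completed_days, window_days=1)

print(biweekly)  # 8.375
print(daily)     # 0.0
```

In this sample, a 14-day window adds more than eight days of waiting to the average change, even though development finished at a steady pace.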

Benefits of Value Stream Analysis for Software Teams

Value stream analysis shows how work actually moves through your delivery system. Once those signals become visible, the benefits appear across leadership decisions and daily team workflows.

These are the main advantages for each group.

For Engineering Leaders

We've seen firsthand that engineering leaders rely on delivery signals to guide operational decisions. Value stream analysis improves those signals by showing where work slows down and why.

First, prioritization becomes more precise.

Work items that appear urgent may not actually influence delivery speed. Analysis usually shows that delays come from batch sizes, review queues, test bottlenecks, or release coordination. Once those patterns become visible, leaders can shift effort toward the stages that constrain flow.

Next, predictability improves.

Delivery systems become difficult to forecast when queues grow unpredictably between stages. When the analysis identifies where variability appears, leaders can adjust processes such as review ownership or release batching to stabilize the system.

Finally, capacity planning becomes grounded in data.

Instead of estimating output based on headcount alone, leaders can observe how work progresses through the pipeline and adjust staffing or workflow policies accordingly. This perspective aligns delivery decisions with outcome mapping, where system behavior connects directly to organizational goals.

For Teams

For engineers and delivery teams, value stream management rooted in solid analysis reduces operational friction inside daily workflows.

What may initially appear as “chaos” usually reflects specific system conditions.

Pull requests may wait for review while engineers move to other tasks. Test environments may block validation work. And deployment windows may delay completed features. These interruptions fragment focus and slow progress.

Frequent context switching intensifies the problem.

In fact, frequent interruptions can erase up to 82% of productive work time. When the delivery system produces constant interruptions, such as review requests, urgent bug fixes, or pipeline failures, deep work becomes difficult to sustain.

Value stream analysis reveals these patterns through delivery data.

Once leaders and teams see where queues form or feedback slows down, they can introduce targeted improvements such as review rotation, smaller changesets, or clearer deployment policies.

For the Business

Finally, we also noticed that value stream analysis improves outcomes at the organizational level.

Faster time-to-value occurs when work moves steadily from request to production instead of waiting between stages. Shorter queues and clearer workflows allow teams to deliver improvements to customers sooner.

The cost of delay also decreases.

When delivery slows because of hidden bottlenecks, value sits idle in backlogs or unfinished work. Removing those constraints allows features and fixes to reach production more quickly.

At the same time, operational risk declines.

Continuous observation of delivery patterns reveals instability early. That allows teams to respond before issues affect production systems.

Common Mistakes in Value Stream Analysis

Value stream analysis can reveal how work moves through your delivery system, but only when applied correctly. Several common mistakes prevent teams from seeing real system behavior.

These are the issues you should watch for:

- Treating it as a workshop: Many organizations run a single mapping workshop and assume the analysis is complete. Yet delivery systems change constantly. Pull request queues grow, release coordination shifts, and dependencies evolve. Without continuous monitoring, any static map quickly stops reflecting how work actually flows.

- Optimizing local steps: Teams sometimes improve individual stages instead of the system. For example, development speed may increase while review queues expand. The result is a longer lead time, even though one stage improved.

- Measuring outputs instead of flow: Counting commits, story points, or tickets closed does not reveal how work moves through the system. Flow signals such as queues, delays, and rework show where delivery slows.

- Ignoring variability: Delivery instability usually appears as unpredictable review times, test delays, or release batching. When variability goes unmeasured, planning becomes unreliable.

- Weaponizing metrics: If metrics are used to judge individual engineers instead of diagnosing system behavior, teams quickly lose trust in them. From our experience, developers prefer metrics that track how work actually flows through the delivery system. Developer experience metrics also matter, with almost half of respondents in one study wishing their CTOs would measure burnout and deep work (focus) time.
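The "Ignoring variability" mistake above is easy to demonstrate with numbers. In the sketch below, two made-up teams have the same average lead time, but comparing the 50th and 85th percentiles shows that one is far less predictable. The `percentile` helper uses the nearest-rank method, which is one of several common definitions.

```python
def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = (pct * len(ordered) + 99) // 100  # integer ceiling of pct * n / 100
    return ordered[max(rank, 1) - 1]


# Lead times in days for two hypothetical teams (made-up data).
steady = [4, 5, 5, 6, 5, 4, 6, 5, 5, 5]
erratic = [1, 2, 2, 3, 2, 1, 14, 2, 3, 20]

for name, lead_times in (("steady", steady), ("erratic", erratic)):
    mean = sum(lead_times) / len(lead_times)
    p50 = percentile(lead_times, 50)
    p85 = percentile(lead_times, 85)
    print(name, mean, p50, p85, p85 - p50)
# steady 5.0 5 6 1
# erratic 5.0 2 14 12
```

Both teams average five days, yet a commitment based on that average would regularly be missed by the second team, whose 85th percentile is 14 days.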

When You Should Run a Value Stream Analysis

From our daily experience, these are the situations where running the analysis makes sense for your organization:

- Delivery slowing down: Lead time increases even though the development effort stays constant. Work usually accumulates in review queues, QA environments, or release coordination stages.

- Scaling teams: As organizations add teams or services, dependencies multiply. Without visibility into how work moves across teams, handoffs and coordination delays begin to expand.

- Introducing AI: AI tools change how engineers write and review code. In fact, 90% of engineering teams now use AI tools in daily work, which alters review cycles, change size, and delivery patterns.

- Frequent incidents: Recurring production issues usually signal deeper system problems. Analysis reveals where instability enters the delivery pipeline.

- Missed commitments: When planned releases slip repeatedly, delivery flow typically hides structural bottlenecks that require system-level changes.

- Continuous improvement: Even when delivery performance looks stable, periodic analysis helps teams identify emerging constraints before they become visible delays. Engineering systems evolve as codebases, teams, and tooling grow, so regular flow analysis supports ongoing optimization.

Tools for Value Stream Analysis

Different tools support value stream analysis at different levels of depth. Some help visualize workflows, others measure delivery behavior, and some guide leadership decisions.

These are the main categories you will encounter.

Diagramming Tools

Diagramming tools help you visualize how work moves through a delivery process. Teams may use them during workshops to map stages such as request intake, development, review, testing, and release.

This approach helps create a shared understanding of the system. For example, mapping a pull request workflow may reveal how code moves from development to review and then into testing environments. The diagram clarifies each step and exposes handoffs between teams.

On the downside, diagrams represent a static snapshot of how teams believe work flows. They don’t capture real-time variability and trends across real delivery cycles. Because of that limitation, diagramming tools work best as a starting point rather than a complete analysis method.

Metrics Dashboards

Metrics dashboards extend the analysis by measuring how work behaves over time. Instead of relying on assumptions, dashboards track signals such as lead time, deployment frequency, and failure rates across delivery pipelines.

These metrics show where bottlenecks occur. For example, dashboards may reveal that pull requests remain in review for several days or that testing environments frequently block deployment. That information helps teams focus on the stages that actually slow delivery.

Even so, dashboards’ main purpose is to visualize data.

Leaders still need to interpret patterns and decide which operational changes should follow.

Decision-Support Systems

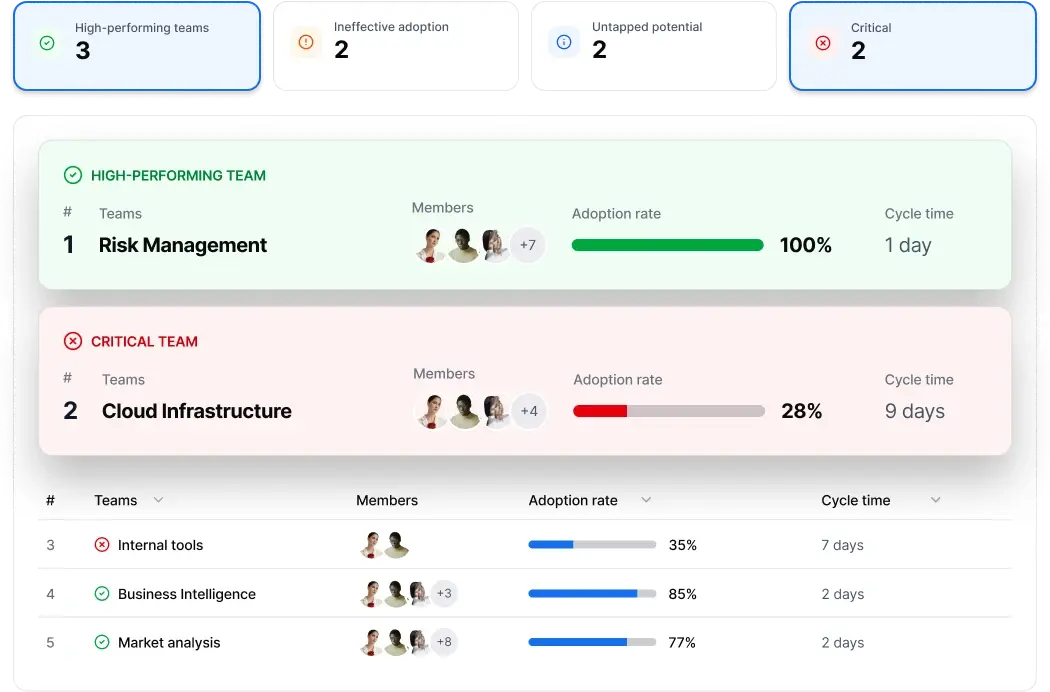

Decision-support systems go further by connecting delivery signals to leadership decisions. These platforms analyze development workflows continuously and identify patterns across repositories, pipelines, and issue tracking systems.

Next, they give you actionable insights and, in some cases, even action plans.

This approach helps leaders understand how system behavior affects their strategy and implement the right course of action.

For instance, Axify works as a decision-support platform that connects delivery telemetry across tools such as GitHub, CI/CD pipelines, and issue trackers.

The value stream mapping tool visualizes how work moves from request to production and shows queue growth, delays, and handoffs.

AI Impact shows you the before-and-after metrics following AI tool implementation. So, you can see if and how AI-assisted development influences your delivery speed, stability, and team capacity.

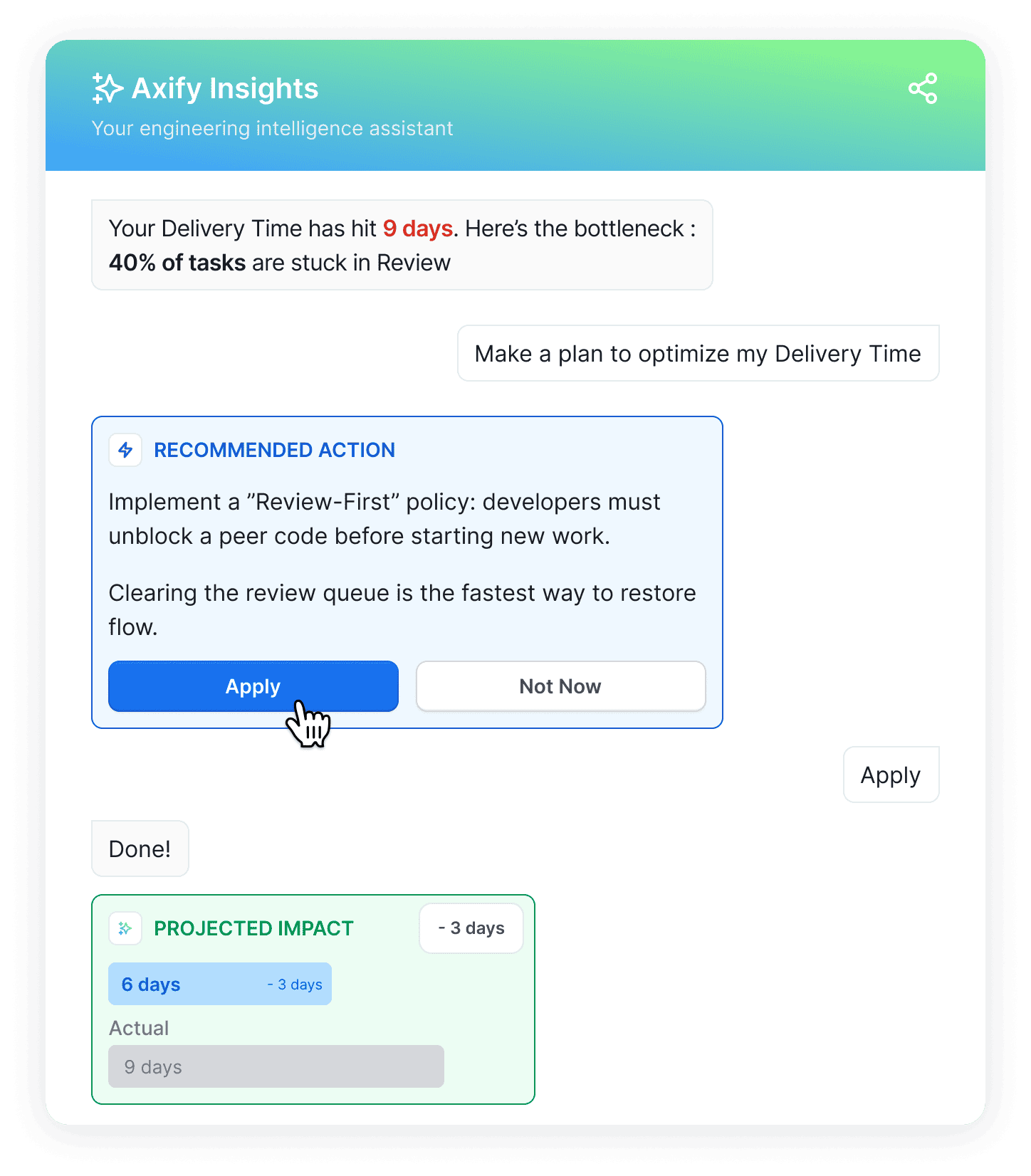

Then, Axify Intelligence analyzes those patterns and shows constraints affecting delivery. It also suggests action plans based on your delivery history that you can implement right from the platform. You can also chat with an AI assistant to understand your situation and make plans.


As a result, your analysis shifts from visibility toward continuous system insight that supports ongoing improvement.

Turn Delivery Flow Into Clear Engineering Decisions

Value stream analysis helps you understand how work actually flows through your software delivery system. Instead of focusing on isolated activities, the analysis shows where queues, delays, and coordination gaps slow delivery.

Because of that visibility, engineering leaders can prioritize system improvements that shorten lead time, stabilize releases, and improve delivery predictability. The examples in this guide show how analyzing real workflow signals leads to practical changes in review practices, testing pipelines, and release coordination.

If you want to observe these delivery patterns across your repositories and pipelines, you can schedule a demo with Axify to see how value stream mapping and AI features can support better engineering decisions.