Imagine this:

You have delivery data, but your review meetings are still slow because each team measures work differently, so you cannot compare reports.

Enter DX Core 4.

This framework provides a shared structure for how engineering metrics are measured and grouped, so delivery reviews focus on what changed and what action to take. With consistent definitions and data sources, teams can connect execution signals to business outcomes without introducing another layer of reporting.

In this article, we'll break down the framework at the definition and data-source level. You will see how it compares to other models and how to apply it without driving reporting noise or metric gaming.

Let's dive in.

What Is DX Core 4?

DX Core 4 is a measurement framework that standardizes how delivery performance is defined, compared, and reviewed across teams. It establishes shared metric definitions, clear data sources, and consistent interpretation rules, which reduces debate during planning and executive reviews.

At its core, the DX Core 4 framework groups signals into four dimensions: Speed, Effectiveness, Quality, and Impact. These dimensions act as a common structure (not a scoring system). We encourage you to use them together instead of optimizing them in isolation.

Remember: DX Core 4 is not an operating model, and it does not replace team-level diagnostics or workflow analysis. Instead, it provides a stable measurement layer that sits above existing tools and processes.

DX Core 4 was developed by Laura Tacho and Abi Noda, with input from DX advisors including Dr. Margaret-Anne Storey, Dr. Nicole Forsgren, Dr. Thomas Zimmermann, and others involved in SPACE, DORA, and DevEx research.

This leads us to our next point.

DX Core 4 Metrics List

When reviewing delivery performance across our clients’ teams, we frequently see one problem repeated:

The data exists, but it is not organized in a way that supports clear decisions.

DX Core 4 metrics address this by grouping signals into distinct dimensions, each tied to a specific type of review or planning question.

Here, we organized the metrics by dimension so it is easier to scan what each group measures and why it matters.

Speed Metrics (DX Core 4)

Speed metrics show how quickly work moves from intent to production and where flow slows down. These signals are most useful when start and end events are fixed and applied consistently across teams:

- Lead time: Measures the total time from when a stakeholder request is created until the change is running in production and usable by end users. Tracking this metric exposes queue time and handoffs that delay value, which directly affects planning accuracy and release risk.

- Deployment frequency: Tracks how frequently code reaches production. This metric validates whether shorter lead times result in actual releases or if work accumulates upstream. According to the 2025 DORA report, top-performing teams deploy multiple times per day on demand, with 16.2% reaching continuous releases and another 22.7% shipping at least hourly.

- PRs per engineer: Captures review and merge activity volume. On its own, this is an easy metric to distort, so it works best as a supporting signal alongside lead time and review latency.

- Perceived rate of delivery: Reflects how quickly delivery feels to the team, based on developer experience or pulse survey data. This signal helps identify coordination friction, unclear requirements, or excessive context switching that may not appear in raw throughput metrics.
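To make the deployment frequency signal more concrete, here is a minimal sketch that counts successful production deployments per ISO week. The record shape (`environment`, `status`, `finished_at`) is an assumption made for illustration; it is not a format defined by DX Core 4 or by any specific CI/CD tool.

```python
from collections import Counter
from datetime import datetime

# Hypothetical deployment records; field names are assumptions for illustration.
deployments = [
    {"environment": "production", "status": "success", "finished_at": datetime(2025, 3, 3, 10, 0)},
    {"environment": "production", "status": "success", "finished_at": datetime(2025, 3, 5, 15, 30)},
    {"environment": "staging", "status": "success", "finished_at": datetime(2025, 3, 5, 16, 0)},
    {"environment": "production", "status": "failed", "finished_at": datetime(2025, 3, 6, 9, 0)},
    {"environment": "production", "status": "success", "finished_at": datetime(2025, 3, 11, 11, 0)},
]


def deployments_per_week(records):
    """Count successful production deployments per ISO (year, week)."""
    counts = Counter()
    for record in records:
        if record["environment"] == "production" and record["status"] == "success":
            iso = record["finished_at"].isocalendar()
            counts[(iso[0], iso[1])] += 1
    return dict(counts)


weekly = deployments_per_week(deployments)
```

Staging and failed runs are excluded here on purpose. Whether your organization counts them is exactly the kind of rule that needs to be fixed in advance.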

Effectiveness Metrics (DX Core 4)

Effectiveness metrics focus on whether teams can deliver meaningful work without excess friction. These metrics typically combine system data with calibrated survey input:

- Time to 10th PR: Measures how long it takes a new engineer to reach sustained contribution. A longer-than-usual time to 10th PR shows onboarding gaps that affect capacity planning and hiring ROI.

- Ease of delivery (perceived): Captures how easy or difficult it feels to ship changes given current tooling and processes. A declining score usually precedes slower throughput.

- Regrettable attrition: Tracks the loss of engineers whose departure materially impacts delivery capacity or domain knowledge. When analyzed alongside tenure, role, and recent workload patterns, this metric helps identify systemic issues that may be affecting team effectiveness or sustainability. For example, losing key engineers can reduce throughput because new or reassigned team members need time to rebuild context.

- Developer Experience Index (DXI): A composite, survey-derived score built from 14 standardized experience drivers. This provides a structured way to interpret perception data without relying on one-off questions.
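As an illustration of the time to 10th PR metric from the list above, the sketch below measures the days between a start date and the tenth merged pull request. The function name, the choice of "merged" as the counting event, and the sample dates are all assumptions; your own definition may count opened or approved PRs instead.

```python
from datetime import date


def time_to_nth_pr(start_date, merged_pr_dates, n=10):
    """Return days from start_date to the n-th merged PR, or None if not reached."""
    ordered = sorted(d for d in merged_pr_dates if d >= start_date)
    if len(ordered) < n:
        return None
    return (ordered[n - 1] - start_date).days


# Hypothetical new hire: the start date and merge dates are made up.
start = date(2025, 1, 6)
merged = [date(2025, 1, 6 + 3 * i) for i in range(1, 9)] + [
    date(2025, 2, 3),
    date(2025, 2, 10),
]

days_to_tenth_pr = time_to_nth_pr(start, merged)
```

Returning `None` for engineers who have not reached the threshold keeps them out of averages until the measurement is actually complete.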

Quality Metrics (DX Core 4)

Quality metrics track whether production deployments introduce issues that affect users or operations, and how quickly teams restore normal service when something goes wrong.

These metrics make release risk and recovery performance visible, so teams can detect patterns (for example, rising rollback volume or slower incident recovery) and adjust how they build, review, test, and release changes.

- Change failure rate: Measures the percentage of production deployments that lead to a production incident, rollback, or urgent fix. A sustained increase often points to issues like incomplete testing, unclear requirements, risky change size, or inadequate review.

- Failed deployment recovery time: Tracks the time from when a deployment-related incident starts until service is restored to normal operation. This metric reflects incident response capability and system resilience, not just release quality. Longer recovery times can indicate slow detection, unclear ownership, insufficient runbooks, or hard-to-roll-back architectures.

- Perceived software quality: Captures how confident engineers and adjacent teams (for example, support or operations) feel about the stability of recent releases, typically through standardized survey questions. This is a qualitative signal that helps explain why teams may be slowing down (or why they are uneasy) even when deployment volume looks healthy.

- Operational health and security metrics: Track system strain and risk exposure that influence release safety. These metrics help teams assess whether current release pace is sustainable or whether risk is accumulating. For instance, high alert volume, growing incident backlogs, elevated error rates, or unresolved high-severity vulnerabilities suggest that new changes are likely to introduce instability.
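The change failure rate described in the list above can be sketched as a simple ratio. The three boolean flags below (`caused_incident`, `rolled_back`, `needed_hotfix`) are hypothetical field names that mirror the definition in the text; how those flags get set depends on your incident and deployment tooling.

```python
# Hypothetical production deployments; flag names are assumptions for illustration.
deployments = [
    {"id": "d1", "caused_incident": False, "rolled_back": False, "needed_hotfix": False},
    {"id": "d2", "caused_incident": False, "rolled_back": False, "needed_hotfix": False},
    {"id": "d3", "caused_incident": False, "rolled_back": True, "needed_hotfix": False},
    {"id": "d4", "caused_incident": False, "rolled_back": False, "needed_hotfix": False},
    {"id": "d5", "caused_incident": False, "rolled_back": False, "needed_hotfix": False},
    {"id": "d6", "caused_incident": True, "rolled_back": False, "needed_hotfix": True},
    {"id": "d7", "caused_incident": False, "rolled_back": False, "needed_hotfix": False},
    {"id": "d8", "caused_incident": False, "rolled_back": False, "needed_hotfix": False},
]


def change_failure_rate(records):
    """Percentage of deployments that led to an incident, rollback, or urgent fix."""
    if not records:
        return None
    failed = sum(
        1
        for d in records
        if d["caused_incident"] or d["rolled_back"] or d["needed_hotfix"]
    )
    return 100.0 * failed / len(records)


cfr = change_failure_rate(deployments)
```

A deployment that triggers both an incident and a hotfix (like `d6`) is counted once, so the rate stays a share of deployments rather than a count of failure events.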

Impact Metrics (DX Core 4)

Impact metrics connect delivery performance to business outcomes that are reviewed at the product, portfolio, or company level. These metrics are typically defined outside the engineering tooling itself, so they must use consistent definitions and reporting periods to avoid misinterpretation:

- % time on new capabilities: Estimates how much engineering effort is spent building new features versus maintaining or improving existing systems. This is usually calculated by tagging work items (for example, feature, maintenance, reliability) in the planning system and reviewing the trend over time rather than setting a fixed target. For instance, a shift from 60% new work to 35% may indicate growing maintenance load or accumulated technical debt.

- Initiative progress and ROI: Tracks whether planned initiatives reach their defined milestones and whether expected outcomes (like increased adoption, reduced support volume, or cost savings) are realized after release. This requires clearly defined start dates, completion criteria, and success measures in the planning system.

- Revenue per engineer: Divides total company revenue by the number of engineers to provide a high-level view of how engineering investment relates to business output. A 2025 benchmarking report places the median at roughly $892,000 annually. However, this number is influenced by pricing, market conditions, and company size, so it’s useful only for organization-level trend analysis and should not be used to evaluate individual teams.

- R&D as % of revenue: Shows how much of total revenue is reinvested into product and engineering work. Changes in this ratio affect hiring plans, investment capacity, and the organization’s ability to take on long-term initiatives.
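To show how % time on new capabilities might be derived from tagged work items, here is a minimal sketch. The `period`, `category`, and `effort_days` fields are assumptions, and the numbers are invented to reproduce the 60% to 35% shift used as an example above.

```python
from collections import defaultdict

# Hypothetical tagged work items; field names and values are illustrative only.
work_items = [
    {"period": "2025-Q1", "category": "feature", "effort_days": 60},
    {"period": "2025-Q1", "category": "maintenance", "effort_days": 25},
    {"period": "2025-Q1", "category": "reliability", "effort_days": 15},
    {"period": "2025-Q2", "category": "feature", "effort_days": 35},
    {"period": "2025-Q2", "category": "maintenance", "effort_days": 40},
    {"period": "2025-Q2", "category": "reliability", "effort_days": 25},
]


def new_capability_share(items, new_work_category="feature"):
    """Return the percentage of effort spent on new work, keyed by period."""
    totals = defaultdict(float)
    new_work = defaultdict(float)
    for item in items:
        totals[item["period"]] += item["effort_days"]
        if item["category"] == new_work_category:
            new_work[item["period"]] += item["effort_days"]
    # Skip periods with no recorded effort to avoid dividing by zero.
    return {
        period: round(100 * new_work[period] / totals[period], 1)
        for period in sorted(totals)
        if totals[period] > 0
    }


share_by_quarter = new_capability_share(work_items)
```

The output is only as good as the tagging discipline behind it, which is why the trend matters more than any single quarter's value.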

This list establishes how delivery outcomes can be connected to business results. Next, we’ll focus on the DX Core 4 metrics that teams can act on directly.

Which DX Core 4 Metrics Are Most Actionable?

The most actionable DX Core 4 metrics are the ones with clearly defined start and end events and a direct link to delivery outcomes. These metrics help teams decide when to release, how large changes should be, and how much delivery risk is acceptable.

High-Signal DX Core 4 Metrics

In most organizations, the clearest operational signals come from the DORA metrics and from business measures tied to shipped work.

Lead time for changes and deployment frequency show how quickly code moves from commit to production and how often value is actually released. As such, they can help you shape release planning and batch size.

- Example: If lead time for changes increases from two days to six while deployment frequency drops, release batches are likely getting larger or work is waiting in review queues. That can signal you to reduce change size or adjust review capacity.

Change failure rate and recovery time indicate how often releases cause production issues and how long it takes to restore service.

- Example: A rising failure rate combined with longer recovery times may trigger stricter release criteria, additional automated tests, or changes to rollback procedures.

Revenue-linked metrics connect delivery activity to business results by tracking outcomes associated with shipped work.

Examples include:

- Revenue generated by a product line after a feature launch

- Margin on a service after a pricing change

- ARR movement following a major release

Reviewing these trends alongside delivery risk and throughput helps leadership decide where to continue or pause investment.

Where Axify Fits

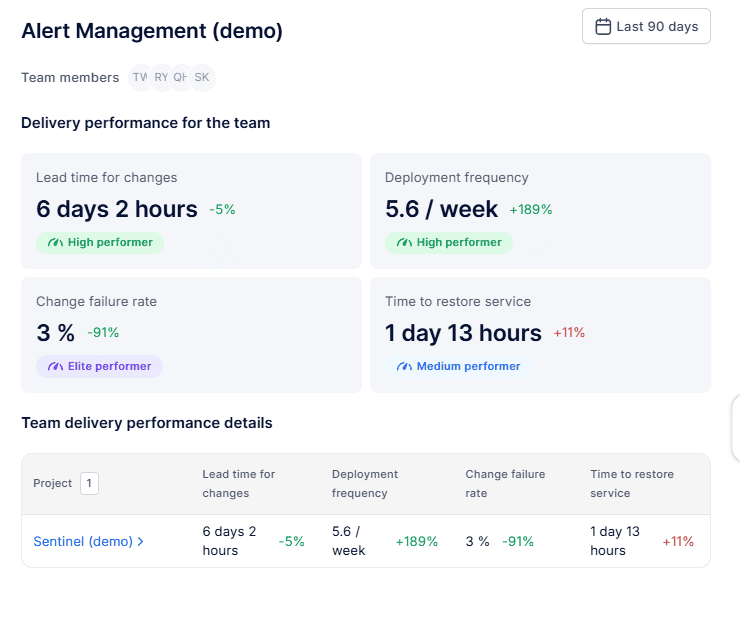

Axify collects delivery data directly from systems such as Jira, Azure DevOps, GitHub, and GitLab to calculate DORA metrics as well as flow metrics (cycle time, WIP, throughput, etc.) using consistent definitions.

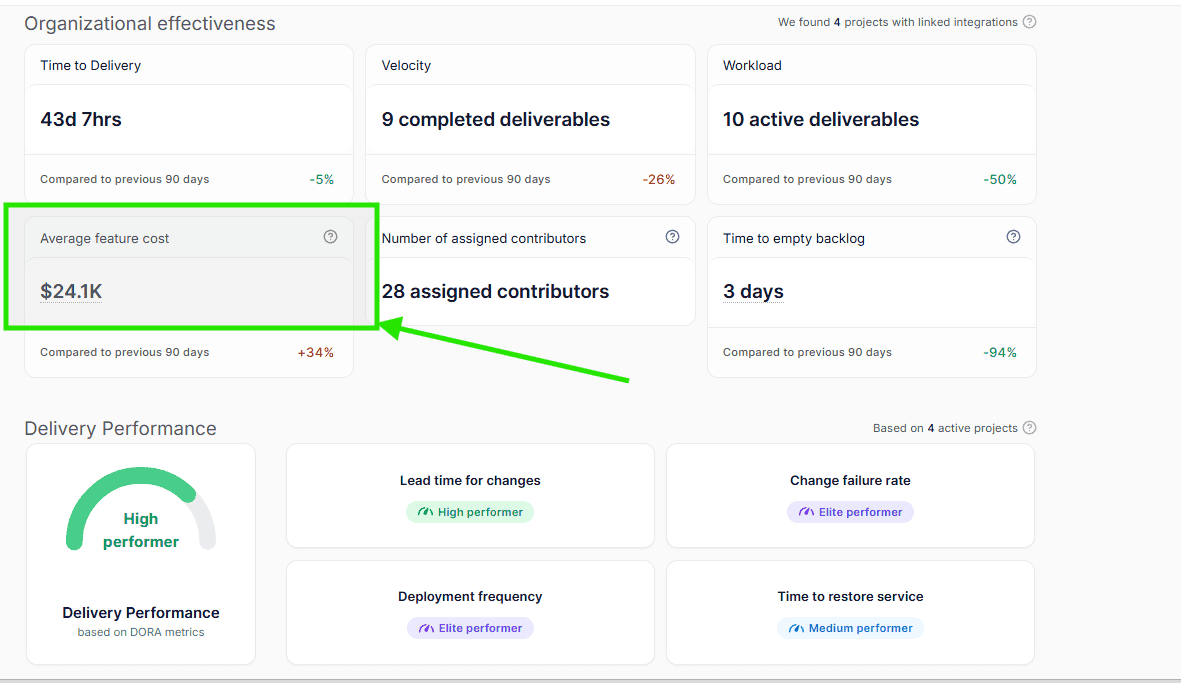

Axify also lets you configure an average engineering cost to translate delivery performance into financial outcomes. This includes feature cost changes, cost deltas, and FTE-equivalent capacity gained as flow and throughput improve during operational reviews.

You can see it in the “Organizational Effectiveness” view.

DX Core 4 Metrics That Can Become Vanity Metrics

Some DX Core 4 metrics are useful only when interpreted with strict context, while others should be reviewed exclusively at the organizational level. When these metrics are treated as direct performance targets, changes in the reported values may reflect reporting artifacts or external business factors rather than actual improvements in delivery performance.

In those cases, teams can end up optimizing numbers that do not correspond to faster, safer, or more predictable delivery.

These include:

PRs per Engineer

This metric becomes misleading when it is counted without normalizing for repository structure, expected review depth, or change size. A team can increase the number of pull requests simply by splitting one large change into several smaller ones. The reported PR count rises, but lead time, review effort, and defect risk may remain unchanged.

Revenue per Engineer

This metric does not hold meaning at the individual team level because revenue is primarily influenced by pricing strategy, sales execution, and market demand. A team may deliver features consistently while revenue fluctuates due to external factors that engineering does not control.

At the organization level, it can be reviewed as a long-term trend, but it should not be used to evaluate day-to-day delivery decisions.

Proprietary Composite Scores

Composite metrics become difficult to act on when the calculation method is not visible. If a score drops from 72 to 65 but the underlying inputs are unknown, teams cannot determine whether the change came from longer cycle times, increased incident volume, or reduced developer experience. Without traceability to specific workflow events, the metric cannot guide corrective action.
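One way to keep a composite score actionable is to publish each input's contribution next to the total. The sketch below is a hypothetical weighted score: the weights, input names, and 0-100 scales are assumptions for illustration and do not represent the DXI or any vendor's formula.

```python
# Hypothetical weights in percent; they sum to 100.
WEIGHTS = {"cycle_time": 40, "incident_volume": 30, "developer_experience": 30}


def composite_with_breakdown(inputs):
    """Return (total score, per-input contributions) for inputs on a 0-100 scale."""
    contributions = {name: WEIGHTS[name] * inputs[name] / 100 for name in WEIGHTS}
    return sum(contributions.values()), contributions


def explain_change(before, after):
    """Show how much each input moved the total between two review periods."""
    _, previous = composite_with_breakdown(before)
    _, current = composite_with_breakdown(after)
    return {name: round(current[name] - previous[name], 2) for name in WEIGHTS}


last_quarter = {"cycle_time": 75, "incident_volume": 70, "developer_experience": 70}
this_quarter = {"cycle_time": 57.5, "incident_volume": 70, "developer_experience": 70}

score_before, _ = composite_with_breakdown(last_quarter)
score_after, _ = composite_with_breakdown(this_quarter)
drivers = explain_change(last_quarter, this_quarter)
```

With the breakdown visible, a drop from 72 to 65 can be attributed entirely to the cycle time input in this example, which gives the review a starting point for corrective action.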

Remember: Without explicit guardrails (such as fixed definitions, consistent time ranges, and supporting operational metrics) these metrics absorb variation they cannot explain. Reviews then shift toward debating the reported numbers instead of identifying specific changes to release planning, staffing, or workflow. This slows decision-making rather than improving it.

DX Core 4 vs. DORA vs. SPACE vs. DevEx

Choosing between DORA, SPACE, and DevEx is rarely a tooling decision. It is usually a governance question: which metrics are reviewed, how often they are reviewed, and which audience is responsible for acting on them.

Engineering leaders often struggle to decide which model to use in recurring delivery reviews. DX Core 4 was proposed as a framework that combines operational delivery metrics with experience and business outcome signals into a single review structure.

The differences below clarify how each model is typically applied.

DX Core 4 vs. DORA

DX Core 4 and DORA share the same operational foundation based on four metrics:

- Lead time for changes

- Deployment frequency

- Change failure rate

- Time to restore service

These metrics are calculated from the same underlying events in version control, CI/CD pipelines, and incident management systems.

The distinction appears during review and decision-making.

- DORA focuses on delivery speed and service reliability. This makes it effective for monitoring release performance and identifying instability in production.

- DX Core 4 adds experience and business outcome signals along with those delivery metrics. That way, leaders can review delivery speed, delivery risk, and investment impact together. Expanding the scope in this way requires clear separation between team-level delivery metrics and organization-level outcome measures to avoid incorrect comparisons.

DX Core 4 vs. SPACE

The difference between DX Core 4 and SPACE is primarily about measurement depth versus comparability across teams.

- SPACE includes signals related to collaboration, communication, satisfaction, and cognitive load. These inputs help explain why work slows down even when system metrics appear stable.

- DX Core 4 intentionally limits the number of experience signals so that results can be compared across teams with different architectures, repositories, and workflows.

Therefore:

- SPACE is typically more useful when diagnosing friction within a specific team.

- DX Core 4 is more useful when running standardized delivery reviews across multiple teams or business units.

DX Core 4 vs. DevEx (Developer Experience)

The difference between DX Core 4 and DevEx frameworks is mainly scope.

- DevEx approaches analyze sources of friction such as tooling reliability, environment setup time, interruptions, and context switching. That level of detail helps teams identify specific workflow improvements.

- DX Core 4 summarizes performance into a smaller set of comparable metrics that can be reviewed at the portfolio or executive level. This makes trend analysis easier but does not replace deeper diagnostic work.

In practice:

- DX Core 4 can surface where delivery performance is degrading.

- DevEx analysis is used to identify the specific causes and corrective actions.

How to Implement DX Core 4

Implementing DX Core 4 is less about adding new metrics and more about locking down definitions, data paths, and review rules. Here, we'll break down implementation into concrete steps, from sourcing data to avoiding common failure modes that reduce trust.

Data Sources Needed for DX Core 4

DX Core 4 uses data that already exists in delivery systems. To keep results comparable across teams, each system must record events with reliable timestamps and ownership.

Typical data sources include:

- Git provider (GitHub, GitLab, Bitbucket): Used to capture commit, pull request, merge, and repository events. These timestamps define when work starts and when it is integrated.

- Issue tracker (Jira, Azure Boards, Shortcut): Provides the original request, scope, and initiative context so changes can be tied back to planned work.

- CI/CD systems (GitHub Actions, CircleCI, GitLab CI): Record build, test, and deployment events, including whether a deployment succeeded or failed.

- Incident and observability tools (PagerDuty, Datadog, similar platforms): Identify when production incidents occur and when service is restored.

- Recurring surveys: Collect standardized feedback on delivery friction, release confidence, and developer experience using the same questions each cycle.

- HR and finance systems: Provide organization-level data such as attrition rates or revenue trends. These inputs are typically reviewed at the organization or product-line level rather than by individual teams.

Do You Need to Track Every DX Core 4 Metric?

No.

DX Core 4 is a structured framework, not a requirement to instrument every metric at once. The value comes from selecting a small, balanced set of metrics that answer the specific question your team is trying to solve.

Start with the review goal to establish the right metrics to track.

If the problem is delivery speed, focus on:

- Lead time

- Deployment frequency

- Review or PR cycle time

If the concern is release risk, prioritize:

- Change failure rate

- Recovery time

- Incident trends

If leadership questions onboarding or sustainability, begin with:

- Time to 10th PR

- Regrettable attrition

- Developer Experience Index

Impact metrics are typically added once delivery and quality signals are stable, since they require consistent definitions and longer reporting windows.

The principle is balance. At minimum, teams should track:

- One speed metric

- One quality metric

- One effectiveness or experience signal

That combination prevents over-optimizing a single dimension (for example, pushing speed while degrading reliability).
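The balance rule above can be checked mechanically. This is a small sketch, assuming a hand-maintained mapping from metric names to dimensions; the identifiers are hypothetical labels for the metrics listed earlier in this article.

```python
# Hypothetical mapping of metric identifiers to DX Core 4 dimensions.
DIMENSIONS = {
    "lead_time": "speed",
    "deployment_frequency": "speed",
    "change_failure_rate": "quality",
    "recovery_time": "quality",
    "time_to_10th_pr": "effectiveness",
    "regrettable_attrition": "effectiveness",
    "developer_experience_index": "effectiveness",
    "pct_time_on_new_capabilities": "impact",
}

# Minimum coverage described in the text: speed, quality, effectiveness.
REQUIRED = ["speed", "quality", "effectiveness"]


def missing_dimensions(tracked_metrics):
    """Return required dimensions that no tracked metric covers."""
    covered = {DIMENSIONS[m] for m in tracked_metrics if m in DIMENSIONS}
    return [d for d in REQUIRED if d not in covered]


speed_only = missing_dimensions(["lead_time", "deployment_frequency"])
balanced = missing_dimensions(["lead_time", "change_failure_rate", "time_to_10th_pr"])
```

A non-empty result is a prompt to add one counterbalancing metric before the current set becomes a target.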

As definitions mature and trust increases, additional metrics can be layered in without destabilizing review processes.

Remember: If you cannot explain why you’re tracking a metric, don’t add it yet.

DX Core 4 Setup Checklist

Before reviewing any metrics, teams must define how each metric is calculated and ensure every group uses the same rules. If these definitions are left to individual tools or teams, reports will not match during executive reviews.

The required setup decisions include:

- Define start and end points for lead time: For example, measure from the first commit associated with a tracked work item to the first successful production deployment.

- Standardize what counts as a deployment and a failure: Decide in advance whether partial rollouts, configuration-only releases, or feature flag activations are included. Also define what events qualify as a failed deployment (for example, rollback or incident creation).

- Decide the measurement level: Use team-level metrics for execution reviews and organization-level rollups for portfolio planning.

- Set survey cadence and governance: Define how often surveys run, which roles respond, and how results are interpreted so scores remain comparable over time.

- Document metric definitions: Publish the exact formulas, event sources, and aggregation windows so reviewers do not need to debate definitions during planning meetings.
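To illustrate what a documented lead time definition can look like in practice, the sketch below implements the example rule from the checklist: first commit associated with a work item to first successful production deployment. The data shapes and field names are assumptions, not the output of any particular Git or CI/CD provider.

```python
from datetime import datetime


def lead_time_hours(commit_times, deployments):
    """Hours from the first commit to the first successful production
    deployment at or after that commit; None if either event is missing."""
    if not commit_times:
        return None
    first_commit = min(commit_times)
    candidates = [
        d["finished_at"]
        for d in deployments
        if d["environment"] == "production"
        and d["status"] == "success"
        and d["finished_at"] >= first_commit
    ]
    if not candidates:
        return None
    return (min(candidates) - first_commit).total_seconds() / 3600


# Hypothetical events linked to a single tracked work item.
commits = [datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 4, 14, 0)]
deploys = [
    {"environment": "staging", "status": "success", "finished_at": datetime(2025, 3, 4, 15, 0)},
    {"environment": "production", "status": "failed", "finished_at": datetime(2025, 3, 4, 16, 0)},
    {"environment": "production", "status": "success", "finished_at": datetime(2025, 3, 5, 9, 0)},
]

hours = lead_time_hours(commits, deploys)
```

Writing the rule as code makes the boundary decisions explicit: staging deployments and failed production runs do not stop the clock in this version.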

Tools for DX Core 4 Measurement

DX Core 4 measurement depends on connecting operational data sources into a single, traceable reporting layer. A suitable tool must capture events from code, planning, deployment, and incident systems, apply consistent calculation rules, and preserve historical definitions so trends remain comparable.

What to Look for in a DX Core 4 Tool

1) A DX Core 4 tool should make metric definitions visible and verifiable. For each reported value, reviewers must be able to see:

- which system produced the underlying event

- which timestamps were used

- how the calculation was performed

Without that traceability, teams cannot confirm whether changes in a metric reflect real workflow changes or differences in data collection.

2) Visibility across workflow stages is also necessary.

For example, if lead time increases from two days to five, the tool should show how much time was spent in coding, review, testing, and deployment queues. Total duration alone does not indicate where intervention is required.
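A stage breakdown like the one described above can be sketched from a handful of timestamps per change. The stage names and timestamp fields are assumptions chosen to match the coding, review, testing, and deployment queue split mentioned in the text.

```python
from datetime import datetime

# Each stage is (name, start event, end event); event names are hypothetical.
STAGES = [
    ("coding", "first_commit", "pr_opened"),
    ("review", "pr_opened", "pr_approved"),
    ("testing", "pr_approved", "tests_passed"),
    ("deploy_queue", "tests_passed", "deployed"),
]


def stage_durations_hours(change):
    """Return hours spent in each stage for one change."""
    return {
        name: (change[end] - change[start]).total_seconds() / 3600
        for name, start, end in STAGES
    }


# A made-up change with a five-day (120-hour) lead time.
change = {
    "first_commit": datetime(2025, 3, 3, 9, 0),
    "pr_opened": datetime(2025, 3, 4, 9, 0),
    "pr_approved": datetime(2025, 3, 7, 9, 0),
    "tests_passed": datetime(2025, 3, 7, 15, 0),
    "deployed": datetime(2025, 3, 8, 9, 0),
}

durations = stage_durations_hours(change)
slowest_stage = max(durations, key=durations.get)
```

In this invented example, 72 of the 120 hours sit in review, so the intervention would target review capacity rather than coding or deployment.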

3) Normalization rules must also be explicit.

When comparing teams, the tool should account for factors such as team size, repository structure, and service ownership. Without those adjustments, differences in architecture or staffing can appear as performance gaps.

4) Changes to metric definitions must be recorded and controlled.

If the definition of “deployment” or “failure” is modified, the tool should log when the change occurred and which reports are affected. This prevents historical comparisons from becoming invalid.
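One possible way to record definition changes is an append-only log with effective dates. The sketch below is illustrative: the `MetricDefinition` class, the log entries, and the helper names are hypothetical and do not describe how any specific tool stores definitions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MetricDefinition:
    metric: str
    version: int
    effective_from: date
    rule: str


# Hypothetical change log for the "deployment" definition.
DEFINITION_LOG = [
    MetricDefinition("deployment", 1, date(2025, 1, 1),
                     "Any successful production pipeline run"),
    MetricDefinition("deployment", 2, date(2025, 4, 1),
                     "Successful production pipeline runs, excluding config-only releases"),
]


def definition_on(metric, day, log=DEFINITION_LOG):
    """Return the definition in force for `metric` on `day`, or None."""
    applicable = [d for d in log if d.metric == metric and d.effective_from <= day]
    return max(applicable, key=lambda d: d.effective_from) if applicable else None


def spans_definition_change(metric, start, end, log=DEFINITION_LOG):
    """True if a report window from `start` to `end` mixes two definitions."""
    return definition_on(metric, start, log) != definition_on(metric, end, log)


mixed_window = spans_definition_change("deployment", date(2025, 3, 1), date(2025, 4, 30))
```

Flagging report windows that straddle a definition change lets reviewers annotate or split the affected trend instead of comparing incompatible numbers.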

5) The metrics should be linked directly to the workflow steps teams can influence.

Examples include review time, deployment frequency, or incident recovery time. If metrics are presented only as high-level summaries, review meetings focus on describing trends instead of deciding which process to adjust.

Tooling Options

- Engineering intelligence platforms: These platforms collect delivery, quality, and survey data from multiple systems and apply consistent calculation rules. Because data is centralized, teams spend less time reconciling reports during review meetings.

- DevOps analytics platforms: These tools measure delivery speed and reliability effectively using data from version control, build, and deployment systems. However, you may need additional integrations or manual analysis to track perception and business outcome signals.

- Survey and business intelligence stacks: General analytics tools allow flexible reporting across many data sources. The trade-off is ongoing maintenance. Custom pipelines must be monitored, and metric definitions must be manually updated when workflows change, which increases the risk that reports diverge across teams.

DX Core 4 Implementation Traps

Most DX Core 4 implementations lose credibility because teams apply different definitions or rely on incomplete data, not because the required data is unavailable. The same issues appear repeatedly:

- Inconsistent definitions across teams: If teams measure lead time or define deployments differently, the reported numbers cannot be compared. For example, one team may start lead time at the first commit, while another starts when a ticket moves to “In Progress.” Industry surveys show that 67% of engineering leaders struggle to select and standardize performance metrics, which often results in conflicting reports during review meetings.

- Tooling gaps that require manual reporting: When data must be copied between systems or combined in spreadsheets, errors accumulate and teams stop trusting the numbers. In surveys of large engineering organizations, 75% of practitioners report losing 6-15 hours each week reconciling data across multiple delivery tools.

- Survey fatigue and low-quality responses: If perception surveys are sent too frequently or do not lead to visible changes, response rates decline and answers become less reliable. Over time, the data no longer reflects actual developer experience.

- Executive-level metrics that teams cannot influence: Some business metrics, such as revenue or margin, are influenced by engineering delivery but also depend on pricing, sales execution, and customer purchasing cycles. For example, a new capability may be released in one quarter, while the associated revenue is recognized later after contracts are signed or renewed. Because revenue is affected by multiple teams and external factors, an increase or decrease does not indicate whether the engineering team delivered faster, safer, or more predictably.

When definitions are consistent and data sources are traceable, DX Core 4 provides a stable way to review delivery performance over time. If definitions vary or data is incomplete, review meetings tend to focus on reconciling reports rather than deciding what to change next.

That brings us to the next question:

Is DX Core 4 Worth It?

DX Core 4 is worth adopting when a consistent, decision-ready measurement layer is missing across teams. The framework pays off when delivery data already exists but cannot be compared or trusted at the organizational level. In that case, standard definitions and fixed review rules reduce debate and shorten planning cycles.

When DX Core 4 Works Well

DX Core 4 works best when DORA practices are already stable and instrumentation is reliable, so that your DORA metrics are captured using consistent timestamps.

The framework becomes effective when these elements are documented and applied consistently:

- The exact start and end events used for lead time for changes and mean time to recovery

- What counts as a deployment

- What qualifies as a failed change

- Which level of aggregation is used (team, product line, or company)

When these rules remain stable, reports stay comparable as more teams are added. Review meetings can then focus on deciding which initiatives to fund, where delivery risk is increasing, and where throughput is improving.

DX Core 4 is particularly useful when leadership needs a small, consistent set of indicators that show delivery speed, reliability, and business outcomes together without re-analyzing raw data every review cycle.

When DX Core 4 Is Not the Right First Step

DX Core 4 is less effective when:

- The immediate goal is to diagnose why work is slow or unstable. Because the framework summarizes performance into a limited set of metrics, it does not provide enough detail to identify specific causes such as environment setup delays, coordination overhead, or unclear ownership.

- These metrics are used to evaluate individual engineers or rank teams. In that situation, people may adjust how work is reported (such as splitting changes or delaying deployments) to protect their results rather than improve the workflow.

- You don’t have consistent instrumentation. If teams use different branching strategies, deployment paths, or incident definitions, aggregated reports cannot be compared directly.

In those cases, the first step should be to fix the issues we outlined. Applying the framework before those foundations are in place will create conflicting reports instead of clearer decisions.

How to Use DX Core 4 Without Hurting Developer Experience

DX Core 4 metrics should be reviewed in a way that improves delivery decisions without pressuring teams to change how they report work.

The practices below address the most common misuse patterns:

- Do not use Core 4 as a ranking system: Comparing teams by certain metrics can push them to adjust how work is recorded instead of improving delivery reliability. For example, a team might split one change into multiple pull requests to appear more active without reducing cycle time or defect risk. When comparisons are required, review results at the portfolio or company level where differences in system complexity and service ownership are documented.

- Review trends instead of single measurements: A single weekly value for change failure rate or deployment frequency does not show whether performance is improving or declining. Reviewing the same metric over several weeks (for example, a rolling six-week trend) makes it easier to see sustained shifts such as gradually increasing incident rates.

- Pair metric changes with team-provided context: Metrics show what changed, but not the operational reason. Each review should include short notes from the responsible team describing events that influenced the data. For example, a temporary increase in lead time for changes may coincide with a planned database migration or a large refactoring effort.

- Publish a written policy that defines how metrics are used: The organization should document which metrics guide planning decisions, which are used to monitor delivery risk, and which are excluded from individual or team performance evaluation. For instance, change failure rate may trigger reliability work, while revenue per engineer is reviewed only at the company level for long-term investment discussions.

- Use metric trends to trigger specific corrective actions: When change failure rate increases or recovery time lengthens, the response should be an explicit action such as expanding automated test coverage, adjusting release size, or allocating time for incident reduction work. Acting on early signals typically prevents larger production disruptions later. In fact, problems caught early through feedback loops cost up to 100x less to fix than issues found later in production.
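The rolling six-week trend mentioned in the list above can be computed with a trailing mean. This is a minimal sketch using invented weekly change failure rate values; the window length and the choice of a simple mean are assumptions you can adjust.

```python
def rolling_mean(values, window=6):
    """Trailing mean over `window` points; None until enough history exists."""
    result = []
    for i in range(len(values)):
        if i + 1 < window:
            result.append(None)
        else:
            chunk = values[i + 1 - window : i + 1]
            result.append(sum(chunk) / window)
    return result


# Hypothetical weekly change failure rate, in percent.
weekly_cfr = [10, 12, 8, 11, 9, 10, 14, 15, 13, 16]
trend = rolling_mean(weekly_cfr)
```

Week-to-week values in this sample bounce between 8 and 16, while the rolling mean rises steadily once six weeks of history exist. That sustained rise is the signal worth acting on.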

Axify’s Approach to Engineering Productivity

DX Core 4 defines how engineering performance should be reviewed across delivery speed, reliability, effectiveness, and business impact. Implementing that framework consistently is most effectively achieved with a Software Engineering Intelligence (SEI) platform that can unify workflow data under shared definitions.

Axify is an SEI platform that operationalizes DX Core 4 using recorded workflow events and consistent calculation rules.

Rather than introducing a new set of delivery metrics, Axify connects planning, development, deployment, and incident data so the same delivery signals can be reviewed across teams and over time.

Here’s how:

Axify Turns DX Core 4 Dimensions into Operational Signals

DX Core 4 groups performance into four categories, but those categories depend on reliable event data to remain comparable.

Axify calculates the underlying delivery metrics directly from recorded workflow events:

- Lead time for changes is measured from the first associated commit through successful production deployment.

- Deployment frequency is derived from production deployment events.

- Change failure rate is based on deployments followed by incident creation or rollback.

- Time to restore service is measured using incident open and resolution timestamps.

Because each metric uses consistent event boundaries, changes in delivery speed can be reviewed alongside stability and reliability without reconciling definitions between teams.
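As a generic illustration of measuring time to restore service from incident timestamps, consider the sketch below. It is not Axify's implementation; the `opened_at` and `resolved_at` fields, the sample incidents, and the use of a median are assumptions made for the example.

```python
from datetime import datetime
from statistics import median

# Hypothetical incident records; the third one is still open.
incidents = [
    {"opened_at": datetime(2025, 3, 3, 10, 0), "resolved_at": datetime(2025, 3, 3, 11, 30)},
    {"opened_at": datetime(2025, 3, 10, 22, 0), "resolved_at": datetime(2025, 3, 11, 2, 0)},
    {"opened_at": datetime(2025, 3, 18, 14, 0), "resolved_at": None},
    {"opened_at": datetime(2025, 3, 20, 8, 0), "resolved_at": datetime(2025, 3, 20, 8, 45)},
]


def restore_times_hours(records):
    """Hours from open to resolution for every resolved incident."""
    return [
        (r["resolved_at"] - r["opened_at"]).total_seconds() / 3600
        for r in records
        if r["resolved_at"] is not None
    ]


durations = restore_times_hours(incidents)
median_restore_hours = median(durations) if durations else None
```

A median is used here because a single long outage can dominate a mean. Whichever aggregate you choose, it should be part of the published definition.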

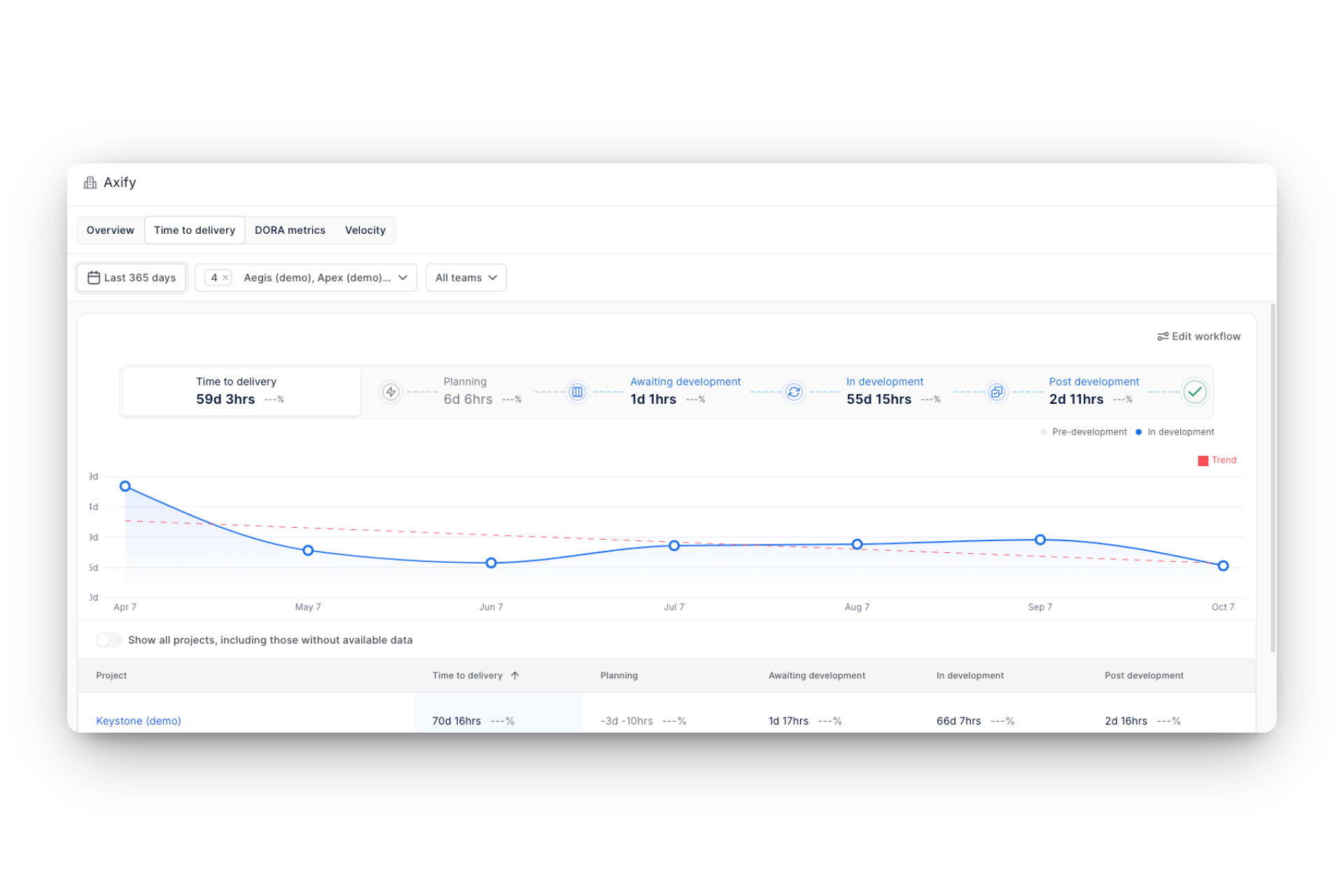

Axify Extends DX Core 4 with Execution-Level Flow Signals

DX Core 4 identifies what should be measured, but teams also need to see where work is slowing inside the delivery process.

Axify adds flow analysis that explains changes in delivery metrics:

- Cycle time distribution across workflow stages

- Aging work in progress at the issue level

- Throughput trends over defined periods

These flow signals are calculated from the same underlying workflow events used to compute the DORA metrics, such as commits, pull requests, deployments, and incident records. Because both sets of metrics rely on identical source events, teams can trace problematic changes in certain metrics back to specific stages in the delivery process without reconciling separate data models.

Moreover, the Value Stream Mapping feature in Axify shows you those stages from request to release, making it clear where work is waiting (e.g., extended review queues, delayed approvals, or testing bottlenecks).

Instead of seeing only that lead time increased, you can see which stage caused the increase.

Historical comparisons then confirm whether process adjustments reduce those delays over time.
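A period-over-period comparison of stage durations can be sketched in a few lines of Python. The stage names and average durations below are invented for illustration, and `largest_increase` is a hypothetical helper, not a feature of any specific tool.

```python
def stage_deltas(before, after):
    """Change in average days per stage between two review periods."""
    stages = set(before) | set(after)
    return {s: after.get(s, 0.0) - before.get(s, 0.0) for s in stages}


def largest_increase(before, after):
    """Stage whose average duration grew the most, with the size of the increase."""
    deltas = stage_deltas(before, after)
    stage = max(deltas, key=deltas.get)
    return stage, deltas[stage]


# Invented average days per stage for two consecutive periods.
previous_period = {"development": 2.0, "review": 1.0, "testing": 1.5, "deploy": 0.5}
current_period = {"development": 2.0, "review": 3.5, "testing": 1.5, "deploy": 0.5}

print(sum(previous_period.values()), "->", sum(current_period.values()))
print(largest_increase(previous_period, current_period))
```

In this made-up case, total time rises from 5.0 to 7.5 days, and the whole increase sits in review. Rerunning the same comparison after a process change shows whether the delay shrank.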

Axify Uses Context-Aware Intelligence to Explain Metric Changes

Axify Intelligence analyzes delivery history and recent workflow activity to highlight where constraints are emerging and where changes will have the greatest impact on delivery performance.

Instead of generating generic recommendations, Axify Intelligence:

- Operates on the organization’s internal delivery events.

- Produces an executive-level summary that highlights recent changes in performance.

- Identifies which constraints are most affecting delivery speed or reliability.

- Proposes concrete next steps teams can implement to address those constraints.

- Allows deeper investigation through dashboards and a conversational interface.

Teams can use it to:

- Identify where work is waiting; for example, extended pull request review time or growing queues before deployment

- Understand which constraint caused a recent change in lead time for changes or throughput

- Evaluate the likely effect of adjustments such as changing ownership, sequencing work differently, or modifying review policies

- Build an action plan directly from surfaced insights

- Implement the recommended actions right from the platform

Because the insights are tied directly to recorded workflow events, each recommendation can be traced back to the commits, pull requests, deployments, or incidents that influenced the metric.

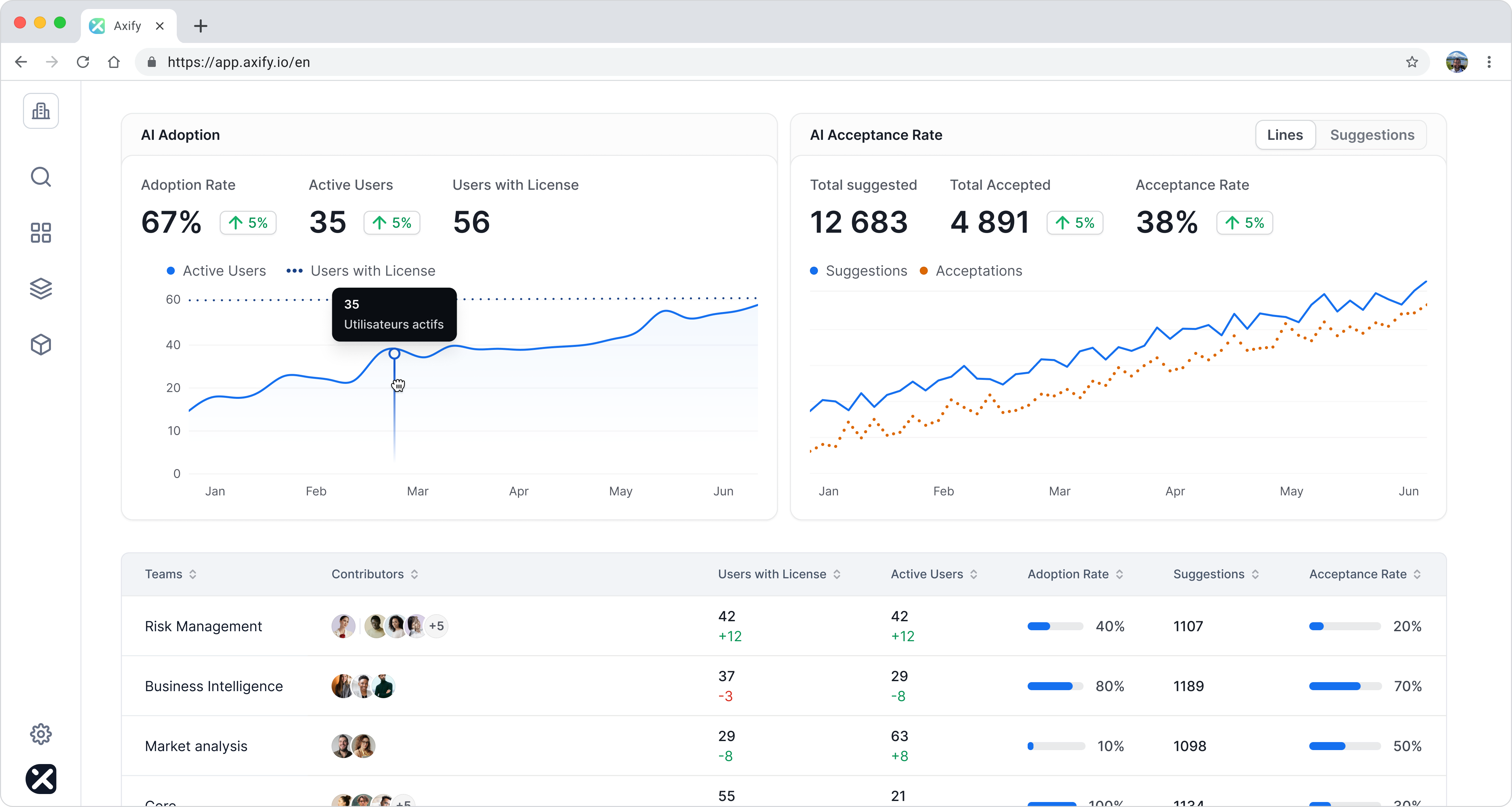

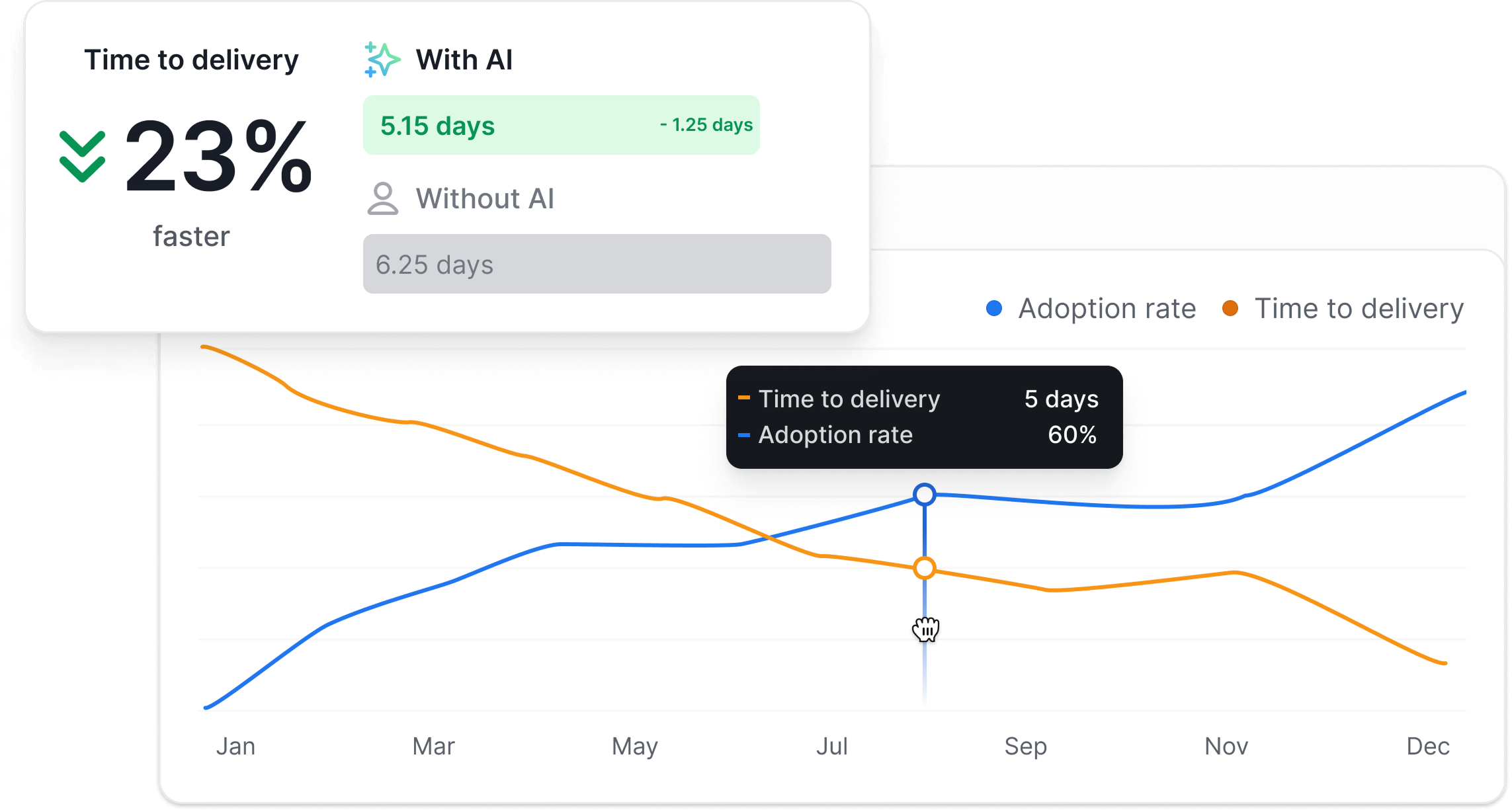

Axify Measures the Business Impact of AI Adoption

Axify’s AI Performance Comparison feature allows organizations to evaluate how AI tools affect delivery outcomes using observable metrics.

The current components include:

- AI adoption tracking: Measures how many licensed users actively use AI development tools and how frequently those tools are used. Adoption can be filtered by team or project, and individual usage patterns can be reviewed across tools such as GitHub Copilot or Claude Code.

- AI acceptance rate: Calculates the proportion of AI-generated suggestions that developers accept. This helps you distinguish between tools that are enabled but rarely trusted and those that meaningfully contribute to development work.

- Before-and-after delivery metrics: Compares delivery performance before and after AI adoption, including lead time for changes, deployment frequency, and reliability indicators. This allows teams to determine whether AI usage correlates with measurable improvements or increased rework.

Together, these views provide a data-driven way to evaluate whether AI tools are improving delivery speed and stability in practice, rather than relying on anecdotal feedback.
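The arithmetic behind these three views is simple, as the Python sketch below suggests. It is only an illustration: the function names, the use of the median, and every number are assumptions, and a before-and-after difference shows correlation, not proof that the AI tool caused the change.

```python
from statistics import median


def _ratio(numerator, denominator):
    return numerator / denominator if denominator else 0.0


def adoption_rate(active_users, licensed_users):
    """Share of licensed users who actively use the AI tool."""
    return _ratio(active_users, licensed_users)


def acceptance_rate(accepted_suggestions, shown_suggestions):
    """Share of AI-generated suggestions that developers accepted."""
    return _ratio(accepted_suggestions, shown_suggestions)


def relative_change(before, after):
    """Relative change in the median of a metric between two periods."""
    baseline = median(before)
    return _ratio(median(after) - baseline, baseline)


# Invented sample data: lead time for changes in hours, per deployment.
lead_time_before = [40, 44, 36, 40]
lead_time_after = [30, 34, 28, 32]

print("adoption:", adoption_rate(active_users=30, licensed_users=40))
print("acceptance:", acceptance_rate(accepted_suggestions=600, shown_suggestions=2000))
print("lead time change:", relative_change(lead_time_before, lead_time_after))
```

A negative lead time change means delivery got faster after adoption. Reviewing it next to change failure rate helps separate a real improvement from speed gained at the cost of rework.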

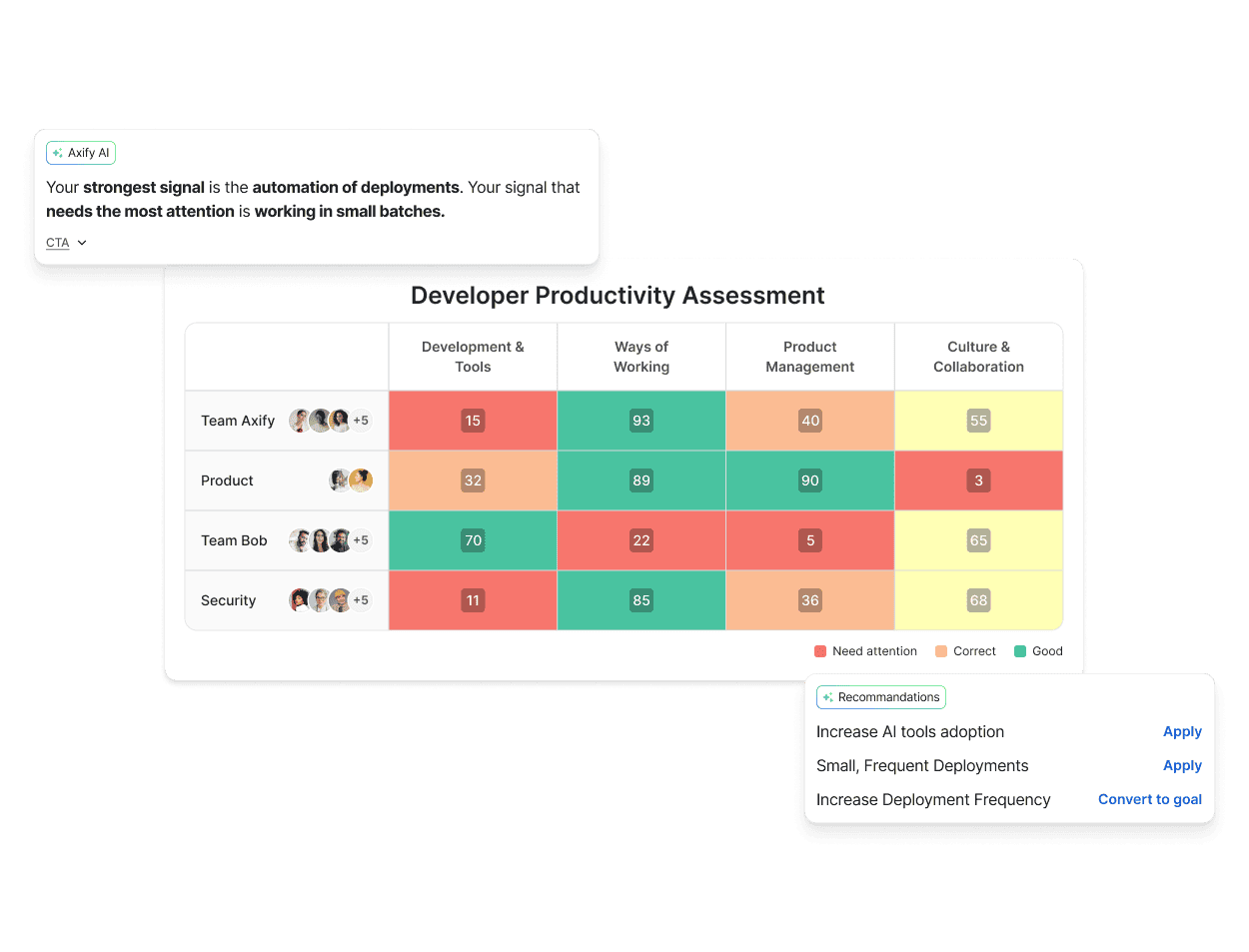

Axify Establishes a Baseline with the Developer Productivity Assessment

Before improvements can be measured, teams need a consistent starting point.

The Developer Productivity Assessment provides a structured evaluation of how teams deliver software across four operational pillars:

- Development and tooling

- Ways of working

- Product management alignment

- Culture and collaboration

The assessment combines system data with a short questionnaire. The resulting report highlights strengths, identifies constraints, and tracks changes over time using the same measurement criteria.

This baseline helps organizations:

- Compare teams using standardized indicators

- Target improvement initiatives where they will have the greatest effect

- Monitor whether process changes produce measurable gains in delivery performance

Conclusion

DX Core 4 gives engineering leaders a practical way to review how work actually moves from idea to production and how changes in that flow affect reliability, cost, and outcomes.

Axify builds on that structure by turning everyday delivery activity into a clear view of where work slows down, where risk is increasing, and which improvements are actually making a difference. With flow analysis, AI-driven insights, and side-by-side comparisons of new tools like AI coding assistants, teams can see what is changing in their delivery process and decide what to adjust next.

If you want to understand how your teams are performing today (and where the biggest gains are likely to be found), book a demo with us and review your delivery data together with a product specialist.

FAQs

What is DX Core 4?

DX Core 4 is a measurement framework that groups engineering performance into four dimensions: Speed, Effectiveness, Quality, and Impact. It provides shared definitions for how delivery performance is reviewed across teams so results can be compared using the same calculation rules.

What are the DX Core 4 metrics?

DX Core 4 includes both operational and perception-based signals. Common examples include:

- Lead time for changes

- Deployment frequency

- Change failure rate

- Time to restore service

- Time to tenth pull request (onboarding contribution speed)

- Perceived ease of delivery

- Regrettable attrition

- Business outcome indicators such as initiative progress or revenue trends

These metrics are intended to be reviewed together rather than optimized individually.

Is DX Core 4 the same as DORA?

No. DX Core 4 includes the four DORA metrics as its delivery foundation but extends them with additional signals related to developer experience, effectiveness, and business outcomes. DORA focuses on speed and reliability, while DX Core 4 provides a broader review structure.

How do you measure developer productivity with DX Core 4?

Developer productivity is evaluated by reviewing multiple indicators together, such as lead time for changes, throughput, reliability, and developer experience signals. Instead of relying on a single output metric, DX Core 4 looks at how consistently teams deliver working software and how much friction exists in the workflow.

Which DX Core 4 metrics are vanity metrics?

Metrics such as pull requests per engineer, revenue per engineer, or opaque composite scores can become misleading if used as performance targets without context. These values can change because of reporting practices or external business factors rather than improvements in delivery performance.

Can you implement DX Core 4 without a vendor tool?

Yes. DX Core 4 can be implemented using existing systems such as version control, issue tracking, deployment pipelines, and incident management tools, as long as metric definitions and data sources are standardized. However, maintaining consistent calculations across teams usually requires automation to avoid manual reporting and definition drift.
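One lightweight way to guard against definition drift is to keep metric definitions in a shared, versioned file and check each team's setup against it. The Python sketch below assumes a hypothetical `MetricDefinition` structure and invented event and source names. It is one possible approach, not a prescribed part of DX Core 4.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MetricDefinition:  # hypothetical structure
    start_event: str
    end_event: str
    source: str
    aggregation: str


# Shared definitions every team is expected to use (names are illustrative).
CANONICAL = {
    "lead_time_for_changes": MetricDefinition(
        "first_commit", "production_deploy", "version_control+pipeline", "median"
    ),
    "time_to_restore_service": MetricDefinition(
        "incident_opened", "incident_resolved", "incident_management", "median"
    ),
}


def find_drift(team_definitions):
    """Names of metrics a team defines differently from, or is missing versus, the shared set."""
    return sorted(
        name for name, canonical in CANONICAL.items()
        if team_definitions.get(name) != canonical
    )


# A team that stops the lead time clock at merge instead of production deployment.
team_a = dict(CANONICAL)
team_a["lead_time_for_changes"] = replace(
    CANONICAL["lead_time_for_changes"], end_event="pr_merged"
)

print(find_drift(team_a))
```

Running a check like this in CI, or before each reporting cycle, surfaces mismatched boundaries before they reach a review meeting.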