When delivery outcomes are hard to explain, the problem is rarely a lack of data. It’s the gap between engineering metrics and actionable diagnosis. BlueOptima offers detailed activity and output metrics, but teams often struggle to connect those numbers to specific execution problems.

That gap is why teams evaluate BlueOptima alternatives. The real test is whether a platform can link PR behavior, CI throughput, and work-in-progress to identifiable constraints in the delivery flow, such as review latency, environment contention, or rework after failed releases.

The same issue shows up in reliability reviews. DORA metrics summarize outcomes, but they don’t explain where delays or failures originate. Without visibility into the underlying workflow, teams are left debating numbers instead of fixing bottlenecks.

Below, we compare 10 alternatives to BlueOptima based on how well they help engineering leaders understand and change execution.

| Tool | Best for | Focus area | Metrics & flow coverage | AI impact measurement | Integrations | Decision support | Typical pricing tier |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Axify | Leaders improving delivery execution | End-to-end delivery flow | Full SDLC flow, VSM, DORA | Axify Intelligence: AI-powered insights using user context data | GitHub, GitLab, Jira, Bitbucket, and Azure DevOps. AI tools: GitHub Copilot, Claude Code, Cursor, and LiteLLM | Root-cause analysis, initiative comparisons | Free trial and paid plans starting from $19 per contributor/month |
| Jellyfish | Execs linking engineering to spend | Investment & portfolio view | High-level delivery + planning | Yes, AI adoption tracking | Dev tools + finance + HR | Scenario planning, cost tradeoffs | Custom enterprise pricing |
| Swarmia | Teams focused on healthy delivery | Continuous improvement | Flow, DORA, SPACE | Yes, AI tool impact | Git, Jira, surveys | Improvement signals, trend reviews | Free and paid plans starting at €28 ($33) per contributor/month |
| Harness SEI | DevOps-mature orgs | Pipeline efficiency | CI/CD-centric SDLC metrics | Indirect via Trellis signals | Harness ecosystem + DevOps tools | Bottleneck isolation, risk flags | Custom quote |
| Code Climate Velocity | Managers fixing review flow | PR & cycle time control | Code → review → deploy | No native AI ROI | Git, Jira | Before/after workflow analysis | Custom quote |
| Waydev | Orgs wanting one analytics layer | Broad engineering analytics | Delivery, code, planning | Yes, AI forecasts & guidance | Very wide tool coverage | Predictive timelines, risk alerts | $29+ per contributor/month |
| Allstacks | Leaders managing many initiatives | Delivery risk & forecasting | Cross-project flow signals | Indirect (AI diagnostics) | Git, PM, CI, incidents | Forecasting, investment risk | Starts at $400 per contributor/year |
| GitClear | Code-centric engineering teams | Code impact & reviews | Deep PR and code metrics | Limited (usage visibility) | Git, Jira | Review efficiency, churn control | Free and paid plans starting at $29 per contributor/month |
| DevDynamics | Teams mixing quality + cost | Delivery + AI code review | DORA + PR + cost views | Yes, AI review & metrics | 20+ dev & ops tools | Meeting-ready reports, cost splits | Free and paid plans starting at $19 per contributor/month |
| Oobeya | Enterprise exec oversight | Engineering command center | End-to-end SDLC signals | Yes, AI coding impact | Dev, CI/CD, security tools | Symptom detection, early warnings | Custom quote |

What Is BlueOptima and Why Do Engineering Leaders Use It?

BlueOptima provides engineering analytics focused on developer activity signals, code output volume, and cross-team comparison in large-scale organizations. It aggregates data across repositories to support executive-level reporting and benchmarking, rather than day-to-day delivery decisions such as scope adjustment, flow control, or release readiness.

BlueOptima’s appeal stems from standardized measurement across teams and roles.

When leadership asks for comparisons across orgs, regions, or roles, the system gives a consistent benchmark to anchor those conversations.

That matters in environments where delivery data is fragmented across many tools and teams. In Stack Overflow’s 2025 survey, 35% of developers report using 6-10 distinct tools to get their work done, which makes unified reporting harder without a centralized analytics layer.

So, more specifically, BlueOptima covers:

- Developer performance analytics that quantify output trends and activity over time.

- Code quality and security signals tied to vulnerabilities and secret exposure.

- AI impact measurement that correlates tool usage with changes in output and activity trends.

- Global benchmarking across teams, organizations, and geographies.

- Talent intelligence for hiring signals, internal mobility, and skill mapping.

- Multisite version control analysis across each repository, including Git, Gerrit, and SVN.

- Heavy reporting built for executive review, usually centered on a centralized dashboard.

As a result, the platform is well-suited for oversight, comparison, and executive alignment. That structure works when the goal is standardized reporting. It also means the product emphasizes measurement depth over direct guidance on execution, flow constraints, or delivery decisions.

So, if it's that good, why do some companies look for alternatives? Well, that leads us to our next point...

Why People Look for BlueOptima Alternatives

People look for BlueOptima alternatives because the platform typically answers executive reporting and benchmarking questions, but offers limited support for day-to-day delivery decisions. Metric coverage is a good start, but tech leaders need decision signals that translate directly into workflow changes.

Here are the main reasons that push leaders to evaluate other options:

- Interface and cognitive load: The dashboard structure exposes a large number of metrics at once, especially in early adoption. When reviews require interpretation rather than clarity, teams spend time learning the tool instead of acting on signals.

- Training overhead: Meaningful use typically depends on extended onboarding and continued oversight. That investment delays time-to-value and limits scalability across multiple teams.

- Reporting over execution: A strong focus on benchmarks and reports can limit actionable insights. When delivery slows, the system explains what changed, but does not indicate which workflow decision would remove the constraint.

- Metric risk: Heavy reliance on quantitative output measures can incentivize metric optimization when goals are unclear. Without tight links to delivery outcomes, numbers drift away from real progress.

At that point, alternatives become less about feature coverage and more about decision confidence. So, that's why we're going to talk about the best BlueOptima alternatives next.

Top 10 Competitors and Alternatives to BlueOptima to Consider

The top BlueOptima alternatives to consider include Axify, Jellyfish, Swarmia, Harness, and others. Teams typically explore alternatives when leadership asks for evidence tied to delivery outcomes.

Meanwhile, Gartner projects that by 2027, 50% of software engineering organizations will rely on software engineering intelligence (SEI) platforms. As adoption increases, the practical differences between these tools matter more: what data they expose, how quickly signals translate into decisions, and how directly they support delivery execution.

The following options represent the most relevant BlueOptima alternatives to consider going into 2026.

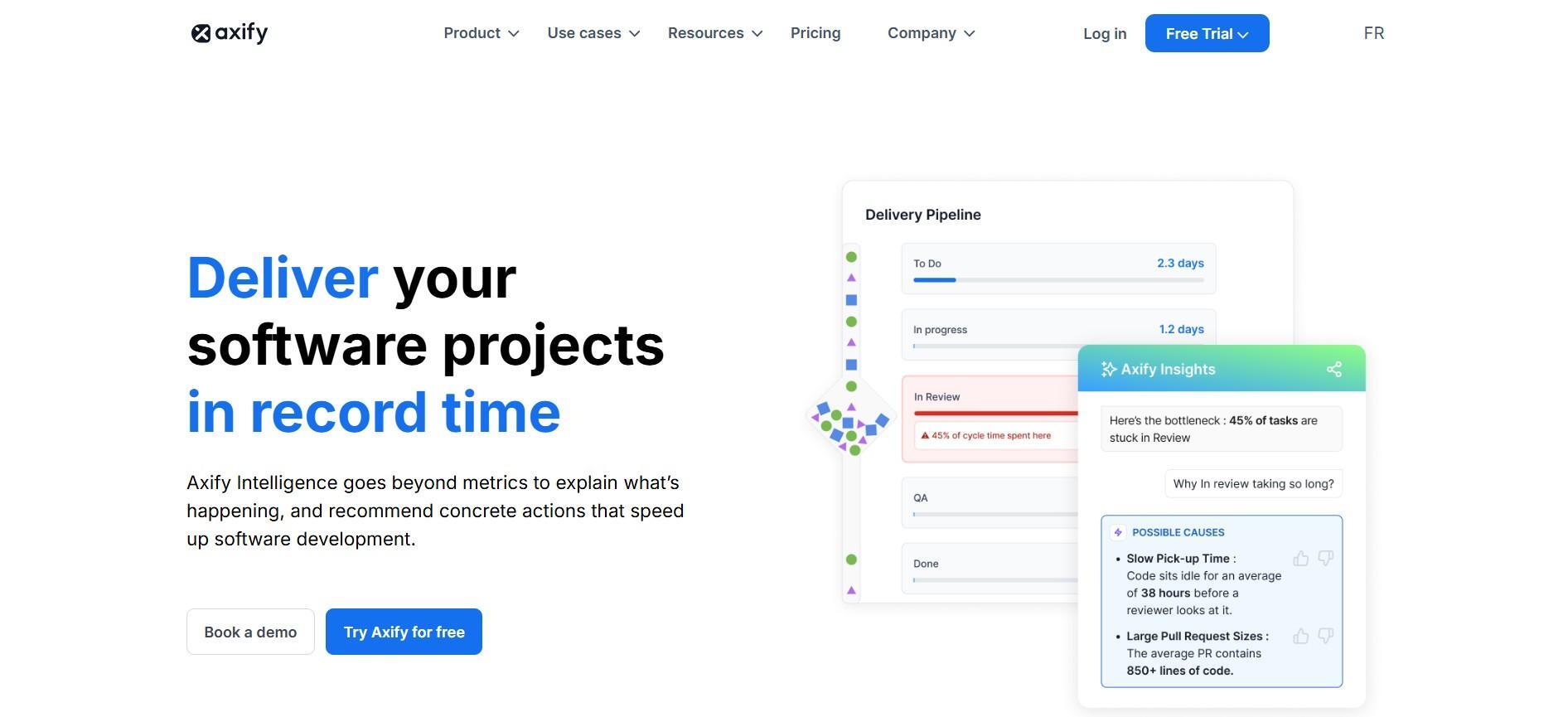

1. Axify

Axify connects software delivery signals directly to execution decisions. The platform focuses on how work flows through code, review, testing, and release, and where time, risk, or rework are introduced.

In practice, Axify provides:

- Visibility into core delivery and reliability signals, including DORA and flow metrics.

- End-to-end analysis of work movement using value stream mapping to surface bottlenecks and handoffs.

- Explanations of delivery changes by linking metric movement to workflow stages, ownership, and constraints.

- Decision support for software engineering leaders, with or without the use of AI-assisted analysis.

Axify extends this foundation with two AI-driven capabilities that focus on measurement and decision support rather than automation.

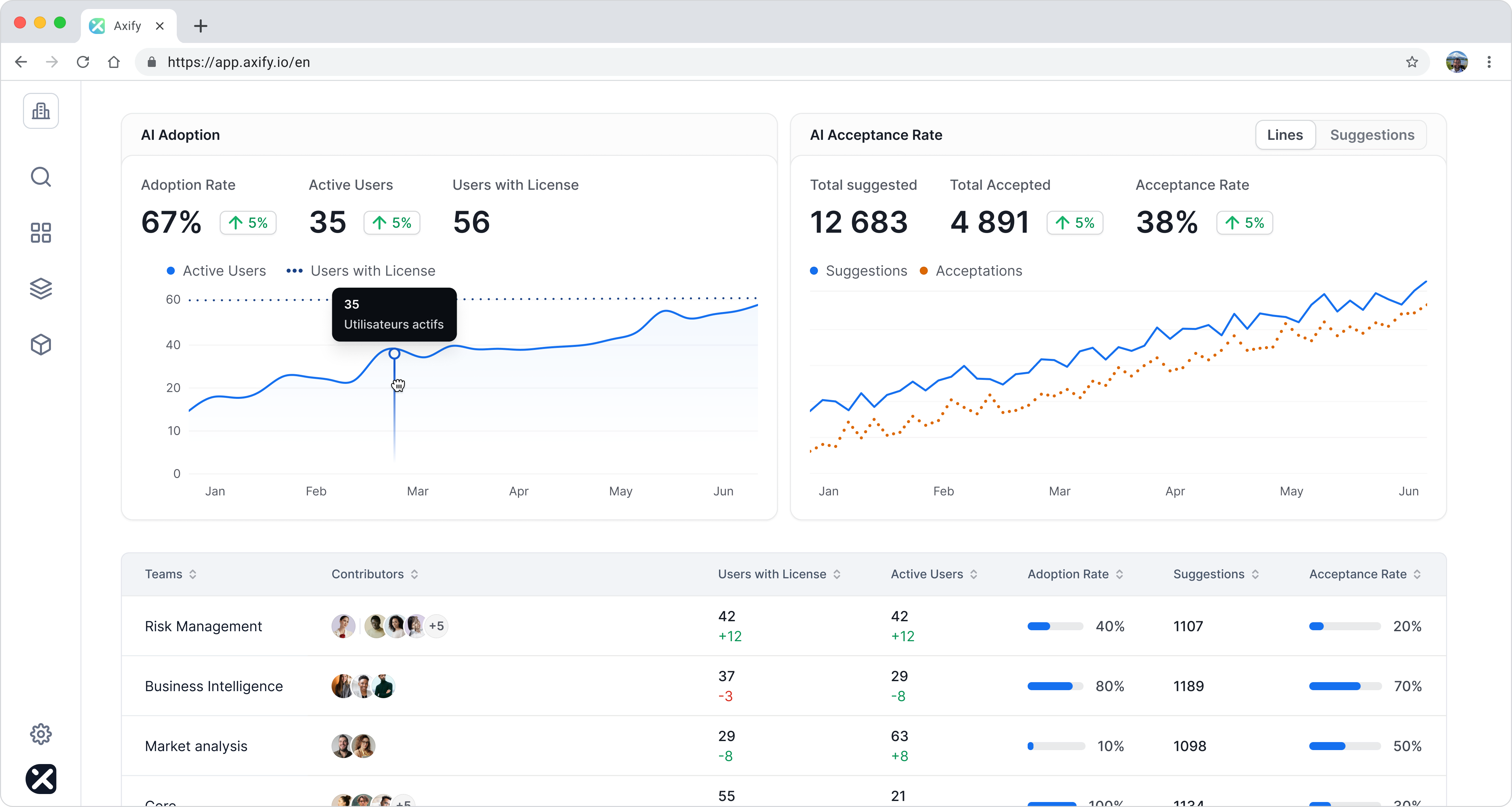

AI Impact

AI Impact measures how AI tool adoption affects software delivery. Using before-and-after comparisons, it shows whether AI usage correlates with changes in cycle time, throughput, rework, or review delay.

This allows teams to:

- See which AI tools are actually used, by which teams, and how consistently.

- Identify friction introduced by AI-assisted changes.

- Compare delivery performance with and without AI support.

- Assess ROI by linking AI adoption to observable delivery outcomes.
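The before-and-after comparison described above can be sketched in a few lines. This is a minimal illustration, not Axify's implementation: the `PullRequest` record, the `adoption_date` cutoff, and the sample values are all hypothetical, and a real analysis would also need to account for team, scope, and seasonal differences.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class PullRequest:
    merged_on: date          # day the PR was merged
    cycle_time_hours: float  # first commit to merge, in hours


def before_after_median(prs, adoption_date):
    """Return (median before, median after, relative change) of cycle time."""
    before = [p.cycle_time_hours for p in prs if p.merged_on < adoption_date]
    after = [p.cycle_time_hours for p in prs if p.merged_on >= adoption_date]
    if not before or not after:
        raise ValueError("need at least one PR on each side of the adoption date")
    m_before, m_after = median(before), median(after)
    return m_before, m_after, (m_after - m_before) / m_before


# Hypothetical sample: three PRs before and three after an assumed rollout date.
sample = [
    PullRequest(date(2025, 1, 10), 40.0),
    PullRequest(date(2025, 1, 20), 50.0),
    PullRequest(date(2025, 2, 5), 60.0),
    PullRequest(date(2025, 3, 3), 30.0),
    PullRequest(date(2025, 3, 12), 45.0),
    PullRequest(date(2025, 3, 25), 36.0),
]
m_before, m_after, change = before_after_median(sample, date(2025, 3, 1))
print(f"median before: {m_before:.1f}h, after: {m_after:.1f}h, change: {change:+.0%}")
```

The median is used here instead of the mean so that a single very long-lived PR does not dominate the comparison. The same shape works for throughput, rework, or review delay by swapping the measured field.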

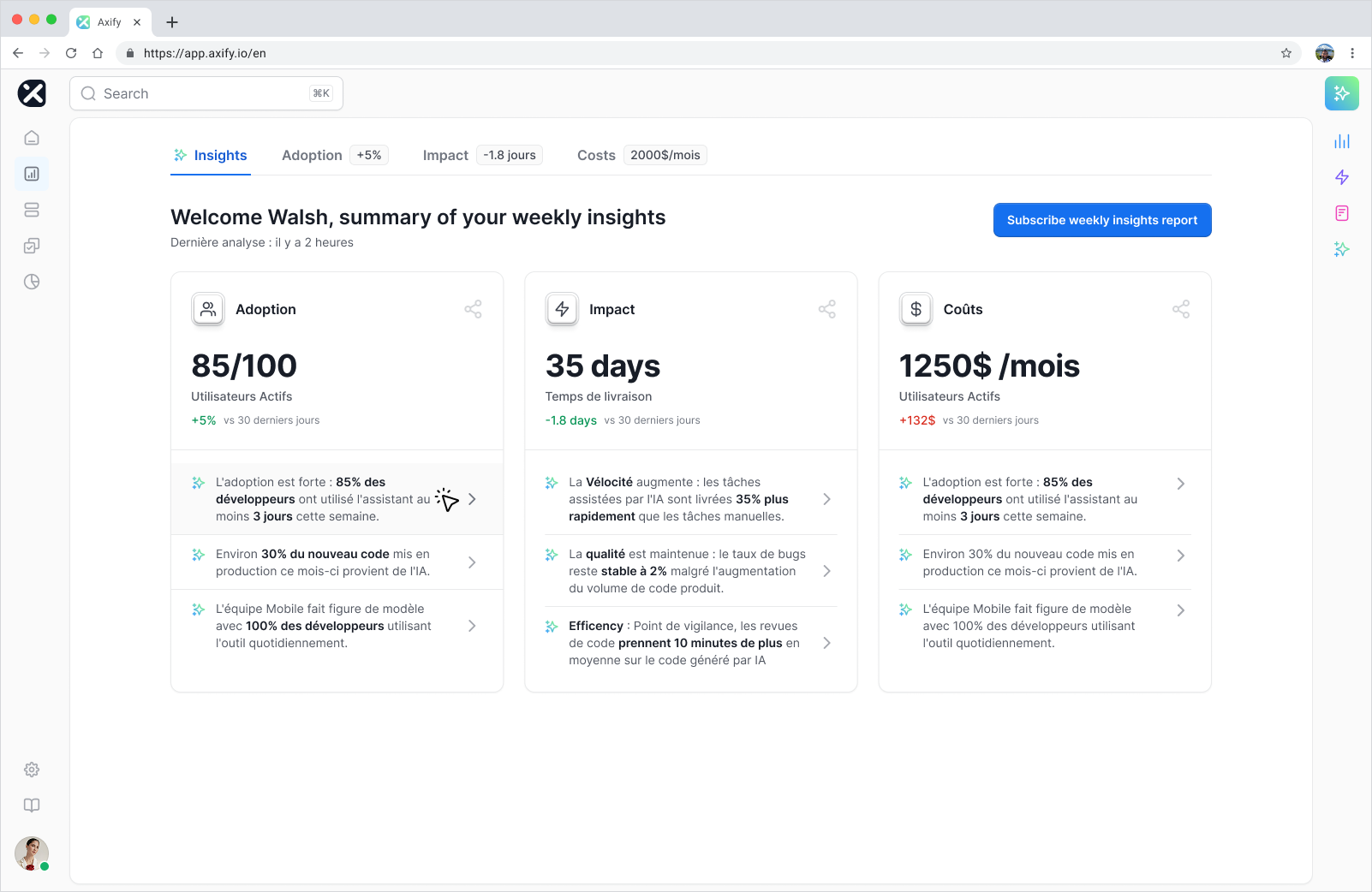

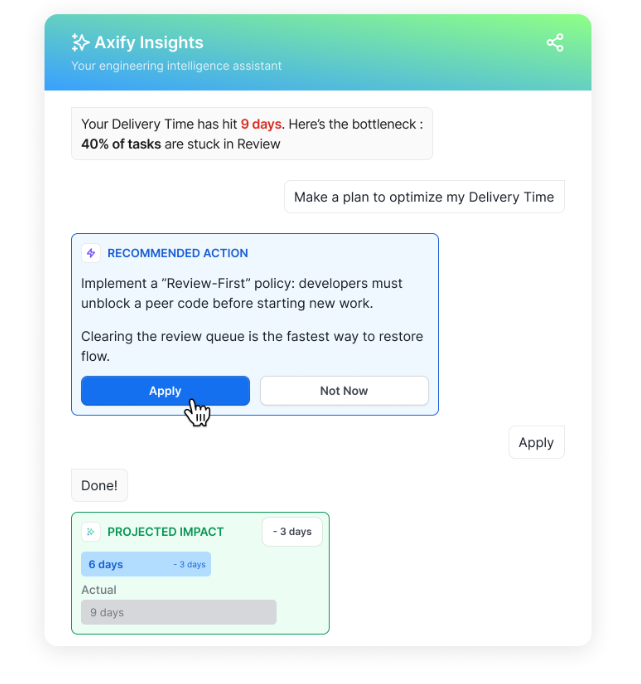

Axify Intelligence

Axify Intelligence analyzes delivery data to surface bottlenecks, explain their causes, and suggest actions tied to workflow decisions. Unlike general-purpose language models, it operates on your internal delivery data and system context.

Axify Intelligence surfaces relevant insights in an executive-style summary. Users can then dig deeper into the data with dashboards and a conversational agent to:

- Identify where delivery slows and why.

- Understand which constraints are driving recent metric changes.

- Explore the expected impact of adjusting ownership, sequencing, or review policies.

- Get actionable recommendations based on the generated insights.

Axify Case Studies

Prior to the release of Axify’s AI-based capabilities, two teams at the Business Development Bank of Canada (BDC) used Axify to analyze delivery flow across teams.

Based on those delivery insights, BDC made targeted changes to review ownership and work sequencing. Following those changes, delivery time improved by 51%, while time spent in quality control dropped by 81%.

This example reflects outcomes driven by delivery visibility and workflow analysis, prior to the introduction of Axify’s AI Impact and Axify Intelligence features.

Those newer capabilities build on the same delivery signals by adding structured before-and-after comparisons, AI adoption visibility, and decision-level recommendations. Together, they extend this foundation by helping teams understand not just what changed, but why it changed and where to act next as AI usage scales.

Strengths:

- End-to-end delivery visibility across the full development lifecycle.

- Flow metrics combined with value stream mapping to identify structural constraints.

- AI-assisted explanations that link metric movement to workflow causes.

- Before-and-after impact analysis for tooling and process changes.

- Natural-language querying over delivery data for faster decision making.

Limitations:

- No HR or talent-focused tooling.

Best for: Leaders who want to improve delivery.

Pricing: Plans start with a free trial, then $19 per contributor per month.

Website: Axify

2. Jellyfish

Jellyfish offers a management layer that ties engineering effort to business priorities, budgets, and outcomes. The platform shows how time and cost are spread across features, maintenance, and initiatives, which can help you align engineering work with strategy.

Jellyfish pulls data from different delivery tools and finance systems, so planning discussions stay grounded in actual spend and capacity. It also covers surveys and signals around developer experience, which adds context when output shifts without obvious delivery blockers.

At Jobvite, Jellyfish helped clarify where engineering time went as the company scaled. That visibility supported earlier intervention on key initiatives and contributed to roughly an 80% increase in throughput.

Strengths:

- Scenario planning that helps optimize capacity and portfolio tradeoffs.

- Data coverage that can integrate engineering and financial views.

- Reporting for growth and investment discussions.

Limitations:

- Limited code-level analysis for PR flow and review detail.

- Broader scope can slow the time to value for smaller orgs.

- Focus stays at the org level rather than individual delivery mechanics.

Best for: Leaders managing scale who need to connect delivery, cost, and planning.

Pricing: Custom quote.

Website: Jellyfish

3. Swarmia

Swarmia provides a metrics system built around healthy delivery behavior and steady improvement. The platform focuses on flow, collaboration, and outcome balance, which can give engineering leaders signals without pushing teams into metric games.

Its metrics cover delivery speed, review flow, and satisfaction, so execution conversations stay grounded in how work actually moves.

At Learnship, Swarmia supported a 20-25% cycle time reduction after leaders addressed review queues and handoff delays. Open pull requests dropped from 80 to 15, which improved throughput and team confidence.

Strengths:

- Clear use cases tied to flow, reviews, and collaboration.

- Support for continuous improvement frameworks without custom setup.

- Metrics designed to protect trust and team dynamics.

Limitations:

- No hiring or financial cost analytics.

- Limited code security or static analysis coverage.

- Insight quality depends on disciplined workflow data.

Best for: Teams focused on delivery health and sustainable improvement.

Pricing: Free for small teams and then €28 ($33) per developer per month.

Website: Swarmia

4. Harness

Harness’ engineering analytics track the delivery pipeline. Its Software Engineering Insights feature pulls data from source control, CI, CD, and planning tools to show where work slows before it reaches production.

Also, the Trellis framework analyzes more than 20 delivery signals to surface concrete causes behind review delays, flaky tests, or sprint volatility. Planning scorecards flag scope drift and estimation gaps early, so delivery risk shows up before a release slips.

At Sensormatic, Harness SEI supported a 60% improvement in coding hygiene and a 30% reduction in testing time. Teams deployed faster because quality issues moved upstream instead of surfacing during release.

Strengths:

- Pipeline-level analytics tied directly to CI and CD behavior.

- AI-driven Trellis signals that isolate specific delivery drag.

- Cost and effort breakdowns across feature and maintenance work.

Limitations:

- Most value appears inside the Harness ecosystem.

- Broader DevOps scope than some analytics-only tools.

- No people or satisfaction metrics.

Best for: Teams already operating mature DevOps pipelines.

Pricing: Custom quote.

Website: Harness

5. Code Climate Velocity

Code Climate Velocity provides a focused view into how work flows through code and reviews. The platform connects repository activity and work tracking data to show cycle time, PR size, review delay, and rework patterns at the team and workflow level.

That structure supports concrete conversations about review load, change risk, and sequencing, rather than abstract delivery health. The tooling also supports before-and-after comparisons, which tie process changes to measurable outcomes during growth.

At VTS, Velocity replaced fragmented Jira reporting and exposed review and flow constraints across 20+ teams. Cycle time dropped by about 30%, and deployment frequency doubled once leaders addressed the specific workflow issues the data surfaced.

Strengths:

- Visibility into PR flow and review delay patterns.

- Industry benchmarks that add external context to delivery metrics.

- Developer360 reports that support 1:1 coaching.

Limitations:

- Limited guidance on root cause without manual analysis.

- Narrow focus on delivery flow rather than cost or planning.

- Lighter integration beyond Git and Jira.

Best for: Teams improving review flow and delivery consistency.

Pricing: Custom quote.

Website: Code Climate Velocity

6. Waydev

Waydev offers a single system that pulls delivery, code, and planning data into one view, with AI features layered on top. The platform connects repositories, boards, CI, and quality tools so daily signals reflect how work actually moves.

Built-in forecasting and AI guidance help frame delivery risk and timeline changes before reviews happen. Lastly, its custom dashboards and ad-hoc delivery queries let teams adapt metrics to their delivery model, tooling stack, and planning cadence.

At Sky.One, Waydev surfaced delivery patterns that native tools never showed. Daily reports shaped management decisions, while sprint-level metrics helped teams focus support without turning data into control.

Strengths:

- Coverage across delivery, code, and planning signals.

- AI-based forecasts that flag timeline risk early.

- Flexible dashboards and custom metrics.

Limitations:

- Feature depth can raise setup effort.

- Advanced modules increase total cost.

- Insight quality depends on clean input data.

Best for: Leaders who want a single analytics layer across the stack.

Pricing: Starts at $29 per active contributor per month.

Website: Waydev

7. Allstacks

Allstacks is a value stream intelligence platform built for leaders who need early signals on delivery risk and execution drift. The system pulls data from source control, planning tools, CI, and incidents to reflect how work actually moves across initiatives.

Its Intelligence Engine analyzes patterns across projects to flag hidden delivery risk, including cases where status appears green but execution is slipping. Predictive forecasting ties scope, velocity, and staffing together so delivery dates update as conditions change.

At ShareFile, Allstacks surfaced execution friction across roughly 40 teams. After rollout, cycle time dropped by 32%, and pull request response time improved by 25%, giving leadership confidence in delivery alignment.

Strengths:

- Predictive forecasting tied to real delivery signals.

- Investment reporting that links effort to cost.

- AI-driven risk detection across parallel initiatives.

Limitations:

- Setup requires accurate project data mapping.

- Limited deep code or PR-level analysis.

- Enterprise focus may exceed small-team needs.

Best for: Leaders managing multiple initiatives and delivery risk.

Pricing: Starts at $400 per contributor per year.

Website: Allstacks

8. GitClear

GitClear is a Git-native analytics platform focused on measuring real code impact rather than surface-level activity. The system analyzes commits, pull requests, and review behavior to separate meaningful changes from noise using its Line Impact and Diff Delta models.

Metrics stay grounded in code movement, review latency, churn, and delivery patterns, with full transparency into how every signal is calculated. AI-assisted PR summaries and visual diffs shorten review cycles and reduce reviewer fatigue without altering existing workflows.

At NextGen Healthcare, GitClear supported over 700 developers across 100+ teams. Time spent under review dropped by about 30%, while some teams cut cycle time by up to 76% and increased meaningful code output by roughly 10%.

Strengths:

- Code-level impact scoring beyond raw activity counts.

- Transparent metric definitions engineers can audit.

- AI-supported PR summaries and visual diffs.

Limitations:

- Limited portfolio and financial reporting.

- Focused primarily on Git and issue trackers.

- High metric volume requires discipline.

Best for: Leaders who want code-first delivery signals.

Pricing: A free starter tier is available, and paid plans start at $29 per contributor per month.

Website: GitClear

9. DevDynamics

DevDynamics is for teams that want delivery metrics and code quality signals in the same workflow. The platform pairs standard delivery metrics with an AI code reviewer that scans pull requests and flags issues before human review, which changes how review time is spent day to day.

Custom metrics and dashboards allow measurement to reflect your team’s real delivery goals. However, customizing them correctly depends on understanding those goals and following best practices for building an Agile dashboard.

DevDynamics also has R&D reports that connect engineering time to cost, showing how effort splits across features, bugs, and maintenance.

At PayU, engineering managers used DevDynamics to replace intuition-driven discussions with concrete performance data across teams. After rollout, leaders relied on detailed reports covering coding speed, pull request cycle times, and issue resolution to run planning and execution meetings.

Strengths:

- AI code reviewer integrated into PR workflows.

- Customizable metrics, dashboards, and reports.

- R&D reports with cost insights and basic metrics.

Limitations:

- Developer 1:1 reports can be misused by management.

- AI review still requires human judgment.

- High flexibility can dilute focus.

Best for: Teams balancing code quality, delivery, and cost visibility.

Pricing: A free tier is available, and paid plans start at $19 per contributor per month.

Website: DevDynamics

10. Oobeya

Oobeya works like a digital command center for engineering leaders who need fast, decision-ready signals from the SDLC. It pulls data from source control, CI/CD, planning tools, security scanners, and quality systems to connect delivery speed with reliability and risk.

Instead of static dashboards, the platform flags recurring delivery symptoms such as stalled reviews, repeated bug reopenings, or unstable releases and ties them back to concrete workflow behavior. At the same time, AI usage tracking shows how coding assistants affect throughput, quality, and rework across teams.

Strengths:

- Acts as a digital war room or copilot for leadership decisions.

- DORA, SPACE, DevEx, security, and code quality signals in one view.

- Team symptoms, bug reporting, and AI coding impact analysis.

Limitations:

- Gamification features can be misused without clear leadership guardrails.

- Metric breadth may require upfront calibration.

- Symptom signals depend on clean underlying data.

Best for: Senior leaders running multiple teams who want early warning signals.

Pricing: Custom quote.

Website: Oobeya

How We Evaluated These BlueOptima Alternatives

To help you pick the right BlueOptima alternative, we evaluated how each tool supports execution, tradeoffs, and accountability in real delivery conditions.

Delivery Visibility

We evaluated whether each tool shows how work moves through the development lifecycle. Specifically, we looked at whether delays become visible while teams can still act, and whether historical data explains where time was spent when throughput changed. Tools that stop at aggregate summaries make root-cause analysis slower and decisions reactive.
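One way to picture "where time was spent" is to split each work item's cycle time into stage durations. The sketch below assumes a hypothetical event log of `(item_id, stage, entered_at)` transitions. The stage names and timestamps are illustrative only and do not reflect any vendor's data model.

```python
from collections import defaultdict
from datetime import datetime


def time_per_stage(events):
    """Sum the hours spent in each stage across all work items.

    `events` is a list of (item_id, stage, entered_at) tuples. Time in a stage
    is the gap until the same item's next event, so the final stage of each
    item (here "done") has no successor and contributes no time.
    """
    by_item = defaultdict(list)
    for item_id, stage, entered_at in events:
        by_item[item_id].append((entered_at, stage))

    totals = defaultdict(float)
    for transitions in by_item.values():
        transitions.sort()  # order each item's events chronologically
        for (start, stage), (end, _next_stage) in zip(transitions, transitions[1:]):
            totals[stage] += (end - start).total_seconds() / 3600
    return dict(totals)


# Hypothetical log for two work items, A and B.
events = [
    ("A", "coding", datetime(2025, 3, 3, 9)),
    ("A", "review", datetime(2025, 3, 3, 17)),
    ("A", "deploy", datetime(2025, 3, 5, 17)),
    ("A", "done", datetime(2025, 3, 5, 18)),
    ("B", "coding", datetime(2025, 3, 4, 9)),
    ("B", "review", datetime(2025, 3, 4, 13)),
    ("B", "deploy", datetime(2025, 3, 5, 13)),
    ("B", "done", datetime(2025, 3, 5, 15)),
]
totals = time_per_stage(events)
bottleneck = max(totals, key=totals.get)
print(totals, "-> largest share of time:", bottleneck)
```

In this made-up data, review accounts for most of the elapsed time, which is the kind of signal an aggregate cycle-time number alone would hide.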

Workflow-Level Context

Workflow-level context determines whether teams can trace delays back to specific causes, such as review queues, handoffs, or sequencing decisions. We assessed whether each platform connects code changes, tickets, and approval steps into a single view that explains why work stalled and what to adjust next, rather than forcing teams to infer causes across tools.

AI Impact Measurement

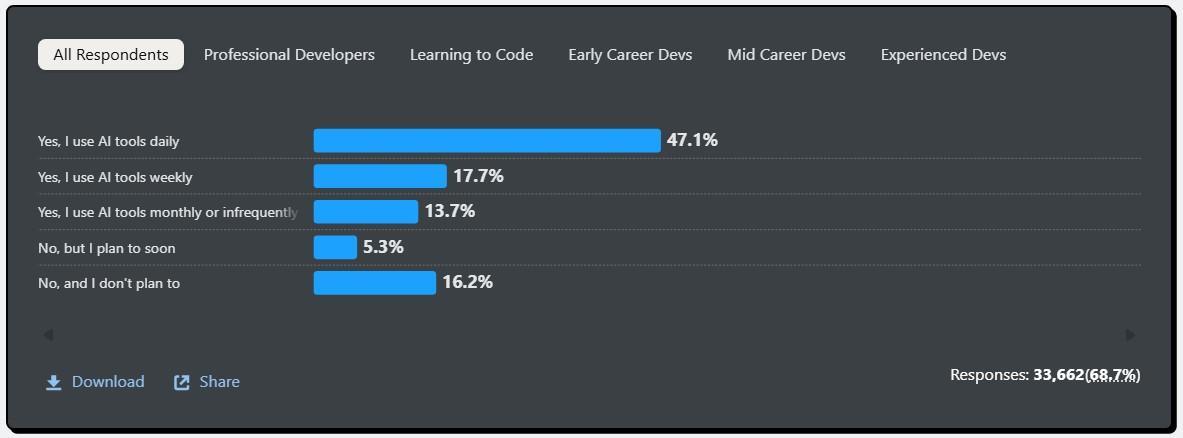

AI usage has shifted from experimental to daily workflow. According to the 2025 Developer Survey by Stack Overflow, 84% of respondents are using or planning to use AI tools in their development process.

As such, we awarded extra points to platforms that can compare delivery performance before and after AI assistance through objective engineering metrics instead of relying on license counts or self-reported usage.

Source: 2025 Developer Survey by Stack Overflow

Decision-Support Signals

Most teams do not need tools to make decisions for them. They need tools that provide sufficient visibility to support decisions without ambiguity.

We evaluated whether each platform provides that baseline visibility across code, workflow, and delivery outcomes. Tools score higher when they go beyond visibility and actively support decision-making by highlighting what changed, why it changed, and which lever is most likely to affect the outcome.

Prioritization Clarity

You have prioritization clarity when delivery effort matches stated priorities and tradeoffs are visible.

As such, we evaluated whether each tool helps teams and leaders understand how capacity is allocated, how work competes across initiatives, and where priorities conflict with delivery reality. Tools score higher when they surface misalignment early, show the impact of shifting priorities, and make tradeoffs explicit before delivery degrades.
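The comparison between stated priorities and actual effort can be made concrete with a small allocation check. This is a sketch under assumptions: the categories, target shares, effort figures, and 10-point tolerance are hypothetical, and real platforms derive effort from ticket and commit data in ways not shown here.

```python
def allocation_gaps(effort_days, target_share, tolerance=0.10):
    """Return {category: actual share - target share} where the gap exceeds tolerance."""
    total = sum(effort_days.values())
    if total <= 0:
        raise ValueError("total effort must be positive")
    gaps = {}
    for category, target in target_share.items():
        actual = effort_days.get(category, 0) / total
        gap = actual - target
        if abs(gap) > tolerance:
            gaps[category] = round(gap, 2)
    return gaps


# Hypothetical quarter: engineer-days logged per category vs. the planned split.
effort = {"features": 45, "maintenance": 40, "tech_debt": 15}
targets = {"features": 0.60, "maintenance": 0.25, "tech_debt": 0.15}
gaps = allocation_gaps(effort, targets)
print(gaps)
```

A negative value means a category received less effort than planned, and a positive value means it received more. Surfacing only the gaps beyond a tolerance keeps the review focused on real misalignment instead of normal variation.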

Low Analysis Overhead

We evaluated how much effort is required to interpret delivery signals. Tools perform better when leaders can understand what changed and why during normal reviews, without additional data preparation, custom reports, or analyst support.

Executive Readiness

Delivery metrics must translate cleanly outside engineering. We assessed whether platforms connect delivery behavior to risk, cost, and staffing implications, so leaders can explain outcomes in financial and operational terms without re-framing or manual interpretation.

Which BlueOptima Alternative Will You Pick?

If you've read this far, you know that BlueOptima performs well when the goal is standardized reporting, benchmarking, and executive-level comparison.

Teams look elsewhere when they need visibility that explains why delivery outcomes changed and what to do next inside real workflows.

The tools covered in this list differ in how they support that shift. Some improve delivery visibility. Others go further by connecting signals across code, workflow, and release to support prioritization, risk management, and accountability.

The strongest platforms make tradeoffs visible early, reduce interpretation overhead, and help leaders act before delivery degrades.

To see how this works in practice, book a demo with Axify and evaluate the difference directly.

FAQs

What does BlueOptima do?

BlueOptima aggregates commit data, code changes, and contribution signals to measure developer activity and productivity across teams, roles, and regions. Its primary use is standardized reporting and benchmarking, allowing leadership to compare output trends and productivity patterns at scale. This makes it well suited for executive oversight, portfolio-level reviews, and performance reporting.

What is BlueOptima Code Detector?

BlueOptima Code Detector analyzes codebases to surface quality and security signals associated with developer activity. It identifies issues such as vulnerabilities, exposed secrets, and risky coding patterns at the commit level. These findings feed into centralized reports and developer productivity insights, rather than directly influencing day-to-day delivery workflows or execution decisions.

What are the best BlueOptima alternatives?

The best BlueOptima alternatives include Axify, Jellyfish, Swarmia, and similar tools that track your software delivery metrics. Axify differentiates itself by measuring AI impact with before/after delivery comparisons and by providing AI-driven insights based on your actual delivery history. Its AI assistant analyzes bottlenecks, changes in flow, and reliability trends, then returns context-specific explanations and recommendations.