Measuring engineering productivity shows you the status quo. Decisions determine what changes next.

The issue is not always missing data. If you have good data, how you interpret it matters just as much.

You might already track essential engineering metrics. Yet leadership still asks: what changed, and what should we adjust?

This means you should move from mere reporting to explaining cause and effect, as our client Newforma did.

After working with us at Axify for five months, the team reduced lead time for changes by 63%, cut PR cycle time by 60%, and increased deployment frequency by 2,150%, which resulted in 22x more deliveries. Those gains came from identifying bottlenecks, reorganizing teams, and tightening validation and deployment processes.

In this article, you will see how measuring productivity becomes the foundation for engineering decisions that lead to real business results. Plus, you will learn how to design a system that tells you what to change next and why.

Pro tip: Axify Intelligence builds on that foundation by analyzing your historical delivery data, suggesting workflow-level actions based on recent trends, and helping you implement them right from the app. Try it out today!

What Does It Mean to Measure Engineering Productivity?

Measuring engineering productivity means building a decision model that uses delivery signals to explain system behavior and guide action. As such, you need to decide which metrics can support planning and risk management.

Measurement starts with separating signals from outcomes.

Metrics like lead time, deployment frequency, or work-in-progress reflect how your delivery system operates. Revenue, retention, or customer growth are business outcomes shaped by many variables. Conflating them creates false accountability and weakens planning decisions.

Remember: Every metric is a representation of system behavior. What matters is how reliably it reflects what is happening inside the delivery workflow.

- Some metrics describe delivery mechanics directly: cycle time, throughput, deployment frequency, and change failure rate. These signals are generated by workflow events and help explain how work moves through the system and where friction accumulates.

- Other metrics approximate effort or output, such as story points completed or pull request volume. These are constructed indicators. They can still provide useful context, but only if you understand what they represent and what they do not.
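
To make "generated by workflow events" concrete, here is a minimal sketch of deriving three delivery signals from raw deployment records. The `Deployment` record shape and the `delivery_metrics` helper are hypothetical names chosen for illustration; they do not reflect Axify's data model or any specific tool's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Deployment:
    # Hypothetical record shape, assumed for this example only.
    committed_at: datetime  # first commit of the change
    deployed_at: datetime   # when the change reached production
    failed: bool            # True if the change caused a production failure


def delivery_metrics(deployments: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarize one observation window of deployments into three signals."""
    if not deployments:
        raise ValueError("need at least one deployment in the window")
    lead_times_h = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deployments
    ]
    return {
        "median_lead_time_hours": median(lead_times_h),
        "deployments_per_week": len(deployments) / (window_days / 7),
        "change_failure_rate": sum(d.failed for d in deployments) / len(deployments),
    }


# Made-up sample data covering a 14-day window.
start = datetime(2024, 1, 1, 9, 0)
sample = [
    Deployment(start, start + timedelta(hours=20), False),
    Deployment(start + timedelta(days=3), start + timedelta(days=3, hours=30), True),
    Deployment(start + timedelta(days=7), start + timedelta(days=7, hours=10), False),
    Deployment(start + timedelta(days=10), start + timedelta(days=10, hours=40), False),
]
metrics = delivery_metrics(sample, window_days=14)
print(metrics)
```

The median is used for lead time here because a single slow change can distort an average; that is a design choice for the sketch, not a rule.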

Once these signals are defined, the next step is understanding when they appear in the delivery process. This is where the distinction between leading and lagging indicators becomes useful.

- Lagging indicators describe outcomes after they occur. Change failure rate and escaped defects show that quality issues already materialized; in other words, they confirm impact.

- Leading indicators signal pressure earlier in the system. Growing work-in-progress, longer review queues, or aging tickets indicate instability before failures surface.

If you want to measure engineering productivity, combine both. Leading indicators allow early intervention, while lagging indicators confirm whether interventions worked.
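
As a rough illustration of a leading indicator, the sketch below counts work-in-progress and lists aging tickets before any failure has surfaced. The ticket IDs, dates, and the 10-day threshold are made-up assumptions; pick a threshold that matches your own workflow.

```python
from datetime import date

# Hypothetical in-progress items: (ticket id, date work started).
in_progress = [
    ("T-101", date(2024, 3, 1)),
    ("T-102", date(2024, 3, 11)),
    ("T-103", date(2024, 3, 13)),
    ("T-104", date(2024, 2, 20)),
]


def aging_items(items, today, max_age_days):
    """Return (ticket, age in days) for items older than max_age_days, oldest first."""
    aged = [(ticket, (today - started).days) for ticket, started in items]
    return sorted(
        [(ticket, age) for ticket, age in aged if age > max_age_days],
        key=lambda pair: pair[1],
        reverse=True,
    )


today = date(2024, 3, 15)
wip = len(in_progress)
stale = aging_items(in_progress, today, max_age_days=10)
print(f"WIP: {wip}, aging items: {stale}")
```

A lagging indicator such as change failure rate would then tell you, some weeks later, whether acting on this list made a difference.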

If you build a model that remains reliable under real delivery conditions, it will support better decisions over time.

As two experts explain:

“Of course, when I say measure, what I mean is how do we build a model of engineering productivity? … any model we build is going to be wrong. So today, we want to think about how to measure engineering productivity so that our models are not wrong in problematic ways. We want them to still be useful and applicable to the problems we're trying to solve.”

- Ciera Jaspan and Collin Green

Why Most Engineering Productivity Measurement Fails

Most engineering productivity measurement fails because it produces static reports that don’t help you make better decisions. Data accumulates, but trade-offs remain unclear, and accountability does not change. This usually happens for structural reasons.

These are the most common measurement system failures you might encounter:

- Metric overload: You adopt multiple frameworks and dashboards in parallel, hoping broader coverage improves decision-making confidence. However, research from Spotlight on Productivity Engineering shows that 78% of organizations use multiple measurement frameworks, yet only 12% report high confidence in their productivity measurements. That gap shows the mere number of metrics does not translate into decision clarity, especially if your leadership team is debating interpretations or not focusing on the same signals.

- Vanity KPIs: Commits, pull request counts, and story points describe work performed at an individual level. They do not automatically describe how efficiently work moves through the system or how reliably it reaches production. High activity can coexist with long queues, rework, or instability. When activity becomes the primary signal, planning often focuses on increasing output or adding capacity. The actual constraint in the workflow (review bottlenecks, environment instability, unclear requirements) remains unaddressed.

- Misinterpreting trends: This means reacting to single data points instead of sustained patterns. But delivery systems are influenced by seasonality, release cycles, and batch size variation. Without sustained trend analysis, teams optimize for temporary variation instead of structural improvement.

- Local vs. global optimization: One team reduces review time, while downstream validation queues grow. Improvements in one part of the engineering stack shift delays elsewhere, which masks system-level risk.

- Metrics without ownership: Signals exist, but they are not connected to a decision owner. No one is responsible for interpreting the change, diagnosing the cause, and proposing an intervention. In that environment, metrics become historical artifacts. They describe what happened, but they do not influence what happens next.

Tools for Measuring Engineering Productivity: What to Look For

You can certainly manage measurement without a dedicated platform, but having one makes the process much smoother and gives you back valuable time when it matters most.

When evaluating platforms, focus on whether they feature essential engineering metrics that you can easily translate into action.

These are the three layers you should examine.

What Most Engineering Productivity Tools Offer [and What They Lack]

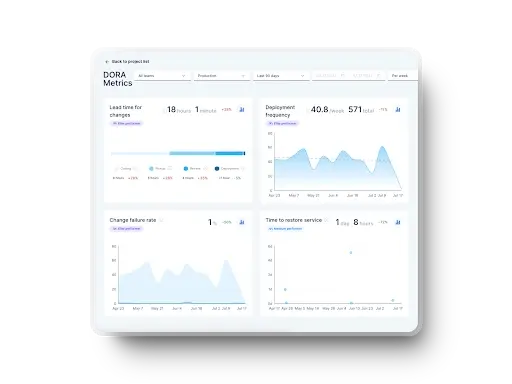

Most tools aggregate metrics and display them through dashboards. They surface DORA indicators, flow metrics, pull request throughput, and stability trends. That visibility matters because you need shared baselines.

Axify covers this layer thoroughly. You can track:

- DORA metrics such as deployment frequency, lead time for changes, change failure rate, and mean time to recovery (now known as failed deployment recovery time).

- Flow metrics that expose queue times, review delays, and the distribution of work in progress.

- Value stream mapping that shows bottlenecks across your delivery stages.

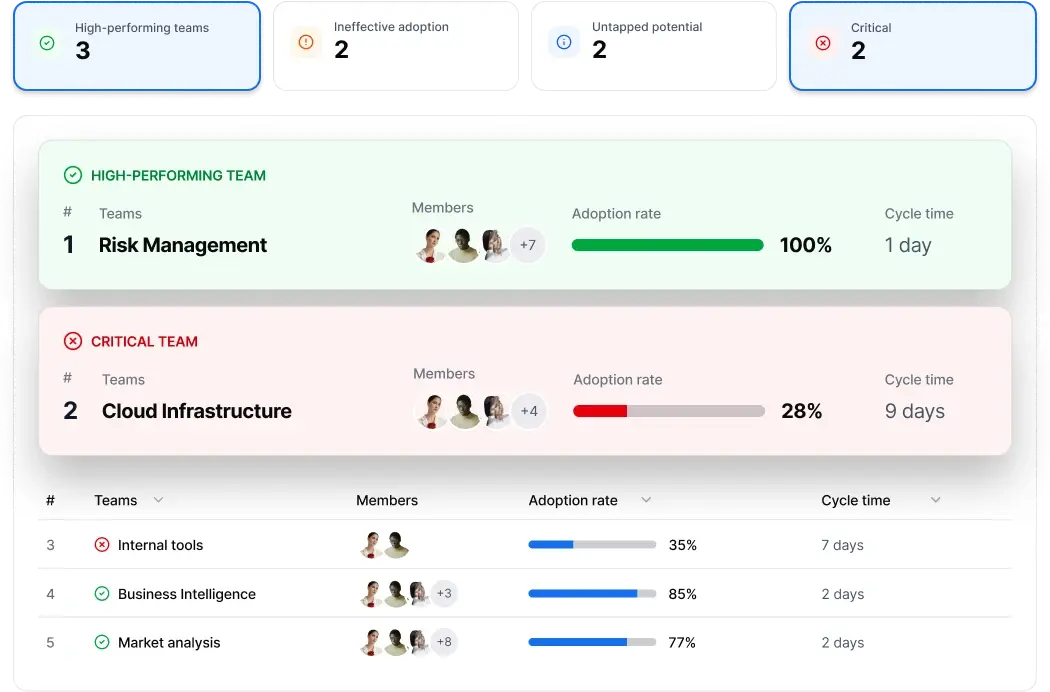

- AI Impact, which adds correlation analysis between AI adoption and engineering metrics. This allows you to see how cycle time, rework, or review delays change as AI usage increases across teams.

These capabilities give you detailed key performance indicators across the delivery lifecycle.

However, some platforms stop at exposure. Dashboards show what happened, but do not explain why it happened or which policy to adjust.

For example, if review time increases, the tool may show longer queues, but it rarely connects them to pickup delays, large pull requests, or ownership rules. As a result, leaders don’t have the full picture.

Visibility is necessary. Yet visibility alone does not resolve prioritization debates or forecast uncertainty.

That gap leads to the next requirement.

Engineering Productivity Measurement Function

Before optimizing, you need to understand baseline maturity.

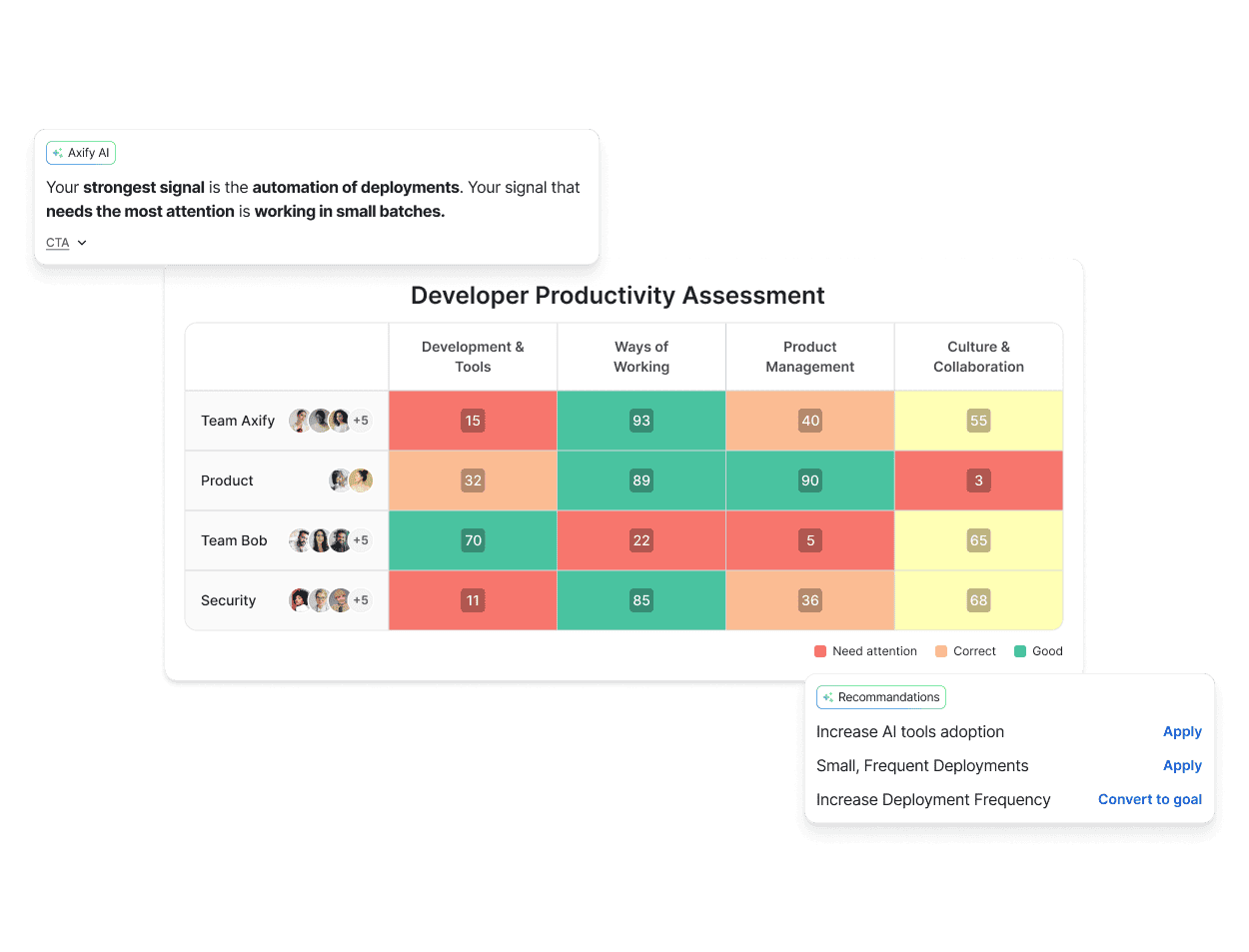

Axify’s Developer Productivity Assessment gives you a standardized evaluation of your engineering productivity across four pillars:

- Development & Tools

- Ways of Working

- Product Management

- Culture & Collaboration

First, you connect your existing toolkit to automatically capture delivery data. Then, you complete a concise questionnaire to capture qualitative context. Finally, you receive a consolidated report that compares teams against consistent benchmarks.

This function serves a different purpose than metrics dashboards: it identifies structural gaps rather than tracking daily performance.

For example:

- If automation coverage is low and review cycles are unstable, the issue may not be “developer speed.” It may be weak CI maturity or inconsistent validation practices.

- If collaboration friction slows validation, the issue may be unclear ownership or documentation standards, not headcount.

So the function of this assessment is:

- Establish a baseline maturity level.

- Reveal systemic weaknesses across tooling, workflow, product, and culture.

- Prevent premature hiring or broad “transformation” efforts.

- Create alignment between technical leads and leadership around what actually needs fixing.

And most importantly:

It separates maturity from output.

A team can ship a lot of code and still be structurally fragile. The assessment measures structural readiness and process strength, so improvement can be measured over time against a consistent baseline.

Tools That Turn Engineering Productivity Metrics into Decisions

Even with strong visibility and baseline assessments, interpretation remains complex because delivery systems involve multiple interacting constraints.

When a queue expands, the cause may be higher review load, increased context switching, or rising code volume from AI-assisted changes. A dashboard can show the queue growth, but it rarely explains which operational condition triggered it.

This is where decision-layer capability matters.

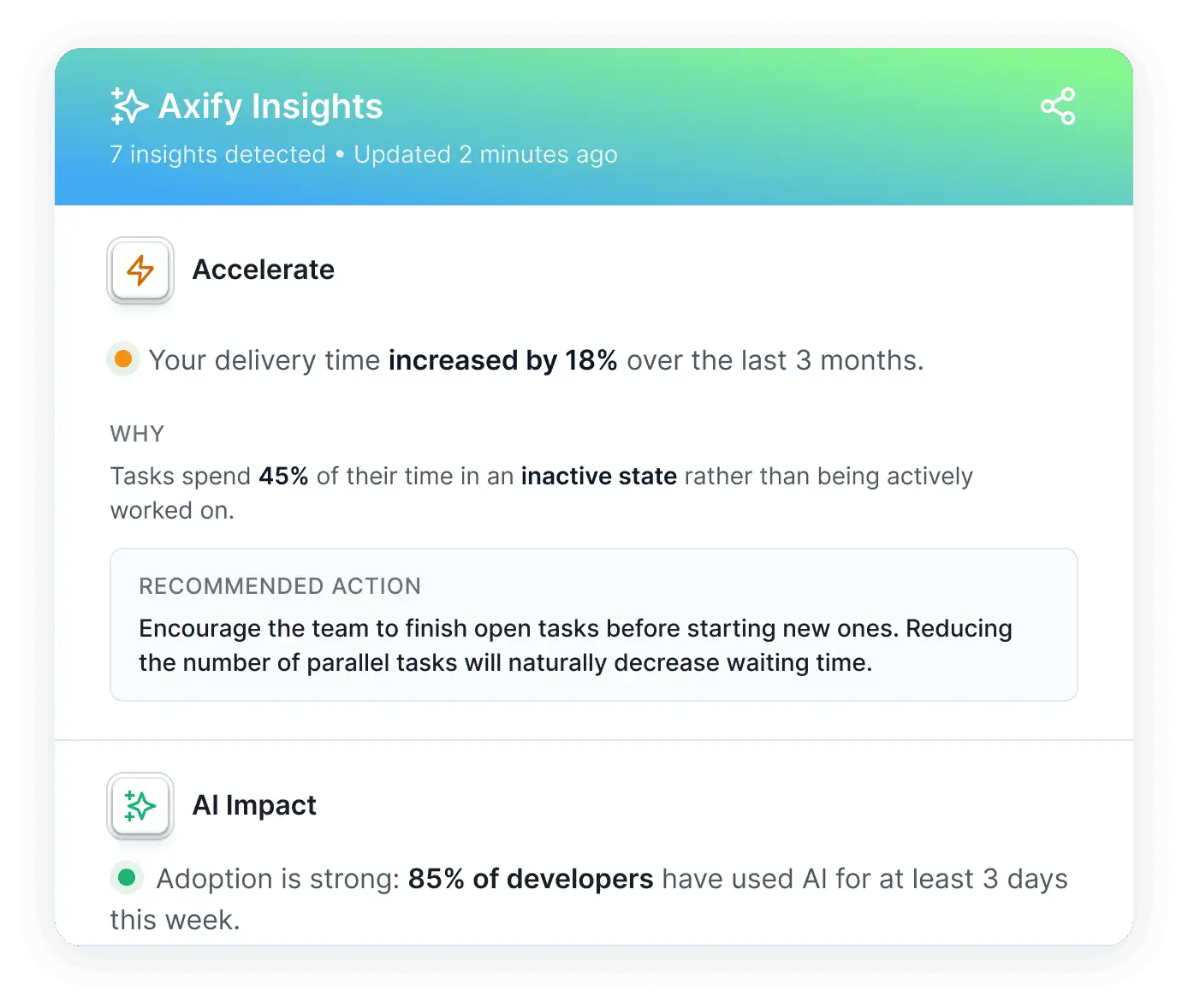

Axify Intelligence analyzes delivery patterns continuously and surfaces insights tied to workflow decisions.

Instead of presenting isolated metrics, it:

- Identifies bottlenecks.

- Explains likely causes.

- Recommends actions such as adopting a review-first policy or limiting work-in-progress to reduce waiting time.

Pro tip: Because this AI assistant operates on your internal delivery history, its recommendations reflect your system context. In other words, you don’t get the generic advice a general-purpose LLM would give.

For instance, if delivery time increases 18% over three months and tasks remain inactive for extended periods, the system can connect inactivity patterns to queue expansion and suggest finishing open tasks before starting new ones. That explanation links the signal to the cause and proposed correction.

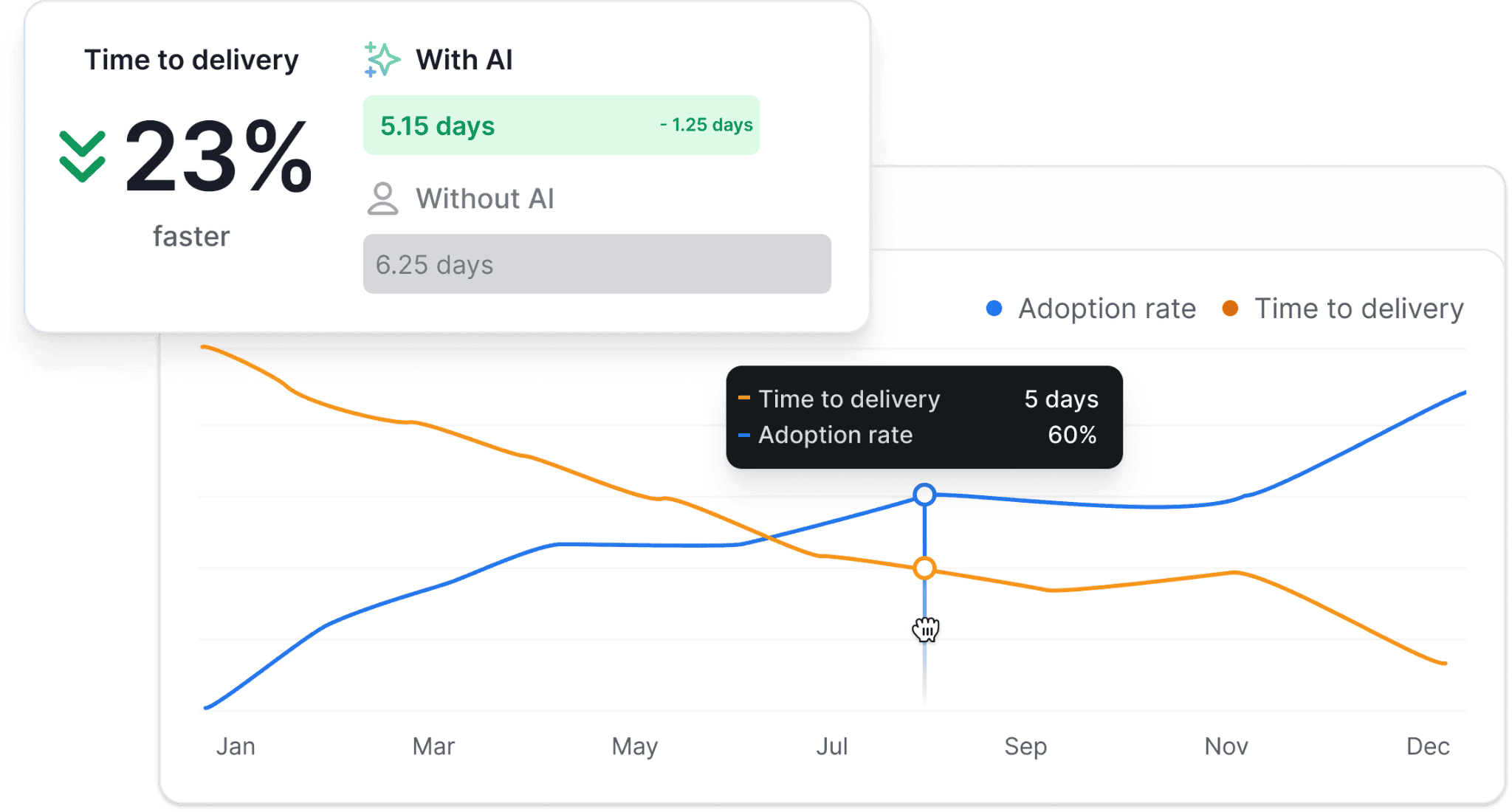

Additionally, you can see AI-related insights regarding how AI adoption influences your engineering productivity.

If AI-assisted tasks move faster but code review duration expands, the system surfaces that trade-off. This helps you balance speed against stability and maintain discipline around continuous improvement.

More importantly, this AI assistant supports conversational interaction. Teams can ask focused questions about specific stages, recent metric changes, or forecast implications.

Then, the assistant provides context-aware responses and actionable recommendations grounded in your data. As a result, decision-making shifts from interpreting dashboards to evaluating proposed workflow adjustments and implementing solutions right from the platform.

How Axify Designs Engineering Productivity Frameworks

In practice, a measurement framework only becomes useful when it connects metrics to operational decisions. At Axify, productivity frameworks are built through a structured process that combines delivery data, workflow analysis, and targeted interventions.

1. Establish a Baseline of Engineering Productivity

The first step is understanding how the delivery system currently behaves. Axify’s Developer Productivity Assessment gives you the structured evaluation you need to establish a baseline maturity level and identify structural gaps. It also helps you distinguish between productivity constraints caused by workflow design, tooling limitations, or coordination issues.

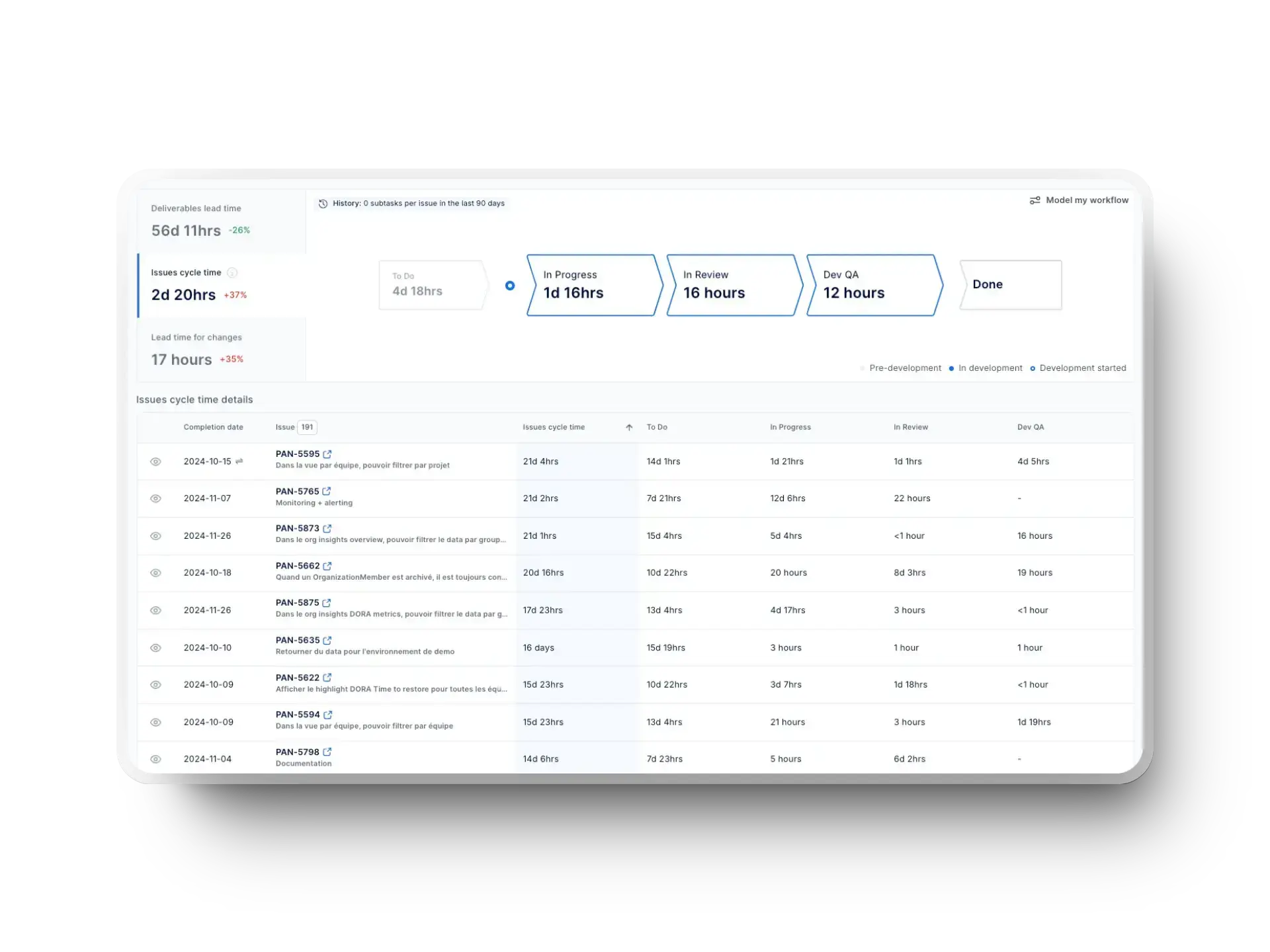

2. Analyze Delivery Flow and Identify Constraints

Once the baseline is established, teams analyze how work moves through the delivery pipeline using delivery metrics such as DORA and flow metrics.

Value stream mapping helps expose where work accumulates, while trend analysis reveals whether delays originate in planning, validation, review, or deployment stages. The goal is to identify structural constraints in the system.

This approach helped two engineering teams at the Development Bank of Canada to identify inefficiencies in pre-development activities and quality control processes.

Within three months, delivery time improved by up to 51% and the teams gained an additional 24% development capacity, leading to $700k in recurring productivity gains per year.

Here was our game plan:

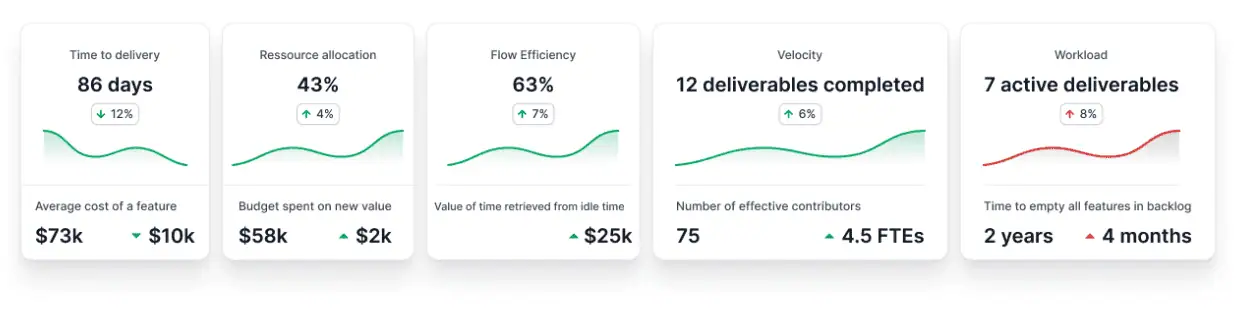

3. Measure the Impact of Changes and New Tooling

When teams introduce new practices or tools, the next step is verifying whether they actually improve delivery outcomes.

Use Axify’s AI Impact to measure changes in delivery performance before and after AI adoption. That way, you can determine whether new tools accelerate delivery or simply shift delays elsewhere in the workflow.

4. Continuously Analyze Delivery Patterns and Recommend Actions

Finally, productivity measurement becomes part of an ongoing improvement cycle. Use Axify Intelligence to surface insights tied to workflow decisions, identify bottlenecks, see likely causes, and get targeted recommendations.

How to Design Your Own Framework for Measuring Engineering Productivity

A measurement framework should clarify what decisions leaders make and what data informs those decisions. Without that link, metrics remain descriptive.

These are the core design elements your framework must address.

What Questions Should Measurement Answer?

Measurement should answer operational questions that change what you do next. If your model cannot influence planning or intervention, it is incomplete.

These are the questions your framework must consistently address:

- Where are you losing the most time in your delivery process?

- What changed recently, and how did it affect flow, stability, or predictability?

- Which teams or workflows need intervention first?

- Are your improvements working, or are you shifting bottlenecks elsewhere?

Start with time loss. Nearly half of developers lose up to 10 hours per week searching for information due to poor documentation and fragmented knowledge systems.

That friction slows the developer workflow, which affects development speed and increases idle time across teams. If pickup time, review latency, or rework loops increase, you can examine ownership clarity, documentation standards, or workflow design before adding headcount.

Next, focus on trend variability. A single data point does not inform a decision. Trends and variance do. You need to see when review queue time expands, when throughput variance increases, or when change failure rate moves beyond baseline.
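
One possible way to quantify "variance increases" and "moves beyond baseline" is sketched below. The coefficient of variation and the two-standard-deviation band are illustrative assumptions, not thresholds prescribed by this article or by Axify, and the sample numbers are invented.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values: list[float]) -> float:
    """Spread relative to the mean; higher means less predictable throughput."""
    return pstdev(values) / mean(values)


def beyond_baseline(baseline: list[float], current: float, k: float = 2.0) -> bool:
    """True if `current` sits more than k standard deviations above the baseline mean."""
    return current > mean(baseline) + k * pstdev(baseline)


# Items completed per sprint: same average, very different variability.
stable_throughput = [10, 11, 9, 10, 10]
volatile_throughput = [4, 16, 7, 15, 8]

# Change failure rate observed over four earlier periods.
baseline_cfr = [0.10, 0.12, 0.08, 0.10]

cv_stable = coefficient_of_variation(stable_throughput)
cv_volatile = coefficient_of_variation(volatile_throughput)
cfr_alert = beyond_baseline(baseline_cfr, current=0.18)
cfr_normal = beyond_baseline(baseline_cfr, current=0.11)

print(f"CV stable: {cv_stable:.2f}, CV volatile: {cv_volatile:.2f}")
print(f"CFR 0.18 beyond baseline: {cfr_alert}, CFR 0.11 beyond baseline: {cfr_normal}")
```

Both teams in the sample average 10 items per sprint, which is why an average alone would hide the difference that matters for planning.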

In Axify, every metric includes trendlines so you can see how your engineering productivity evolves over time.

The value stream mapping view exposes where work accumulates and where flow remains stable. That contrast helps leaders decide whether to rebalance review capacity, adjust WIP limits, or address validation bottlenecks.

Meanwhile, the AI Impact feature helps you see if AI adoption influenced your delivery outcomes. This can help you identify whether new tooling improves your workflow or simply shifts delays to other stages.

How Often Should You Review Engineering Productivity Metrics?

Review metrics at intervals aligned to the decisions they inform. Here’s what we recommend:

- Daily or near-real-time: Use for operational control. Monitor incidents, blocked PRs, review queue time, and validation failures. Daily signals surface stalled pull requests, pickup delays, or sudden CFR spikes before they compound.

- Weekly: Use for team-level adjustments. Review cycle time distribution, rework rate, review latency, and WIP levels. Weekly reviews determine whether recent workflow changes reduced queue time or shifted bottlenecks downstream.

- Monthly or quarterly: Use for leadership planning and capital allocation. Evaluate sustained trends in throughput stability, change failure rate, cost per change, and commit reliability. At this level, the question is whether performance improvements are durable and financially meaningful.

From our experience, good insight comes from trends, and rarely from single data points. A one-day spike in cycle time doesn’t typically justify intervention, but a three-sprint upward trend does.

That’s because delivery systems fluctuate from day to day, while a sustained trend points to a structural constraint (capacity imbalance, workflow breakdown, or growing risk exposure) that will not self-correct without intervention.
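
The difference between a one-off spike and a sustained trend can be expressed as a simple rule. The sketch below assumes one cycle time value per sprint and treats three consecutive increases as "sustained"; the function name and the sample values are hypothetical.

```python
def sustained_increase(values: list[float], periods: int = 3) -> bool:
    """True if each of the last `periods` values is higher than the one before it."""
    if len(values) < periods + 1:
        return False  # not enough history to judge
    tail = values[-(periods + 1):]
    return all(later > earlier for earlier, later in zip(tail, tail[1:]))


# Average cycle time in days, one value per sprint (made-up numbers).
spike = [4.0, 4.2, 9.5, 4.1, 4.3]  # one bad sprint, then back to normal
trend = [4.0, 4.1, 4.6, 5.2, 6.0]  # three consecutive increases

print(sustained_increase(spike))  # False
print(sustained_increase(trend))  # True
```

A strict "every period is higher" rule is deliberately simple. In practice you may prefer a smoothed comparison, such as a rolling median, so that one flat sprint does not reset the signal.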

Who Should Use Engineering Productivity Metrics?

Engineering leaders, managers, and technical leads should use the same measurement system, but apply it to different decisions. Alignment comes from shared definitions of cycle time, rework, WIP, and change failure rate.

Here’s how roles rely on measurement:

- Engineering managers: Monitor bottlenecks, WIP levels, review latency, and throughput variance. Their decisions focus on capacity allocation, reviewer balance, and workflow adjustments.

- Tech leads: Use rework rate, defect density, PR size distribution, and review cycles to prioritize refactoring, reduce tech debt, and improve validation practices. Research shows poor code quality can consume up to 42% of developer time, which affects developer productivity directly. Rework and defect signals quantify that cost.

- Engineering leadership: Track commit reliability, throughput stability, cost per change, and change failure rate. These signals inform risk management, hiring decisions, and investment tradeoffs.

When rework increases or review queues expand, tech leads can justify structural fixes. When commit reliability drops or CFR rises, leadership can assess delivery risk before it affects revenue or margin.

How Should Engineering Productivity Metrics Influence Planning?

Engineering productivity metrics should directly shape planning decisions. If planning ignores measurement, forecasts remain speculative.

- Planning should adapt based on capacity trends. If sustained throughput declines across multiple sprints, your scope or timelines must adjust accordingly.

- Bottlenecks should drive prioritization. For example, if review queue time expands or validation cycles lengthen, you need to prioritize review capacity instead of adding new features.

- Forecasting should rely on historical delivery patterns. If you’re simply using averages or single data points, you might over- or under-commit.

- Improvement initiatives should tie to measurable system constraints. Before adding headcount, adopting new AI tools, or restructuring teams, examine whether the constraint is capacity, workflow design, or quality. If the bottleneck is review load or rework, new tooling or hiring may not solve it.
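
To show what forecasting from historical delivery patterns can look like, here is a minimal Monte Carlo sketch that resamples past sprint throughput instead of dividing the backlog by an average. This is one common technique, named plainly as an assumption; it is not a description of how Axify forecasts. The history, backlog size, and percentile choices are invented for the example.

```python
import random


def forecast_sprints(history, backlog, trials=5000, seed=42):
    """Simulate how many sprints the backlog takes by resampling past throughput."""
    if not history or max(history) <= 0:
        raise ValueError("history needs at least one sprint with positive throughput")
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    outcomes = []
    for _ in range(trials):
        remaining, sprints = backlog, 0
        while remaining > 0:
            remaining -= rng.choice(history)  # draw one past sprint at random
            sprints += 1
        outcomes.append(sprints)
    outcomes.sort()
    return {
        "p50": outcomes[int(0.50 * (trials - 1))],
        "p85": outcomes[int(0.85 * (trials - 1))],
    }


# Items completed in each of the last six sprints (made-up numbers).
history = [6, 8, 3, 9, 5, 7]
forecast = forecast_sprints(history, backlog=40)
print(forecast)
```

Committing to the 85th percentile instead of the median is a way to make the trade-off between confidence and delivery date explicit in planning conversations.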

When planning reflects actual system behavior, commitments become defensible and capital allocation becomes disciplined. When it doesn’t, risk accumulates until deadlines force reactive corrections.

How to Interpret Engineering Productivity Metrics

Interpreting metrics means examining which stage of your delivery pipeline changed, what operational event triggered it, and which specific rule, ownership model, or capacity constraint needs adjustment.

If you skip that step, you might mistakenly add engineers when the real issue is review pickup delay, oversized pull requests, or work sitting idle between stages.

Factors that Affect Engineering Productivity Metrics

These are the factors that typically affect engineering productivity metrics:

- Context: Metrics reflect system behavior under specific constraints. A longer cycle time during a major architectural refactor may signal deliberate investment, not inefficiency. A temporary drop in throughput during onboarding periods may reflect team growth, not declining performance. Always ask: what else changed around the same time?

- Correlation vs. causation: Two metrics moving together does not prove one caused the other. A rise in deployment frequency alongside improved lead time might suggest better automation, but it could also reflect smaller batch sizes or scope reduction. Avoid attributing causality without examining workflow changes.

- Seasonality: Delivery patterns fluctuate across release cycles, holidays, and planning cadences. Comparing a pre-release stabilization phase with a feature-heavy development period can distort conclusions. Trend analysis should account for recurring cycles.

- Change events: New release engineering policies, shifts in review ownership, or AI-assisted workflows alter system behavior. Metrics will fluctuate during adaptation. When a signal moves sharply, check for a structural change before assuming your engineering productivity slipped.

- Organizational shifts: Team restructuring, new leadership, or evolving product strategy affect coordination and team health. These shifts can negatively influence cycle times and stability before process changes take effect.

Applying these filters helps you avoid reacting to temporary fluctuations and instead focus on whether a real workflow constraint requires intervention.

Examples of How to Interpret Engineering Productivity Metrics

Let's consider a cycle time spike.

A sudden increase in cycle time does not automatically mean developers are slowing down. It means work is spending more time somewhere in the system.

- Start by breaking cycle time into its components: Is time increasing in review? In testing? In waiting for clarification? In deployment queues?

- Then check recent changes. Was a new approval layer added? Did WIP increase? Did the team take on larger batch sizes? Did dependencies grow?

If review queues expanded, the constraint may be limited reviewer capacity. If aging work is increasing, WIP limits may be too high. If testing time grew, environment stability may be the issue.
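
The breakdown described above can be sketched as a comparison of time spent per stage across two periods. The stage names and hour values are hypothetical; use whichever stages your own pipeline records.

```python
# Hypothetical average hours per stage for two consecutive periods.
previous = {"coding": 18.0, "review": 10.0, "testing": 8.0, "deploy_queue": 2.0}
current = {"coding": 19.0, "review": 26.0, "testing": 9.0, "deploy_queue": 2.0}


def stage_deltas(before, after):
    """Hours added per stage, largest increase first."""
    deltas = {stage: after[stage] - before[stage] for stage in before}
    return sorted(deltas.items(), key=lambda item: item[1], reverse=True)


deltas = stage_deltas(previous, current)
total_increase = sum(current.values()) - sum(previous.values())
top_stage, top_delta = deltas[0]

print(f"Cycle time grew by {total_increase:.0f}h")
print(f"Largest contributor: {top_stage} (+{top_delta:.0f}h)")
```

In this made-up sample, review accounts for 16 of the 18 added hours, so the next question would be about reviewer capacity or pull request size, not about coding speed.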

The goal is not to push teams to “move faster,” but to identify the constraint and reduce friction at that point.

Next, let's consider rising throughput with declining quality.

Higher throughput with a rising change failure rate or increased defect volume signals imbalance.

Before reacting, ask:

- Did batch size shrink or grow?

- Were quality gates relaxed?

- Was deployment frequency increased without strengthening automated testing?

Sometimes, teams increase output by reducing validation effort or compressing review time. That may temporarily improve delivery speed but increase rework and incident recovery time later.

The right response is to rebalance flow and reliability. That might mean strengthening CI coverage, adjusting WIP limits, or reinforcing review standards.

Finally, examine worsening predictability.

Predictability declines when planned work diverges from delivered work. That can happen even if throughput remains stable.

Start by examining variance patterns:

- Are estimates consistently off?

- Are interruptions increasing?

- Is unplanned work growing?

Worsening predictability may signal unstable prioritization, unclear scope, or frequent context switching.
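
A small sketch can show how predictability declines while throughput stays flat. The definitions used here (commit reliability as planned items delivered divided by planned items, and unplanned share as unplanned items divided by everything delivered) are assumptions made for the example, as are the sprint numbers.

```python
# Made-up sprint records: items planned, planned items delivered, unplanned items delivered.
sprints = [
    {"planned": 20, "delivered_planned": 18, "unplanned": 2},
    {"planned": 20, "delivered_planned": 15, "unplanned": 5},
    {"planned": 20, "delivered_planned": 12, "unplanned": 8},
]


def commit_reliability(sprint):
    """Share of planned work that was actually delivered (assumed definition)."""
    return sprint["delivered_planned"] / sprint["planned"]


def unplanned_share(sprint):
    """Share of delivered work that was not in the plan (assumed definition)."""
    delivered = sprint["delivered_planned"] + sprint["unplanned"]
    return sprint["unplanned"] / delivered


throughput = [s["delivered_planned"] + s["unplanned"] for s in sprints]
reliability = [round(commit_reliability(s), 2) for s in sprints]
unplanned = [round(unplanned_share(s), 2) for s in sprints]

print("throughput: ", throughput)
print("reliability:", reliability)
print("unplanned:  ", unplanned)
```

Throughput holds at 20 items in every sprint of this sample, yet reliability falls as unplanned work grows, which is the pattern a throughput chart alone would miss.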

Instead of increasing pressure to “commit harder,” leaders should reduce volatility. That may involve limiting parallel initiatives, improving backlog refinement, or protecting teams from mid-sprint priority shifts.

Remember: Predictability improves when the system becomes stable, not when expectations become stricter.

Measure Your Engineering Productivity with Axify

Measuring engineering productivity shouldn’t be done for its own sake. It should improve how you plan, prioritize, and intervene.

Having visibility is great, but using visibility to make the right decisions is better.

That’s what Axify helps you do.

With Axify, you connect delivery signals to bottlenecks, capacity constraints, and workflow policies, so trade-offs become explicit, and intervention becomes targeted. Instead of debating metrics, you evaluate causes, compare impact, and adjust with discipline.

With Axify Intelligence, you go a step further.

This AI decision-making partner gives you AI-driven insights based on your actual delivery history. You can explore root causes, ask questions through the AI assistant, and apply recommended actions directly. Visibility turns into guided intervention.

Book a demo with Axify to see how your delivery data can guide the next decision with clarity and confidence.

FAQs

What is engineering productivity?

Engineering productivity is your organization’s ability to convert engineering effort into reliable, timely, and valuable software outcomes. It reflects delivery speed, stability, predictability, and quality across the full development system.

How do you calculate engineering productivity?

In theory, productivity is calculated as output divided by input, such as value delivered relative to engineering time or cost. In practice, software teams rarely use a single formula. Instead, they assess productivity through system-level indicators like delivery flow, stability, and predictability together, then tie those signals to planning and workflow decisions.

What metrics are most commonly used to measure engineering productivity?

The most commonly used metrics include deployment frequency, lead time for changes, change failure rate, and time to restore service. Axify also tracks flow efficiency, review delays, work-in-progress distribution, bottlenecks, and the impact of the AI tools you implement.

Are DORA metrics enough to measure engineering productivity?

No, DORA metrics alone are not enough because they show performance outcomes but not underlying workflow constraints. You still need flow visibility, bottleneck analysis, and structured interpretation to guide intervention decisions.

What is the best tool for measuring engineering productivity?

The best tool is one that combines metric visibility, system-level analysis, and actionable insight. Axify covers all three with delivery dashboards, structured assessments, and AI-driven recommendations grounded in your internal delivery data.

How often should engineering productivity be reviewed?

Engineering productivity should be reviewed at different intervals: daily for flow disruptions, weekly for team adjustments, and monthly or quarterly for leadership planning. Trends over time matter more than isolated data points.
