Some software teams adopted AI tools faster than they built a way to measure impact.

If you implemented AI assistants but release plans still miss, handoffs still slow teams down, and coordination still eats time, that’s usually the first sign your AI metrics are tracking activity, not delivery behavior.

This article closes that gap. We’ll treat AI as a change to your delivery system and measure its effects on flow, cost, risk, and decisions. You will learn how to determine whether AI usage reduced cycle time, lowered rework, stabilized throughput, or simply increased output without improving delivery reliability.

Let's get started.

Pro tip: If you can’t explain where AI improved delivery in one sentence, you’re not measuring it correctly. Axify gives you visibility automatically, recommends what to fix next, helps you implement changes, and lets you consult with an AI assistant. Try Axify today and move from AI activity to controlled delivery improvement.

Tl;dr: The Axify AI Impact Framework in a Nutshell

Why AI Measurement Fails in Many Organizations Today

Most AI measurement fails because it stops at tool usage and tool performance.

Teams track prompts, seats, sessions, feature clicks, or model benchmark scores. Those signals can tell you adoption is happening, but they do not tell you whether:

- PRs merged faster or just arrived in larger batches

- Review queues shrank or reviewers became the new bottleneck

- Rework dropped or defects moved downstream

- Incident volume or rollback frequency changed over the same calendar period

- Delivery became more predictable from plan to release

Benchmark scores and feature metrics also don’t capture system effects. They won’t tell you whether cycle time decreased, whether review delays shortened, or whether defect escape rates changed. Usage dashboards show activity, but they don’t explain impact.

That’s why leaders end up with a familiar pattern: more AI activity, unclear delivery improvement, and harder prioritization.

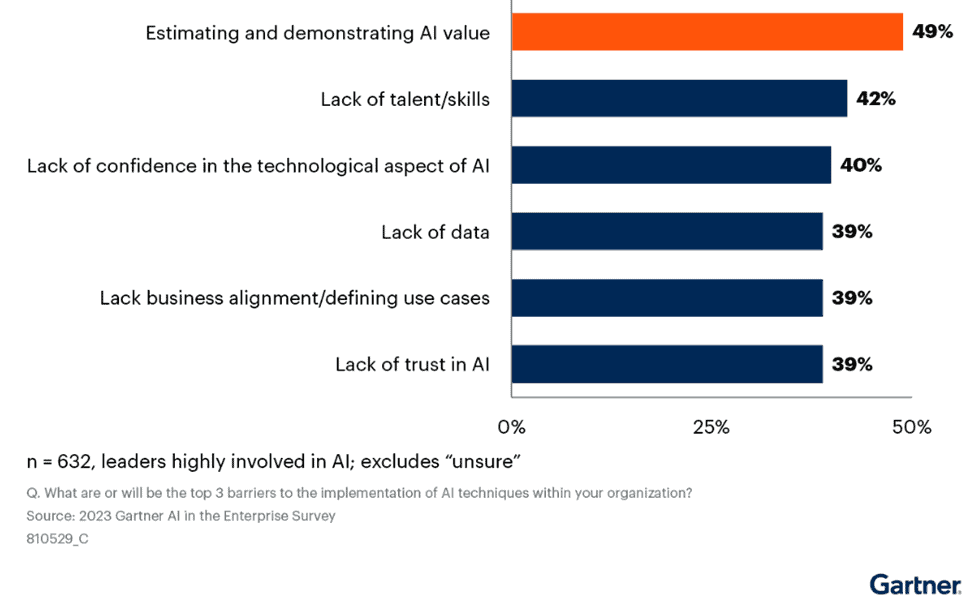

This gap is widely recognized. Gartner reports that 49% of business leaders say proving AI business value is their biggest adoption challenge.

Source: Gartner

That number reflects the same problem you face: measurement and evaluations focus on narrow, tool-related metrics and not on the macro level: delivery flow, cost structure, or risk.

When that visibility is missing, decision-making becomes harder. You can’t decide where to invest, what to standardize, or what to roll back based on adoption signals alone.

That brings us to the next point.

What Does “Real Business Impact” Mean in AI?

Real business impact from AI is reflected in ROI, faster time to market, lower cost of delivery, and reduced risk exposure.

But those financial outcomes appear only when delivery mechanics change.

AI does not create ROI directly. It changes how work moves through planning, development, review, validation, and release. Those changes alter lead time, rework, coordination load, and production stability. Financial impact is downstream.

That’s why we advise you to look beyond narrow tool-related AI metrics; after all:

- If model accuracy improves but lead time stays flat, time to market does not improve.

- If output increases but change failure rate rises, margins compress.

- If PR volume grows but review queues expand, planning accuracy degrades.

None of those scenarios produces durable ROI.

To connect AI to business results, you need a clear chain:

Delivery mechanics → Operational outcomes → Financial impact

For example:

- Shorter cycle time and stable throughput → faster release cadence → earlier revenue realization

- Lower rework and defect escape → fewer incidents and rollbacks → reduced support cost and risk

- Reduced coordination overhead → lower cost per change → improved return on engineering spend

Unfortunately, industry surveys often stop at outcome labels, too.

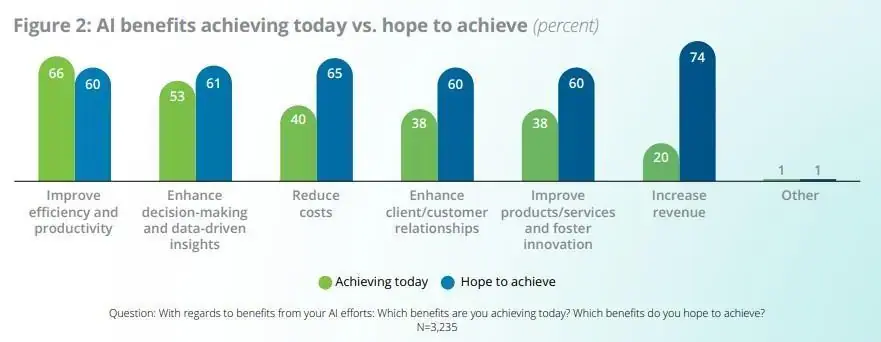

Deloitte’s 2026 AI report notes that 66% of organizations report productivity gains, 53% report better decision-making, and 40% cite cost reductions.

Source: Deloitte’s 2026 AI report

These are results categories, not causal explanations. Without delivery signals underneath them, attribution remains weak.

AI should be evaluated as a structural change inside the delivery system. It modifies how code is produced, reviewed, and released. That is where measurable impact begins.

Pro tip: AI also acts as an amplifier. In a stable workflow, it reduces wait time and rework. In a constrained system, it increases PR volume, review queues, and downstream defects. Business impact depends on which effect dominates.

Unpopular Opinion: Traditional AI Metrics Fail to Predict Business Value

Most AI reporting looks rigorous, yet it rarely explains why your delivery system improved or deteriorated. The problem is not data scarcity but misaligned AI metrics.

These are the three patterns that distort how you interpret impact.

1. Model Benchmarks Don’t Map to Operations

Accuracy, BLEU, F1 scores, or latency improvements in large language models describe technical capability, but they don't explain operational effect. A model can generate higher-quality code suggestions, yet lead time for changes may remain unchanged if review queues or approval policies stay the same.

Also, lower response latency does not reduce rework if generated changes still require significant correction. Technical gains only matter when they change delivery flow, cost, or risk.

2. Usage Metrics Are Not Value Metrics

Seats activated, sessions opened, prompts submitted, or tokens consumed tell you who is using the tool and how. They do not tell you whether the work reached production faster or with fewer defects.

High usage may reflect experimentation but not improvement. We think usage becomes meaningful only when you connect it to shifts in system-level engineering metrics, like cycle time, deployment frequency, or defect escape rate.

3. Output Metrics Hide Second-Order Effects

Your output volume can increase while your system health declines. The DORA 2025 report notes that AI can amplify existing practices, for better or worse. If workflow discipline is weak, AI-assisted engineering can intensify that weakness. For example, you can have:

- Speed gains that increase rework.

- Automation that creates coordination debt.

In those cases, metrics that look positive in isolation become misleading. Measured without context, the following typically act as “vanity” indicators:

- Time saved.

- Tasks automated.

- % AI-generated.

Without linking them to system metrics, they obscure tradeoffs instead of clarifying impact.

This leads us to the next point.

The True Four Dimensions of AI Business Impact: The Axify Framework

To assess the impact AI tools have on software development, teams need a structured measurement lens. The framework below evaluates how AI adoption influences software delivery across four dimensions that shape engineering performance and leadership decisions: delivery speed, cost structure, system stability, and decision quality.

1. Delivery Impact Metrics

Delivery impact metrics show how AI changes flow, speed, stability, and throughput across your system. Instead of simply evaluating tool features, you compare performance before and after AI adoption and observe whether delivery behavior shifts in measurable ways.

Pro tip: Before tracking system-wide impact, set clear expectations. The first changes appear at the lowest level of the delivery loop, and they are not always improvements.

“When teams adopt AI, the first measurable impact shows up at the pull request level, not at the issue or delivery level. It can even have a negative effect at first, as more code creates pressure on review, testing, and deployment. As teams adapt, the impact becomes visible at higher levels like issues and overall delivery.”

Pierre Gilbert

Software Delivery Expert, Axify

In practice, PR throughput, PR size, and review time are usually the first metrics to change. Metrics like cycle time or lead time take longer to improve because more code initially creates pressure on downstream steps in the delivery process.

This is where Axify can also help.

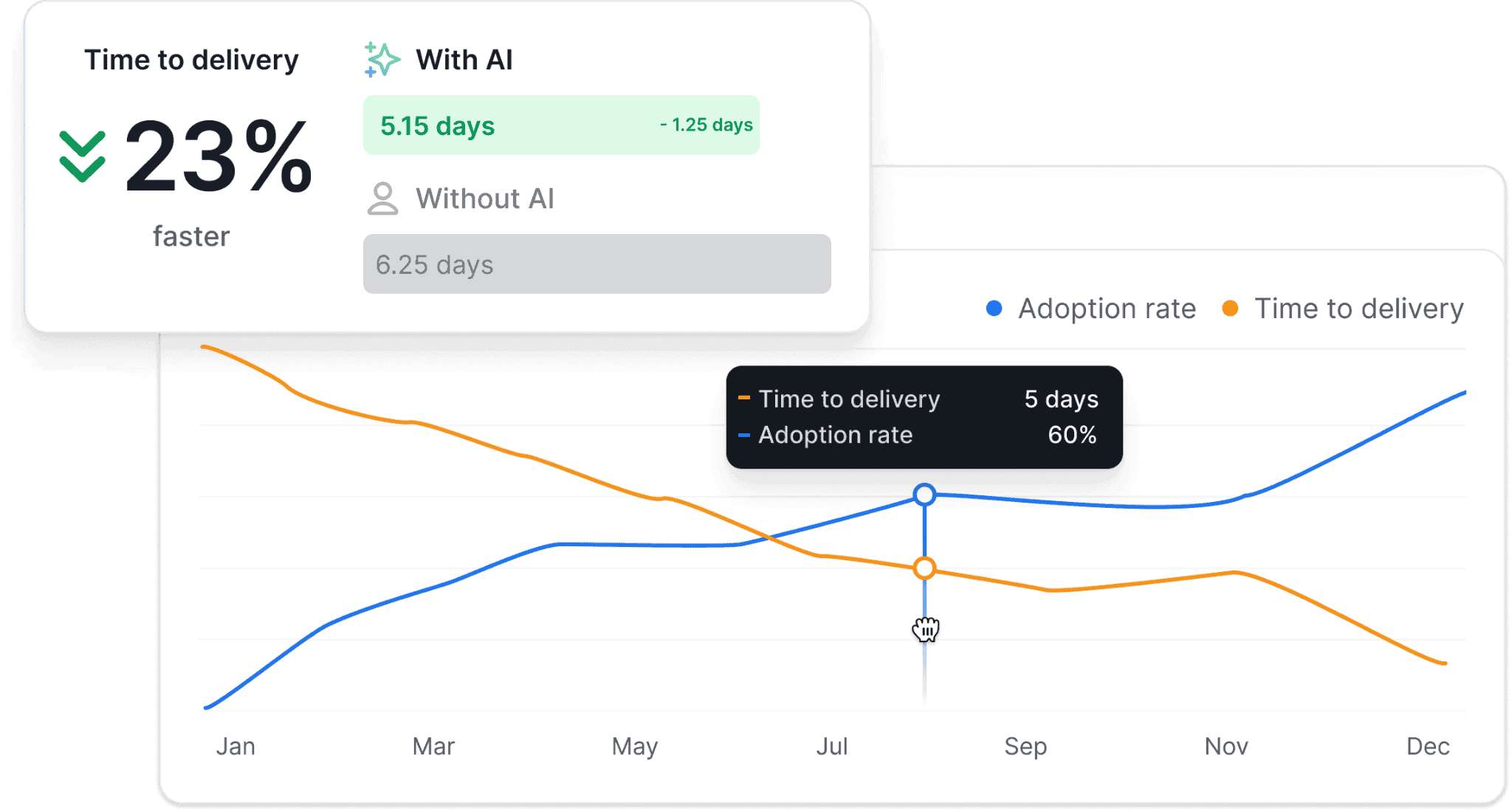

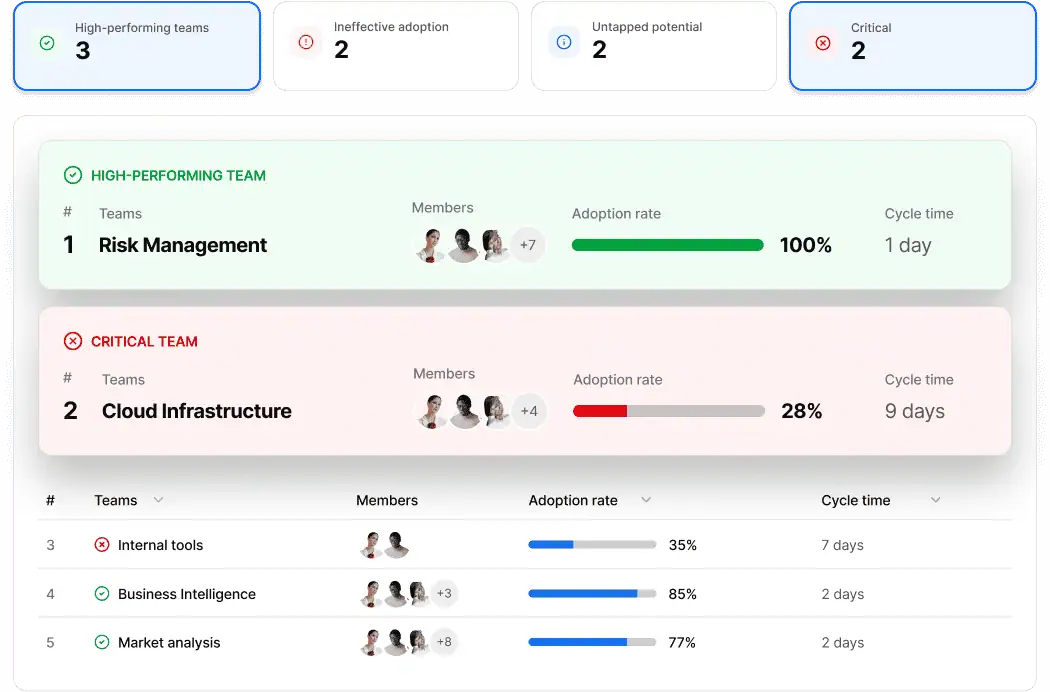

Through our AI Impact capability, you can correlate AI adoption levels with changes in core process metrics and compare cohorts with and without AI support.

That said, you should focus on:

- Time to delivery delta: Compare the elapsed time between a change being committed and the moment it reaches production before and after AI adoption. If coding becomes faster but testing, approvals, or deployment gates remain unchanged, this metric may not improve. A reduction shows that faster development translates into faster releases. As you can see in the image above, Axify’s AI Impact feature helps track this delta and identify where delivery delays still occur.

- Cycle time delta: Compare total elapsed time from work start to completion before and after AI adoption. A reduction signals faster flow, but a flat result or an increase suggests bottlenecks remain elsewhere.

- Lead time delta: Track the full elapsed time from the moment a stakeholder submits a request to the point when the change is live in production and usable by the end user. If AI shortens coding but approval policies remain unchanged, lead time may not improve.

- PR review latency (pre- vs. post-AI): This is usually where AI impact becomes visible first, as more or larger PRs can quickly slow down reviews. Compare the median time from PR open to merge. If AI-generated changes increase review load, queue time rises. Any reduction in coding time may be absorbed by review delay, leaving end-to-end cycle time unchanged.

- Flow efficiency delta: Evaluate the ratio of active work to waiting time before and after AI. If AI reduces waiting states in review, testing, or handoffs, flow efficiency should increase. If PR volume rises and queues expand, waiting time grows and flow efficiency declines. This exposes whether AI reduced idle time or introduced coordination friction.

- Deployment frequency shift: Track production deployment frequency alongside validation gate outcomes (test pass rate, rollback rate, change failure rate). An increase in deployment frequency only indicates improvement if CFR and rollback frequency remain stable. If release cadence rises but defects escape more often, risk increased.

- Delivery stability delta: Measure variance in throughput and completion rate over time. AI-driven output spikes followed by slowdowns indicate batch buildup or downstream rework. Stable throughput with lower cycle time reflects systemic improvement. Stability is required for planning accuracy and reliable forecasting.

These metrics connect directly to business value because they reflect how reliably work moves through your pipeline. When AI increases output but destabilizes deployment metrics, you face planning risk.

But when AI adoption correlates with lower cycle time and steady throughput, you gain predictable delivery. That is a measurable impact.
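As a rough illustration of the pre/post comparison, the sketch below computes two of the deltas above, median PR review latency and flow efficiency, from plain lists of numbers. The sample values, the function names, and the choice of hours as the unit are assumptions made for this example; they are not Axify’s implementation or data.

```python
from statistics import median


def median_delta(pre, post):
    """Return (pre_median, post_median, relative_change) for two samples."""
    pre_m, post_m = median(pre), median(post)
    return pre_m, post_m, (post_m - pre_m) / pre_m


def flow_efficiency(active_hours, waiting_hours):
    """Share of total elapsed time spent on active work (0.0 to 1.0)."""
    return active_hours / (active_hours + waiting_hours)


# Hypothetical PR open-to-merge times in hours, before and after AI adoption.
pre_ai_review_hours = [5, 8, 12, 20, 30]
post_ai_review_hours = [6, 10, 18, 26, 40]

pre_m, post_m, change = median_delta(pre_ai_review_hours, post_ai_review_hours)
print(f"Median review latency: {pre_m}h -> {post_m}h ({change:+.0%})")

# Hypothetical active vs. waiting hours for a comparable unit of work.
pre_fe = flow_efficiency(active_hours=30, waiting_hours=70)
post_fe = flow_efficiency(active_hours=24, waiting_hours=96)
print(f"Flow efficiency: {pre_fe:.0%} -> {post_fe:.0%}")
```

In this invented data, active work shrinks while review latency and waiting time grow, so flow efficiency falls even though coding got faster. That is the pattern the review latency and flow efficiency bullets warn about.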

2. Cost Impact

Cost impact measures whether AI reduces the cost per validated change, not whether it increases output.

Speed alone does not lower cost. Cost improves only if AI changes effort distribution without increasing downstream correction, coordination, or risk.

Assess cost using these signals:

- Cost per unit of output: Compare total engineering spend to validated releases or production changes over a defined window (for example, 90 days pre- vs. post-AI). If feature volume rises but review time, incident response, and coordination effort rise proportionally, unit economics remain flat.

- Rework cost: Measure hours spent rewriting AI-generated code, addressing review feedback, reopening tickets, and fixing escaped defects. If review and correction time increases, initial coding speed gains are absorbed downstream.

Side note: In fact, recent data shows developers now spend about 9% of their total time reviewing and correcting AI-generated code instead of building new functionality. If that time displaces net-new delivery, the cost structure does not improve.

- Coordination cost: Track time from PR open to merge, number of review cycles, and cross-team clarification threads. If AI increases PR size or ambiguity, review cycles expand and coordination overhead rises. That overhead is labor cost.

- Support and maintenance cost: Measure time spent maintaining prompts, configuring tools, managing updates, and onboarding teams. This is operational overhead that affects cost per change.

- Validation overhead: Track additional review layers or extended QA cycles introduced to verify AI output. If validation gates expand, labor cost increases even if coding time decreases.

- Tooling cost and overlap: Audit license spend and duplicated tooling across teams. Parallel AI tools with overlapping functionality dilute ROI.

If AI output requires heavier review, correction, or parallel workflows, the total amount of work increases. To see cost improvements, you need a net reduction in effort across the entire lifecycle, not isolated gains in code generation.
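To make the unit-economics point concrete, here is a minimal sketch of cost per validated change over two comparison windows. The spend figures, the change counts, and the function name are hypothetical, and a real calculation would need your own definition of “validated change.”

```python
def cost_per_validated_change(engineering_spend, tooling_spend, validated_changes):
    """Total spend divided by changes that passed validation and reached production."""
    if validated_changes <= 0:
        raise ValueError("validated_changes must be positive")
    return (engineering_spend + tooling_spend) / validated_changes


# Hypothetical 90-day windows; all figures are invented for illustration.
pre_cost = cost_per_validated_change(
    engineering_spend=900_000, tooling_spend=0, validated_changes=300
)
post_cost = cost_per_validated_change(
    engineering_spend=900_000, tooling_spend=30_000, validated_changes=310
)

print(f"Pre-AI:  {pre_cost:,.0f} per validated change")
print(f"Post-AI: {post_cost:,.0f} per validated change")
```

Here, validated output rises slightly, but license spend rises with it, so the cost per validated change stays flat. More output alone would have looked like a win.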

As Pierre Gilbert explains, the real impact of AI depends on how efficiently work moves through the delivery pipeline.

“One of the most overlooked factors in AI adoption is flow efficiency. I’ve seen many organizations accelerate coding with AI, only to create new bottlenecks in reviews, testing, or deployment. When that happens, the total effort doesn’t actually decrease because the work just moves downstream. The teams that truly benefit from AI are the ones that optimize how work flows across the entire delivery system.”

Pierre Gilbert

Software Delivery Expert, Axify

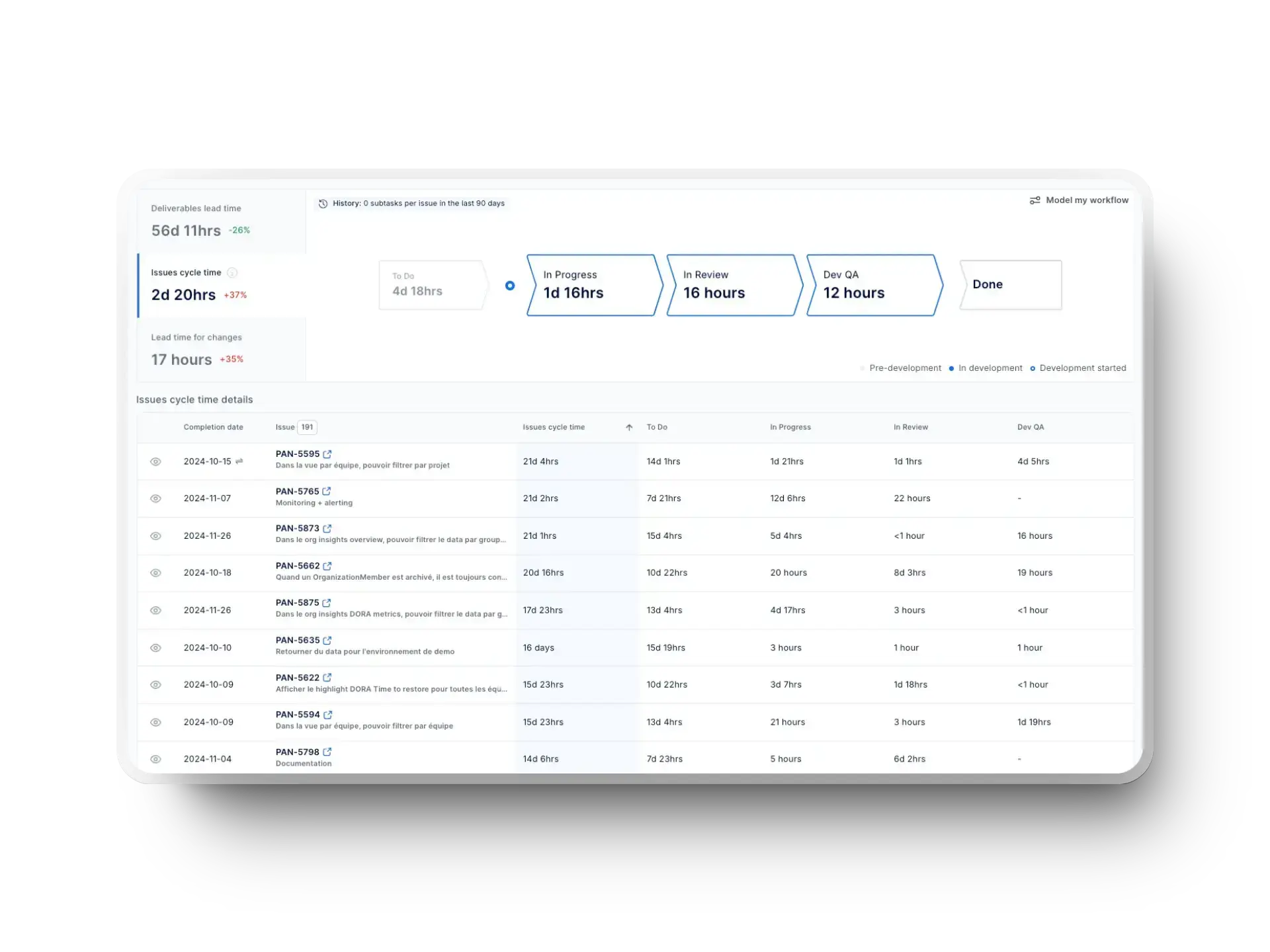

And if you’re tracking flow efficiency, you can use our Value Stream Mapping feature, which gives you a clean view of your operations and value creation across SDLC phases.

3. Risk Impact

Risk impact measures how AI affects your operational risk profile: specifically, whether it raises or lowers failure probability, defect escape, and recovery time, and whether delivery becomes more or less predictable.

Pro tip: With Axify’s AI Impact capabilities, you can correlate AI adoption levels with change failure rate, rollback frequency, throughput variance, and MTTR. This lets you determine whether increased AI usage coincides with stable delivery or rising operational risk.

You can identify teams where AI reduces rework and stabilizes throughput, standardize effective patterns, and intervene where failure rates or review load increase.

Remember: Higher throughput is not an improvement if failure rates, rollback frequency, or recovery time increase. Risk exposure must be evaluated alongside flow and cost to determine whether AI strengthens or destabilizes the system.

In Axify’s framework, you assess risk through measurable deltas before and after AI adoption:

- Change failure rate: Measure the percentage of deployments that result in degraded service, hotfixes, or rollbacks. If AI increases deployment volume but CFR rises, operational risk increases. Throughput gains do not offset production instability.

- Rollback frequency: Track how often production changes are reverted. An increase indicates unstable integration, inadequate review, or weak validation of AI-generated changes.

- Defect density (AI-assisted vs. non-assisted): Compare defect rates between AI-assisted changes and manually authored changes. This isolates whether AI reduces or introduces defects instead of blending both into overall defect metrics.

- Incident recovery time (MTTR): Measure time to restore service after failure. If AI-generated changes increase incident frequency and recovery time remains flat or worsens, total exposure increases. Faster MTTR after AI implementation can partially offset higher failure frequency, but only if it remains consistently low.

- Throughput variance: Measure variance in completed work over time. Large swings can indicate batch buildup, rework spikes, or unstable review capacity. High variance reduces forecast accuracy and increases delivery risk.

- Plan vs. actual completion rate: Compare committed work to completed work within a sprint or release window. If AI increases output but completion predictability declines, planning reliability degrades.
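The sketch below shows one way to compute change failure rate and split it by AI-assisted versus manually authored changes, applying the same cohort split the defect density bullet describes. How a change gets tagged as AI-assisted is an assumption here, as are the `Deployment` type and the sample log.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    ai_assisted: bool  # assumption: each change is tagged at merge time
    failed: bool       # caused degraded service, a hotfix, or a rollback


def change_failure_rate(deployments):
    """Fraction of deployments that failed; 0.0 for an empty list."""
    if not deployments:
        return 0.0
    return sum(d.failed for d in deployments) / len(deployments)


# Hypothetical deployment log.
log = [
    Deployment(ai_assisted=True, failed=False),
    Deployment(ai_assisted=True, failed=True),
    Deployment(ai_assisted=True, failed=False),
    Deployment(ai_assisted=True, failed=True),
    Deployment(ai_assisted=True, failed=False),
    Deployment(ai_assisted=False, failed=False),
    Deployment(ai_assisted=False, failed=True),
    Deployment(ai_assisted=False, failed=False),
    Deployment(ai_assisted=False, failed=False),
    Deployment(ai_assisted=False, failed=False),
]

overall_cfr = change_failure_rate(log)
ai_cfr = change_failure_rate([d for d in log if d.ai_assisted])
manual_cfr = change_failure_rate([d for d in log if not d.ai_assisted])
print(f"Overall {overall_cfr:.0%}, AI-assisted {ai_cfr:.0%}, manual {manual_cfr:.0%}")
```

The blended rate hides the gap between the two cohorts, which is why the split is worth keeping. With samples this small, treat any difference as a prompt to investigate, not a conclusion.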

4. Decision Impact

Decision impact refers to how AI affects your decision-making process: specifically, whether it makes planning and execution decisions easier. Remember that faster output does not automatically lead to better decisions.

What matters is whether you can:

- Commit to delivery dates with defensible assumptions

- Detect constraints early enough to prevent schedule slip

- Allocate capacity based on observed bottlenecks instead of anecdotes

In Axify’s AI measurement framework, decision impact is measured using the following indicators (before and after AI implementation):

- Commit reliability: Compare what the team planned to deliver at the start of a sprint or release with what was actually shipped. Look at two things: how much of the planned work was completed, and how far off the plan was when work was missed. If AI leads to more activity but a larger gap between planned and delivered work, it means your planning assumptions are becoming less reliable.

- Forecast drift: Compare planned delivery dates with actual delivery dates, and track how often and by how much they change. If you notice big, frequent differences between the two, it means your planning assumptions are unstable. If dates stay consistent, your planning is more reliable.

- Mid-cycle scope churn: Compare how much work is added, removed, or changed after execution starts, before and after AI adoption. Higher churn after AI is a negative sign: output may be failing review, or upstream validation may be weak. Lower churn post-AI is a good sign: AI may be improving discovery, clarification, or implementation fit.

- Intervention latency: Measure the time between detecting a problem and taking action, before and after AI rollout. Define both the trigger (e.g., review queue grows, WIP exceeds limits, failure rate increases) and the response (e.g., reassign reviewers, split PRs, adjust WIP, add test capacity). Shorter response times post-AI mean issues are handled faster; longer response times mean AI isn’t helping you decide.

- Decision signal quality: Compare how stable your delivery metrics are before and after AI. Look at variation in cycle time, review queue time, failure rate, and recovery time. If variation increases post-AI, decisions rely on less predictable data. If variation decreases, decisions are based on more consistent delivery behavior.

- Decision reversals: Compare how often initiatives are paused, rolled back, or significantly changed due to delivery issues or risk, before and after AI adoption. If reversals decrease post-AI, your decisions are more reliable, and vice versa.

These indicators show whether delivery is predictable and whether planning decisions are reliable.

- If AI increases output but also increases forecast changes or mid-cycle rework, your delivery becomes harder to predict. As such, risk increases.

- You want AI to reduce planning changes, improve how often teams meet commitments, and shorten the time to react to problems. In this scenario, you can plan with more confidence and don’t need large safety buffers.
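Two of the indicators above, commit reliability and forecast drift, reduce to simple arithmetic. The following is a minimal sketch under assumed inputs: work items identified by hypothetical IDs, and drift defined as the mean absolute gap in days between planned and actual dates. Your own definitions may differ.

```python
from datetime import date
from statistics import mean


def commit_reliability(planned_ids, shipped_ids):
    """Fraction of planned items that actually shipped in the window."""
    planned = set(planned_ids)
    if not planned:
        raise ValueError("planned_ids must not be empty")
    return len(planned & set(shipped_ids)) / len(planned)


def forecast_drift_days(planned_dates, actual_dates):
    """Mean absolute gap, in days, between planned and actual delivery dates."""
    if not planned_dates or len(planned_dates) != len(actual_dates):
        raise ValueError("date lists must be non-empty and the same length")
    return mean(abs((a - p).days) for p, a in zip(planned_dates, actual_dates))


# Hypothetical sprint: four items planned, two of them shipped, one unplanned item shipped.
reliability = commit_reliability({"A", "B", "C", "D"}, {"A", "B", "E"})

# Hypothetical planned vs. actual delivery dates for two initiatives.
drift = forecast_drift_days(
    [date(2025, 3, 1), date(2025, 3, 15)],
    [date(2025, 3, 4), date(2025, 3, 15)],
)
print(f"Commit reliability: {reliability:.0%}, forecast drift: {drift} days")
```

Note that the unplanned item “E” does not raise commit reliability. Counting it would reward activity instead of kept commitments.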

One question remains: how do you respond to AI’s impact on your team performance?

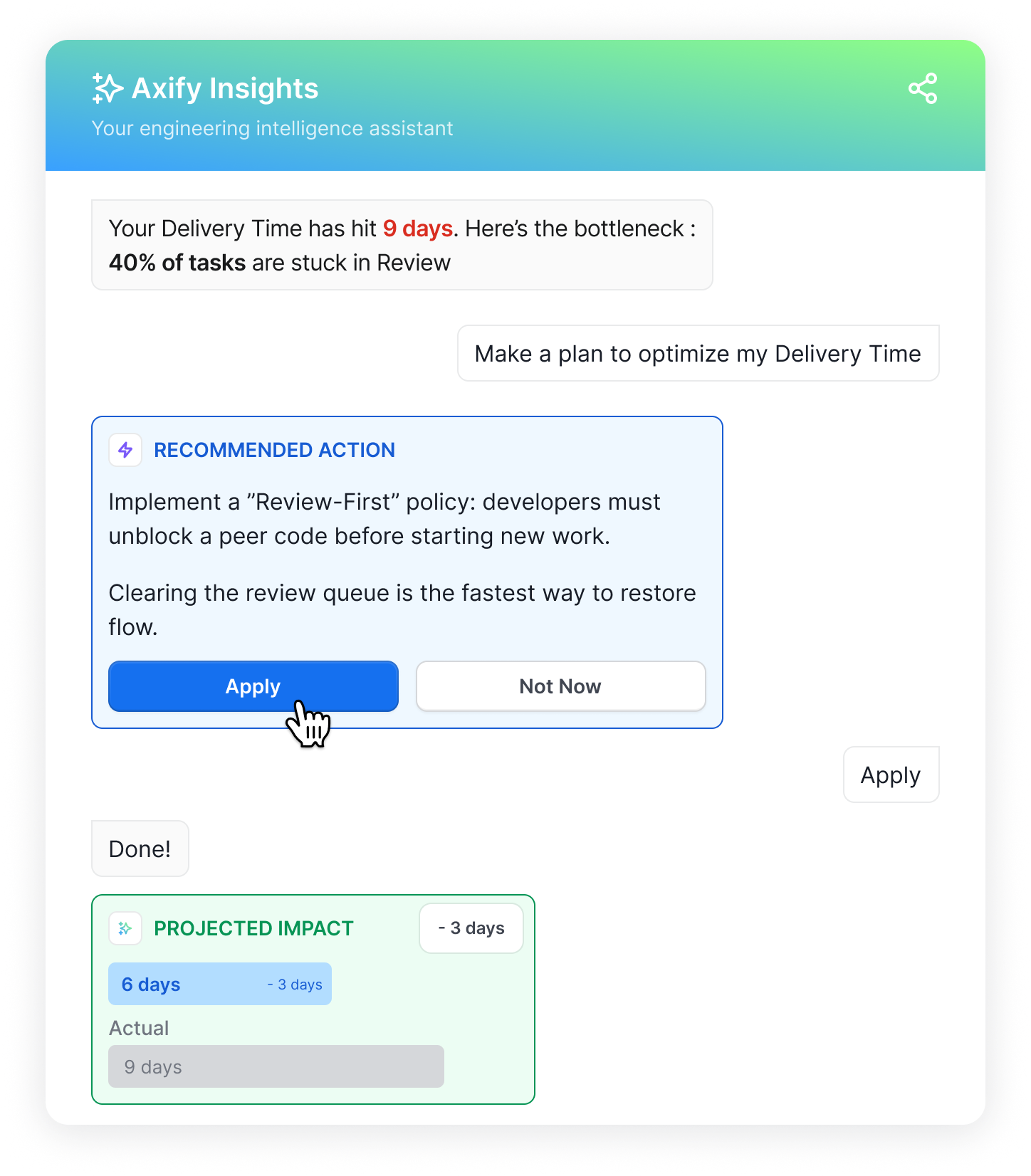

Axify Intelligence is the tool you need to turn delivery data into concrete actions.

It identifies bottlenecks, shows you actionable insights based on historical data, and recommends specific adjustments to the workflow.

Engineering leaders can implement these recommendations directly from the platform.

Because Axify uses your actual delivery data, the recommendations reflect how your team works. This allows faster response when AI affects delivery speed, cost, or risk.

How to Measure AI’s Real Impact

Measuring AI’s real impact requires structure: disciplined comparison and system-level observation.

Here are the steps we encourage you to follow.

1. Establish Pre-AI Baselines

Document delivery performance before adoption:

- Cycle time

- Lead time for changes

- Change failure rate

- Rework rate (reopened PRs, defect correction time)

- Throughput variance

Without a defined baseline window, improvement claims are not verifiable.
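One way to make the baseline verifiable is to fix the window in code and freeze a snapshot of it. The sketch below assumes a 90-day window and a simple record shape with `merged_on`, `cycle_days`, and `reopened` fields. Those names, dates, and records are illustrative, not a required schema.

```python
from datetime import date
from statistics import median

# Assumption: a fixed 90-day window that ends before the AI rollout starts.
BASELINE_START = date(2025, 1, 1)
BASELINE_END = date(2025, 3, 31)

# Hypothetical merged changes.
changes = [
    {"merged_on": date(2025, 1, 10), "cycle_days": 4, "reopened": False},
    {"merged_on": date(2025, 2, 3), "cycle_days": 6, "reopened": True},
    {"merged_on": date(2025, 3, 20), "cycle_days": 5, "reopened": False},
    {"merged_on": date(2025, 4, 2), "cycle_days": 2, "reopened": False},  # outside window
]


def baseline_snapshot(records, start, end):
    """Summarize changes merged inside [start, end] as a frozen baseline."""
    in_window = [r for r in records if start <= r["merged_on"] <= end]
    if not in_window:
        raise ValueError("no changes inside the baseline window")
    return {
        "window": (start, end),
        "changes": len(in_window),
        "median_cycle_days": median(r["cycle_days"] for r in in_window),
        "rework_rate": sum(r["reopened"] for r in in_window) / len(in_window),
    }


baseline = baseline_snapshot(changes, BASELINE_START, BASELINE_END)
print(baseline)
```

Storing the window alongside the numbers lets anyone reproduce the baseline later instead of trusting a remembered figure.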

2. Introduce AI in Controlled Scopes

Roll out AI in defined teams, repositories, or workflow stages rather than across the organization at once. This limits confounding variables and allows you to isolate whether changes in your engineering metrics correlate with AI usage.

3. Track Adoption Separately From Impact

Adoption does not equal impact. More interestingly, executive adoption is faster than employee adoption.

In fact, Business Insider reports executive AI usage at 87%, compared to 27% among employees. That gap creates a risk: leadership’s perception of progress can differ sharply from actual adoption. And even where usage is real, it doesn’t necessarily produce measurable changes in delivery.

As such, we encourage you to:

- Track adoption signals, such as usage frequency, acceptance rate of AI-generated changes, and contribution to merged PRs.

- Also monitor system outcomes like cycle time, rework, and CFR.

- If usage rises but delivery metrics remain unchanged, AI is active but not yet improving the system.
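The separation between adoption and impact can be sketched as two independent relative changes that are only combined at the end. The 5% threshold, the labels, and the sample numbers below are assumptions for illustration; pick a threshold that matches the normal noise in your own metrics.

```python
def relative_change(before, after):
    """Relative change from before to after, e.g. 0.10 for a 10% increase."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before


def adoption_vs_impact(usage_before, usage_after, cycle_before, cycle_after,
                       threshold=0.05):
    """Label a period by comparing the adoption trend with the cycle time trend."""
    usage_change = relative_change(usage_before, usage_after)
    cycle_change = relative_change(cycle_before, cycle_after)
    if usage_change <= threshold:
        return "no meaningful adoption change"
    if cycle_change < -threshold:
        return "adoption coincides with faster delivery"
    if cycle_change > threshold:
        return "adoption coincides with slower delivery"
    return "active, not yet improving delivery"


# Hypothetical quarter: share of merged PRs with AI contribution rose from 20% to 60%,
# while median cycle time moved from 5.0 to 5.1 days.
status = adoption_vs_impact(0.20, 0.60, 5.0, 5.1)
print(status)
```

The labels say “coincides with” on purpose: this check flags a pattern worth investigating and does not establish that AI caused the change.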

4. Compare Cohorts

Use both pre/post comparisons and parallel team comparisons. Evaluate:

- AI-enabled teams vs. non-enabled teams

- Same team before vs. after adoption

Control for team size and scope where possible. If differences persist after normalization, AI is a plausible contributing factor.
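One common way to combine the two comparisons is a difference-in-differences estimate: the change in the AI-enabled team minus the change in a comparable non-enabled team over the same period. The article does not prescribe this technique; it is offered here as one option, and the cycle times below are invented.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """(Change in treated group) minus (change in control group), using means."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical cycle times in days.
ai_team_pre = [6, 7, 8]
ai_team_post = [4, 5, 6]
control_team_pre = [6, 6, 9]
control_team_post = [6, 6, 6]

effect = diff_in_diff(ai_team_pre, ai_team_post, control_team_pre, control_team_post)
print(f"Estimated effect on cycle time: {effect:+} days")
```

A negative value means the AI-enabled team’s cycle time fell by more than the control team’s over the same period. The estimate is only as good as the assumption that both teams would otherwise have followed similar trends, which is why controlling for team size and scope matters.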

5. Measure Second-Order Effects

Monitor rework, review load, and coordination time because those signals show whether faster output is creating downstream friction. If defect correction increases or review queues grow, net capacity does not improve even if raw throughput rises. That is why impact must be evaluated beyond first-order output.

6. Monitor System Behavior

Measure how the system behaves over time, not just whether output increases.

Track throughput volatility, commit reliability, forecast drift, and unplanned work ratio before and after AI adoption. If output rises temporarily but forecast accuracy declines or reactive work increases, instability is increasing.

Sustainable improvement appears as consistent throughput, stable forecasts, and fewer corrective interventions. Short-term spikes followed by correction cycles indicate that AI is amplifying variability rather than strengthening the system.
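Throughput volatility can be quantified in several ways. The sketch below uses the coefficient of variation (standard deviation divided by the mean) so that periods with different average output stay comparable. That choice, and the weekly counts, are assumptions for illustration.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values):
    """Population standard deviation divided by the mean."""
    avg = mean(values)
    if avg == 0:
        raise ValueError("mean must be non-zero")
    return pstdev(values) / avg


# Hypothetical completed work items per week.
pre_ai_weekly = [10, 11, 9, 10]
post_ai_weekly = [18, 6, 16, 8]

pre_cv = coefficient_of_variation(pre_ai_weekly)
post_cv = coefficient_of_variation(post_ai_weekly)
print(f"Average throughput: {mean(pre_ai_weekly)} -> {mean(post_ai_weekly)}")
print(f"Volatility (CV):    {pre_cv:.2f} -> {post_cv:.2f}")
```

In this example, average throughput rises while volatility rises far more, which matches the spike-then-correction pattern described above.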

Axify Intelligence operationalizes this measurement process. It continuously analyzes delivery data to detect bottlenecks, connect related signals, and explain root causes using your historical trends. It then recommends corrective actions tied to workflow mechanics.

Leaders can:

- Explore issues.

- Ask structured questions through the embedded AI assistant.

- Apply recommended changes directly.

Because it operates on your system data, not generic prompts, decisions are grounded in how your organization actually delivers.

Common Mistakes When Measuring AI Impact

AI measurement fails for two reasons: teams track the wrong signals and then draw the wrong conclusions from the signals they do have. When adoption scales without a disciplined evaluation plan, leaders can’t separate real system change from noise.

Common mistakes:

- Measuring tool activity instead of delivery outcomes: Tracking prompts, seats, sessions, or benchmark scores does not tell you whether system-level metrics (flow metrics, DORA metrics, review latency, rework, or forecast stability) improved. You end up with high adoption charts and no causal story.

- Treating AI as a tool add-on instead of a workflow change: AI changes how work is created, reviewed, tested, and released. If measurement stays at the “tool layer” (as we explained here), you miss second-order effects like review queue growth, larger PRs, or defect escape shifting downstream.

- Optimizing for speed in one stage: AI coding agents can reduce coding time while increasing review cycles, defect correction, or rollback frequency. If downstream load rises, end-to-end lead time and cost per change may not improve.

- Ignoring variance and predictability: Short spikes in output are easy to misread as improvement. If throughput variance increases, commit accuracy drops, or forecast drift grows, planning risk increases even when average output is higher.

- Using metrics as surveillance: Measuring individual output (or individual AI usage) drives local optimization and gaming (teams may under-report issues, avoid raising quality concerns, or push work downstream). It also reduces signal quality: people change behavior to satisfy the metric, which makes before/after comparisons less reliable.

- Changing too many variables at once: Rolling out new agents, prompt policies, or tooling configurations every week contaminates attribution. You can’t tell whether cycle time improved thanks to a certain AI tool, workflow changes, staffing changes, or a shifting definition of “done.”

What Good AI Measurement Enables

Good AI measurement lets you make defensible operational and financial decisions based on observed delivery behavior. It shifts the conversation from “Are people using AI?” to “What changed in flow, cost, and risk, and should we scale it?”

These are the outcomes that follow:

- Faster, safer decisions: Clear causal links between adoption and delivery outcomes reduce hesitation and allow earlier corrective action, because decisions rely on clear data. Axify Intelligence also helps you see and compare potential solutions, so you can implement the best one right from the platform.

- Earlier detection of downstream damage: Measurement surfaces second-order effects (review queue growth, rework spikes, higher rollback risk) before they compound into missed dates or incidents.

- Higher predictability: When you measure variance and commit reliability, you can separate short-lived output spikes from sustainable improvement. Forecasts get more stable because the system is less surprising. This predictability lowers escalation frequency and improves planning accuracy.

- More trust in data: When metrics reflect real workflow behavior, teams rely on them. Consistent signals replace anecdotal interpretation.

- Better capital allocation: When impact is quantified against cost drivers and risk exposure, you can scale AI where it improves outcomes and stop spend where it doesn’t, with a clear audit trail.

Solid AI Measurement in Software Delivery Starts with Axify

AI measurement becomes credible when it explains how adoption changes delivery flow, cost structure, risk exposure, and planning quality.

Throughout this guide, you saw that usage metrics and model benchmarks do not answer executive questions about predictability, margin, or capital allocation. What matters is whether cycle time shifts, rework declines, failure rates stabilize, and forecasts become more accurate.

Axify gives you more than visibility.

It connects AI adoption levels, acceptance rates, and interaction patterns to concrete delivery signals such as throughput stability, review latency, defect density, and forecast variance. As such, you understand what changed, where it changed, and what it means for delivery performance.

Axify Intelligence goes further by turning those signals into decisions.

It detects bottlenecks, explains root causes using your historical data, recommends corrective actions tied to workflow mechanics, and lets you act directly.

If you want disciplined AI evaluation that leads to faster, defensible decisions, book a demo with Axify and see how your data drives execution.

FAQs

How do I measure AI in software development?

Measure AI by comparing delivery, cost, risk, and decision metrics before and after adoption. Track cycle time, change failure rate, rework, and forecast accuracy rather than prompts or licenses. Tools like Axify help correlate adoption levels with workflow outcomes so you can see whether performance actually improved.

What’s the best AI measurement framework?

The best AI measurement framework evaluates system impact across delivery speed, cost drivers, risk exposure, and decision quality. A single metric cannot explain performance change.

But a structured model, such as Axify’s four-dimension approach, correlates AI usage and acceptance rates with observable shifts in throughput, defect correction, and predictability.

How long until I see the impact of AI?

You should expect a measurable impact within one to two planning cycles if adoption meaningfully changes workflow behavior. However, early improvements may affect speed before stability or cost savings appear. Sustainable impact shows up as consistent performance.

Can AI make performance worse?

Yes, AI can degrade performance if it increases rework, review load, or coordination overhead. Faster code generation without process alignment can raise change failure rates. Impact depends on how well AI integrates within your existing delivery practices.

How often should AI metrics be reviewed?

AI metrics should be reviewed at the same cadence as delivery performance, typically weekly for operational signals and monthly for trend analysis. Frequent review helps you detect volatility before it compounds.

What tools should I use for AI measurement in software development?

Use tools that connect adoption data with delivery metrics. Platforms like Axify combine workflow analytics with contextual insights, which allows you to interpret cause and effect across your system. Axify Intelligence also surfaces bottlenecks and recommends solutions based on these analytics, so leaders can act on data-driven decisions right from the platform.
